TL;DR:

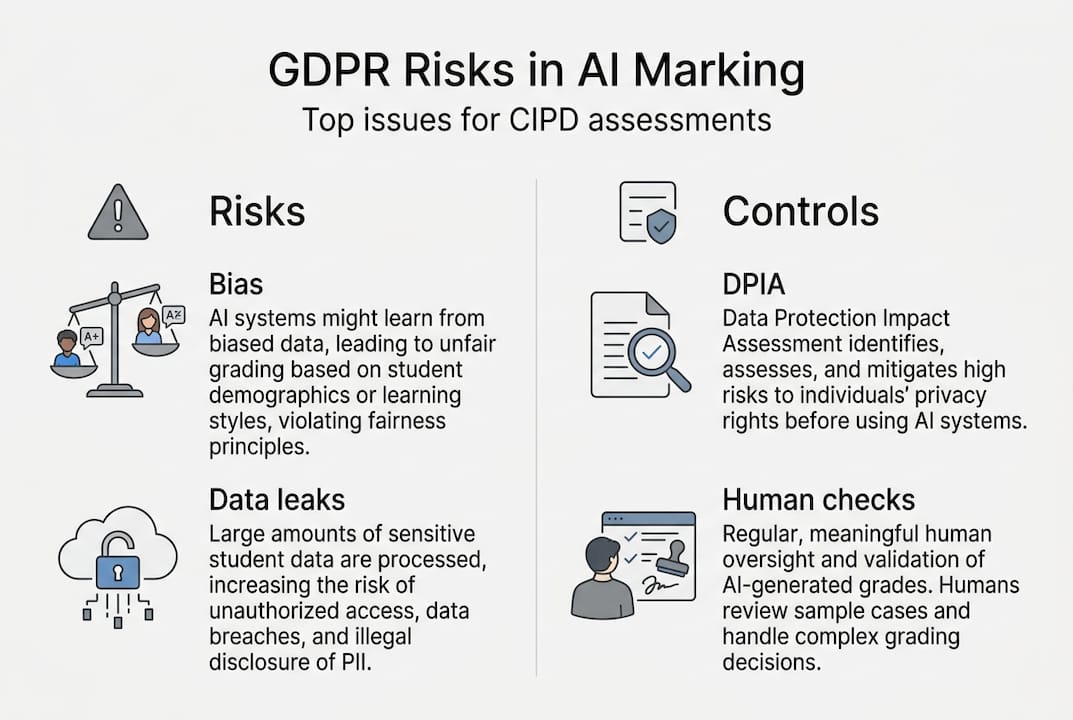

- Compliant AI-assisted marking requires careful planning and documentation against each GDPR principle.

- Human oversight is legally mandatory and essential for accountability and fairness in high-stakes assessments.

- Proper vendor agreements, risk assessments, and transparency build trust and protect against legal and reputational risks.

Adopting AI-assisted marking for CIPD qualifications can feel like a purely technical upgrade, but the moment learner data enters an AI system, you are operating inside a legal framework with real teeth. Many training providers discover GDPR obligations only after procurement, which is far too late. Non-compliance can trigger fines, damage your centre's reputation, and undermine learner trust in your assessment process. This guide cuts through the confusion by mapping the seven core GDPR principles to AI marking workflows, explaining when a Data Protection Impact Assessment is legally required, and giving you practical steps to build a compliant, auditable process from day one.

Table of Contents

- Core GDPR principles in AI-assisted educational assessments

- Mandatory Data Protection Impact Assessments (DPIAs) for AI marking

- Human oversight and rights in AI marking decisions

- Vendor selection, processor agreements, and special category data

- Why GDPR is your friend, not a foe: unpacking compliance for practical gains

- Stay compliant and efficient with trusted AI marking solutions

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| DPIA is essential | Every AI marking deployment in education must start with a well-documented DPIA to manage risk. |
| Human review required | Fully automated marking is not allowed; learners have rights to appeal or challenge AI-influenced grades. |
| Vendor agreements matter | Choose only GDPR-compliant vendors and secure detailed DPAs covering processing, data centres, and training data. |
| Special data needs care | Processing biometrics or potentially biased grading involves extra GDPR obligations and transparent communication. |
| Compliance enables trust | Adhering to GDPR is not just about legality; it’s the foundation for credibility in AI-powered education. |

Core GDPR principles in AI-assisted educational assessments

GDPR is not a single rule. It is a set of seven interlocking principles, and each one has direct implications for how you collect, process, store, and delete learner data in an AI marking context. AI marking and GDPR principles interact in ways that are easy to miss if you treat compliance as an afterthought.

The seven core principles that govern compliant AI marking are:

- Lawfulness, fairness, and transparency: You need a clear legal basis for processing, and learners must understand how their work is assessed.

- Purpose limitation: Data collected for marking cannot be repurposed, for example to train the AI model, unless the new purpose is compatible with the original one and has its own lawful basis.

- Data minimisation: Only the data strictly necessary for grading should be processed. Uploading full learner profiles when only assignment text is needed is a breach waiting to happen.

- Accuracy: AI outputs must be checked. Inaccurate marks that go unchallenged violate this principle.

- Storage limitation: Learner submissions and marks must be deleted once the retention period ends.

- Integrity and confidentiality: Submissions must be protected from unauthorised access or accidental loss.

- Accountability: You must be able to demonstrate compliance, not just claim it.

| GDPR principle | Risk in AI marking | Practical control |
|---|---|---|
| Transparency | Learners unaware AI was used | Disclose AI role in assessment policy |
| Data minimisation | Excess data uploaded to AI tool | Strip unnecessary fields before submission |
| Storage limitation | Submissions retained indefinitely | Set automated deletion schedules |
| Accountability | No audit trail of AI decisions | Log every AI output and human review |
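
As a rough illustration of the data minimisation and storage limitation controls in the table, the sketch below strips a learner record down to an allowlist before it reaches the AI tool and flags records whose retention period has elapsed. The field names, the `ALLOWED_FIELDS` set, and the 365-day `RETENTION_PERIOD` are all assumptions made for the example; your own retention schedule and your vendor's input format will differ.

```python
from datetime import date, timedelta

# Hypothetical allowlist: the only fields the marking tool is assumed to need.
ALLOWED_FIELDS = {"submission_id", "unit_code", "assignment_text"}

# Hypothetical retention period; take the real value from your retention schedule.
RETENTION_PERIOD = timedelta(days=365)


def minimise(record: dict) -> dict:
    """Return a copy of the record containing only allowlisted fields."""
    return {key: value for key, value in record.items() if key in ALLOWED_FIELDS}


def is_due_for_deletion(marked_on: date, today: date) -> bool:
    """True once the retention period since marking has fully elapsed."""
    return today >= marked_on + RETENTION_PERIOD


record = {
    "submission_id": "S-001",
    "unit_code": "UNIT-01",
    "assignment_text": "Learner's assignment text goes here",
    "learner_name": "Example Learner",   # not needed for grading, so dropped
    "date_of_birth": "1990-01-01",       # not needed for grading, so dropped
}

print(minimise(record))
print(is_due_for_deletion(date(2023, 1, 1), date(2024, 1, 1)))
```

An allowlist is used rather than a blocklist so that any new field added to the learner record is excluded by default until someone decides it is necessary for grading.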

In vocational settings like CIPD, accountability carries extra weight. Awarding bodies expect centres to demonstrate the integrity of every mark. Without documentation, you cannot defend a grading decision if a learner appeals.

"GDPR principles for AI-assisted marking include lawfulness, fairness and transparency, purpose limitation, data minimisation, accuracy, storage limitation, and accountability, which directly map to AI governance in education."

Pro Tip: Build your accountability trail from the start. Every AI-generated mark should be logged alongside the human reviewer's decision, creating a defensible record for both GDPR audits and awarding body scrutiny. Embedding human oversight in marking workflows is the single most effective accountability control you can implement.
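
One way to picture that accountability trail is a log with one entry per reviewed submission, pairing the AI output with the assessor's decision. The sketch below is a minimal illustration only: the `MarkingAuditEntry` fields and the `log_review` helper are hypothetical names, and the in-memory `StringIO` stands in for whatever append-only file or database table your centre actually uses.

```python
import io
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import TextIO


@dataclass(frozen=True)
class MarkingAuditEntry:
    """One AI-generated mark paired with the human reviewer's decision."""
    submission_id: str
    ai_mark: str
    ai_rationale: str
    reviewer_id: str
    final_mark: str
    reviewer_note: str
    reviewed_at: str  # ISO 8601 timestamp in UTC


def log_review(log: TextIO, entry: MarkingAuditEntry) -> None:
    """Append the entry to the log as one JSON object per line."""
    log.write(json.dumps(asdict(entry)) + "\n")


log = io.StringIO()  # stands in for an append-only file or database table
log_review(log, MarkingAuditEntry(
    submission_id="S-001",
    ai_mark="Pass",
    ai_rationale="Meets all assessment criteria",
    reviewer_id="assessor-17",
    final_mark="Pass",
    reviewer_note="Agreed after reading the full submission",
    reviewed_at=datetime.now(timezone.utc).isoformat(),
))
print(log.getvalue())
```

Keeping the reviewer's note next to the AI rationale is what lets you show, during an appeal or audit, that the human review was more than a rubber stamp.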

Mandatory Data Protection Impact Assessments (DPIAs) for AI marking

Once you understand the principles, the next question is how to systematically prove and document compliance, and that begins with a DPIA. Under Article 35 of UK GDPR, a DPIA is legally required before you deploy any processing activity that is likely to result in a high risk to individuals. Automated assessment of learner work almost certainly meets that threshold.

DPIAs are required before deploying AI tools that process personal data in educational assessments, covering risks like bias, data flows, vendor compliance, and international transfers. Skipping this step is not a minor oversight; it is a breach of UK GDPR itself.

A well-structured DPIA for AI-assisted marking should follow these steps:

- Describe the processing: What data is collected, from whom, and for what purpose?

- Assess necessity and proportionality: Is AI marking the least privacy-invasive way to achieve consistent grading?

- Identify and assess risks: Consider algorithmic bias, lack of explainability, third-party vendor data flows, and potential international data transfers.

- Identify mitigations: Human review, data minimisation controls, vendor DPAs, and encryption.

- Consult your Data Protection Officer (DPO): Seeking their advice is not optional if your organisation has designated one.

- Record and review: A DPIA is a living document. Review it whenever the tool or process changes.
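
The risk identification and mitigation steps above can be kept as a simple risk register. The sketch below is one possible shape, not a prescribed DPIA format: the three-point likelihood and severity scales, the multiplication used for scoring, and the threshold of 6 are all assumptions you would replace with the scales in your own DPIA template.

```python
from dataclasses import dataclass, field

# Hypothetical three-point scales; align these with your own DPIA template.
LIKELIHOOD = {"remote": 1, "possible": 2, "probable": 3}
SEVERITY = {"minimal": 1, "significant": 2, "severe": 3}


@dataclass
class Risk:
    description: str
    likelihood: str
    severity: str
    mitigations: list[str] = field(default_factory=list)

    def score(self) -> int:
        """Likelihood multiplied by severity, giving a value from 1 to 9."""
        return LIKELIHOOD[self.likelihood] * SEVERITY[self.severity]


def unmitigated_high_risks(risks: list[Risk], threshold: int = 6) -> list[Risk]:
    """Risks at or above the threshold that have no recorded mitigation."""
    return [r for r in risks if r.score() >= threshold and not r.mitigations]


register = [
    Risk("Algorithmic bias against some writing styles", "possible", "severe",
         ["Human review of every mark"]),
    Risk("Vendor transfers data outside the UK", "possible", "significant"),
    Risk("No explanation available for an AI mark", "probable", "significant"),
]

for risk in unmitigated_high_risks(register):
    print(risk.description, risk.score())
```

Re-running a check like this whenever the tool or process changes is one way to keep the DPIA a living document rather than a one-off form.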

Bias is a particularly acute risk in CIPD assessments. If the AI model was trained predominantly on one demographic's writing style, it may systematically under-mark learners from different linguistic or cultural backgrounds. Your DPIA should explicitly address how the vendor tests for and mitigates this.

International data transfers are another common blind spot. If your AI provider routes data through servers outside the UK or EU, you need either an adequacy decision or appropriate safeguards in place. Review AI feedback compliance requirements carefully before signing any vendor contract.

Pro Tip: Start your DPIA before you shortlist vendors. Knowing your risk profile in advance means you can ask the right questions during procurement rather than retrofitting compliance after the contract is signed. Reviewing AI workflow best practices early will sharpen your risk identification significantly.

Human oversight and rights in AI marking decisions

DPIAs highlight potential risks, but GDPR also draws hard lines, especially around fully automated high-stakes marking. Article 22 of UK GDPR restricts decisions based solely on automated processing that produce legal or similarly significant effects on individuals, allowing them only under narrow exceptions that still carry safeguards such as the right to human intervention. A CIPD qualification grade is exactly that kind of decision.

Human oversight is essential; AI cannot make sole high-stakes decisions in assessments like grading or certification without meaningful human review, aligning with GDPR Article 22 prohibitions on solely automated decisions.

Meaningful human review is not a rubber stamp. A qualified assessor must genuinely engage with the AI's output, check its rationale, and be empowered to override it. Learners also hold specific rights you must respect:

- Right to explanation: Learners can request a meaningful explanation of how their mark was reached, including the AI's role.

- Right to object: Learners can object to processing in certain circumstances and can contest a decision that relied on automated processing, so you must have a procedure to handle both.

- Right to rectification: If a mark is inaccurate, learners have the right to have it corrected.

- Right of access: Learners can request all personal data held about them, including AI-generated assessments.

Your appeals process must be updated to reflect the AI-assisted context. If a learner challenges a grade, they are entitled to know that AI was involved and to have a human assessor review the work independently. The importance of human review cannot be overstated here; it is both a legal requirement and a quality safeguard.

"Providers must embed human review as a structural feature of the workflow, not an optional add-on."

From a practical standpoint, your digital workflow should log every instance where a human reviewer confirmed, amended, or overrode an AI mark. This creates the audit trail that protects you under both GDPR and CIPD awarding body requirements. Strong data security protocols must underpin the entire process.
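
A workflow can enforce this structurally by refusing to release any AI mark that has no human review attached. The sketch below shows the idea under simplifying assumptions: `HumanReview`, `UnreviewedMarkError`, and `release_mark` are hypothetical names, and the outcome is reduced to "confirmed" or "amended". A check like this only proves that a review was recorded; whether the review was meaningful still depends on the assessor genuinely engaging with the work.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class HumanReview:
    reviewer_id: str
    final_mark: str


class UnreviewedMarkError(Exception):
    """Raised when an AI mark is about to be released without human review."""


def release_mark(ai_mark: str, review: Optional[HumanReview]) -> tuple[str, str]:
    """Return (final_mark, outcome); refuse to release an unreviewed AI mark."""
    if review is None:
        raise UnreviewedMarkError("A qualified assessor must review this mark first")
    # The assessor's mark always wins; the outcome records whether it differed.
    outcome = "confirmed" if review.final_mark == ai_mark else "amended"
    return review.final_mark, outcome


print(release_mark("Pass", HumanReview("assessor-17", "Pass")))
print(release_mark("Pass", HumanReview("assessor-17", "Refer")))

try:
    release_mark("Pass", None)
except UnreviewedMarkError as error:
    print("Blocked:", error)
```

Recording the outcome alongside each mark also gives you the confirmed and amended counts that an awarding body may ask about during quality assurance.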

Vendor selection, processor agreements, and special category data

Even with the right oversight internally, you are only as compliant as the tools and vendors you use. Procurement is where many providers make costly mistakes, often by selecting AI tools based on features alone without scrutinising data handling practices.

Under UK GDPR, your organisation is the data controller and your AI tool provider is the data processor. That relationship must be formalised in a Data Processing Agreement (DPA), which is mandatory. For AI marking, your DPA should specify:

- Processing instructions and the scope of permitted use

- Security standards the vendor must maintain

- Confirmation that data is stored in UK or EU-based data centres

- A clear prohibition on using student submissions to train or improve the AI model

- Sub-processor disclosure and approval requirements

When evaluating AI grading software options, ask vendors directly whether their model is trained on customer data. Many providers use submitted content to improve their systems unless explicitly prohibited. Unless learners were told about that use and it has its own lawful basis, it breaches the purpose limitation principle.

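Special category data adds another layer of complexity. Biometric data used to identify learners in proctoring falls under the Article 9 special category rules, while non-native speaker bias in essay grading is a fairness risk your DPIA must address. Where the EU AI Act applies, proctoring and grading systems can also carry high-risk obligations, and emotion recognition in education is prohibited outside narrow medical and safety exceptions. If your CIPD programme includes any proctored assessments that use facial recognition or keystroke analysis to identify learners, you are in special category territory and need both a lawful basis for processing and a separate Article 9 condition, such as explicit consent.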

| Compliance criterion | What to ask vendors |
|---|---|
| Data residency | Are servers in the UK or EU? |
| Model training | Is student data used to train the model? |
| DPA availability | Can they provide a compliant DPA? |
| Bias testing | How is algorithmic bias assessed and reported? |
| Sub-processors | Who else processes the data? |

When you compare AI tools for integrating AI in assessment, build this compliance checklist into your procurement scoring matrix so that data handling is scored alongside features.
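
One way to fold the vendor checklist into procurement is to treat each compliance criterion as a gate that must pass before a vendor is scored on features at all. The sketch below assumes exactly that design choice; the criterion names are hypothetical labels for the rows of the table above, and treating every criterion as mandatory is an assumption rather than a legal rule.

```python
# Hypothetical criterion names, one per row of the vendor checklist above.
CRITERIA = [
    "uk_or_eu_servers",
    "no_training_on_student_data",
    "compliant_dpa_available",
    "bias_testing_reported",
    "sub_processors_disclosed",
]


def failed_criteria(answers: dict[str, bool]) -> list[str]:
    """Criteria the vendor failed or did not answer."""
    return [name for name in CRITERIA if not answers.get(name, False)]


def is_eligible(answers: dict[str, bool]) -> bool:
    """A vendor proceeds to feature scoring only if every criterion passes."""
    return not failed_criteria(answers)


vendor_a = {name: True for name in CRITERIA}
vendor_b = {**vendor_a, "no_training_on_student_data": False}

print(is_eligible(vendor_a))
print(failed_criteria(vendor_b))
```

Treating an unanswered question as a failure keeps the burden of proof on the vendor, which fits the accountability principle discussed earlier.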

Why GDPR is your friend, not a foe: unpacking compliance for practical gains

Most training providers approach GDPR as a constraint, a list of things they cannot do. That framing is both limiting and inaccurate. The smarter view is that GDPR gives you a mature, structured framework for building AI marking systems that learners and awarding bodies can actually trust.

That framework adapts to AI in assessments through tools you already have, such as DPIAs, Records of Processing Activities (ROPAs), and DPO consultations. You do not need to build anything from scratch.

Transparency about AI involvement in marking does not erode trust; it builds it. Learners who understand how their work is assessed, and who know a qualified human has reviewed the output, are far more likely to accept and act on feedback. That is a direct improvement in learning outcomes, not just a legal tick-box.

Providers who invest in marking accuracy with AI through a rights-focused, documented approach also find that awarding bodies respond positively during quality assurance visits. Compliance becomes a competitive differentiator, not just a cost of doing business.

Stay compliant and efficient with trusted AI marking solutions

Ready to put these principles into practice? EduMark.ai is built specifically for CIPD training providers who need AI-assisted marking that is transparent, auditable, and GDPR-compliant by design.

The platform supports your DPIA requirements by providing clear documentation of how marks are generated, confidence scores for every decision, and structured human review at every stage. Submissions are processed securely, with no student data used for model training. If you want to improve turnaround times and marking consistency without putting your centre's compliance at risk, trusted AI marking for CIPD is worth exploring today.

Frequently asked questions

Is AI-only marking ever permitted under GDPR for CIPD assessments?

No, GDPR requires meaningful human involvement in high-stakes assessment decisions. Article 22 restricts solely automated decisions that significantly affect individuals to narrow exceptions, and CIPD qualification grades are decisions of that kind.

What is the role of a DPIA for AI-assisted educational marking?

A DPIA is legally required to identify and mitigate risks before you deploy AI in assessments. DPIAs are mandatory under Article 35 of UK GDPR whenever processing is likely to result in high risk to learners.

What should be included in a Data Processing Agreement with an AI marking provider?

A DPA should specify processing instructions, security standards, and use of UK or EU data centres, and should explicitly prohibit student data being used to train models. DPAs are mandatory and define the controller-processor relationship clearly.

How does GDPR apply to biometric data or non-native speaker bias in marking?

Biometric data used to identify learners triggers Article 9 special category rules, and non-native speaker bias is a recognised fairness risk requiring transparency and mitigation. Where the EU AI Act applies, proctoring and grading systems can also carry high-risk obligations, and emotion recognition in education is prohibited outside narrow medical and safety exceptions.