TL;DR:

- Educational assessment is a continuous feedback process vital for teaching, learner progress, and quality assurance.

- Combining formative, criterion-referenced, and AI-assisted assessments enhances reliability and reduces failure rates.

- Responsible AI use involves initial automated grading supported by human review to ensure professionalism and accuracy.

Assessment is not something that happens at the end of a course. For CIPD educators and assessors, it runs through every stage of the learning journey, shaping how you teach, how learners progress, and how your centre demonstrates quality. Yet many training providers still treat it as a box-ticking exercise rather than a strategic tool. This guide breaks down what educational assessment truly means, walks through the key types you need in your toolkit, explains the principles that separate strong practice from weak, and explores how AI is changing the grading landscape for professional qualifications.

Table of Contents

- What is educational assessment and why does it matter?

- Types of educational assessment: getting the balance right

- Principles of effective educational assessment

- AI-assisted grading: capabilities, limits, and best practice

- A practical perspective: achieving rigorous, human-centred assessment

- Upgrade your assessment process with EduMark AI

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Assessment goes beyond grading | It supports teaching, learning, and leadership decisions at all levels. |
| Use a mix of methods | Balancing formative and summative assessment gives a clearer, fairer view of student progress. |
| Rigour matters | Adhering to validity and reliability ensures assessments are fair and meaningful. |
| AI enhances, but does not replace, humans | For best results, combine AI marking with expert human judgement, especially for complex or critical assignments. |

What is educational assessment and why does it matter?

Most people picture a test or an assignment when they hear the word assessment. The reality is far broader. Educational assessment is the systematic process of gathering, analysing, and interpreting evidence of student learning to inform instruction, evaluate effectiveness, and support decision-making for students, teachers, and policymakers. That definition shifts assessment from a measurement tool into something closer to a feedback engine for the entire educational system.

For CIPD centres specifically, this distinction matters enormously. Assessment does not just tell you whether a learner passed. It tells you whether your teaching is working, where learners are struggling before they fail, and whether your programme design is fit for purpose. It informs everything from individual coaching conversations to strategic decisions about course content.

Assessment is not the end of learning. It is the mechanism through which learning becomes visible, adjustable, and meaningful.

There are several functions assessment serves beyond grading:

- Diagnostic function: Identifying gaps before instruction begins so teaching can be targeted

- Formative function: Providing ongoing feedback that adjusts learning in real time

- Summative function: Confirming achievement at key milestones for certification or progression

- Evaluative function: Helping institutions judge the quality of their own programmes

- Policy function: Supplying data that informs national standards and qualification frameworks

A common misconception is that rigorous assessment means more tests. In practice, over-testing without acting on results is one of the most wasteful things a training centre can do. The value is not in the volume of assessments but in what you do with the evidence they generate. Platforms built around AI in assessment are beginning to make it easier to act on that evidence at scale, but the principle holds regardless of the tools you use. Quality structured feedback is what turns assessment data into genuine learner improvement.

Types of educational assessment: getting the balance right

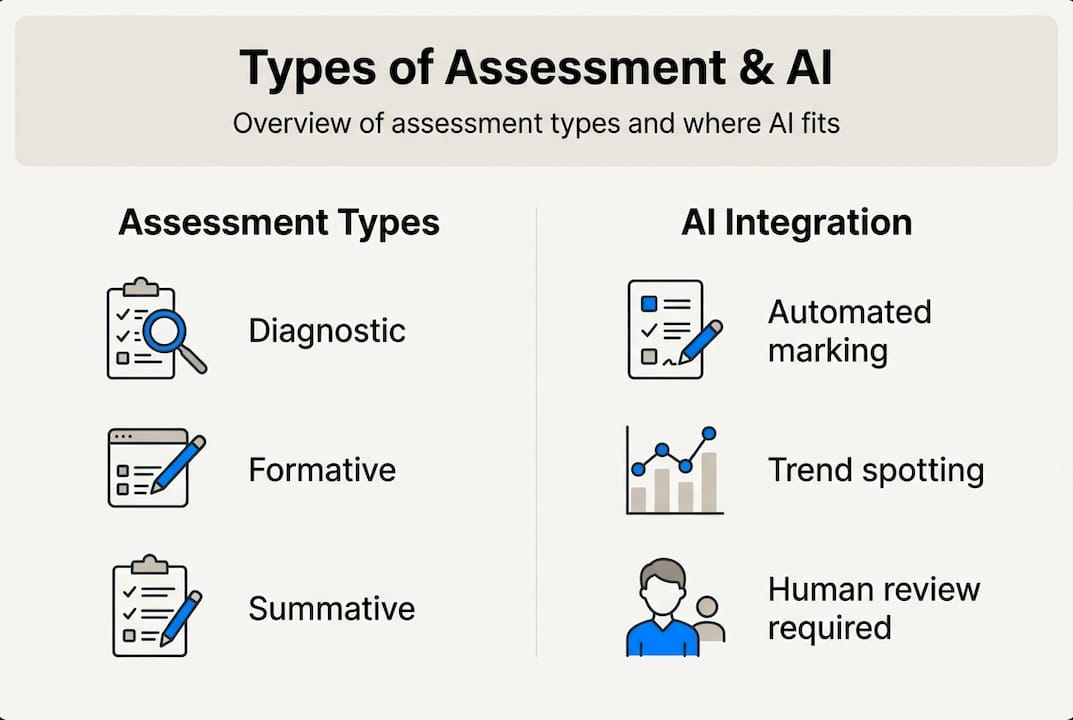

Not all assessments serve the same purpose, and using the wrong type at the wrong moment is a surprisingly common problem in professional training contexts. The OECD's assessment overview identifies key methodologies including diagnostic, formative, summative, ipsative, criterion-referenced, and norm-referenced assessments, each with a distinct role in the learning cycle.

Here is a quick comparison to help you decide which type fits which situation:

| Assessment type | When to use | What it measures | Key risk |
|---|---|---|---|
| Diagnostic | Before instruction begins | Prior knowledge and gaps | Ignored if results not acted on |
| Formative | Throughout learning | Progress and understanding | Underused in favour of summative |
| Summative | End of unit or qualification | Achievement against standards | Over-relied upon as the only measure |
| Ipsative | Ongoing | Individual improvement over time | Difficult to standardise at scale |
| Criterion-referenced | When standards matter | Performance against fixed criteria | Requires very clear rubric design |
| Norm-referenced | Comparative contexts | Performance relative to peers | Can demotivate lower-performing learners |

For CIPD qualifications, criterion-referenced and formative assessments tend to be the most valuable combination. Learners are measured against defined competency standards, not against each other, and ongoing feedback helps them reach those standards before the summative moment arrives.
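To make the difference between criterion-referenced and norm-referenced judgements concrete, here is a minimal Python sketch. The pass mark, the learner names, and the scores are all invented for illustration; they are assumptions, not CIPD figures.

```python
# Hypothetical pass mark for illustration only; not a CIPD figure.
PASS_MARK = 50


def criterion_referenced(scores: dict[str, int], pass_mark: int = PASS_MARK) -> dict[str, str]:
    """Judge every learner against the same fixed standard."""
    return {
        name: "met" if score >= pass_mark else "not yet met"
        for name, score in scores.items()
    }


def norm_referenced(scores: dict[str, int]) -> dict[str, int]:
    """Rank every learner against the cohort (1 = highest; ties share a rank)."""
    ordered = sorted(scores.values(), reverse=True)
    return {name: ordered.index(score) + 1 for name, score in scores.items()}


# Invented cohort data.
cohort = {"Asha": 72, "Ben": 58, "Chloe": 55, "Dev": 51}

print(criterion_referenced(cohort))
print(norm_referenced(cohort))
```

In this sample, Dev ranks last in the cohort yet still meets the fixed standard. That is the case a norm-referenced view can misrepresent and a criterion-referenced view handles correctly.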

Here is a practical sequence many effective CIPD centres follow:

- Use diagnostic assessment at enrolment to identify knowledge gaps and tailor induction

- Build regular formative checkpoints into each unit, not just at the end

- Use criterion-referenced summative assessments aligned to CIPD level descriptors

- Apply ipsative methods to help learners track their own development journey

- Review norm-referenced data at programme level to spot systemic issues in delivery

When comparing AI grading software options, it is worth checking which assessment types each tool is designed to support. Not all platforms handle open-ended, formative work as well as they handle structured summative tasks. Your assessment feedback guide should reflect the type of assessment being used, not apply a one-size-fits-all template.

Pro Tip: If your centre relies almost entirely on summative assessments, you are likely seeing avoidable fails. Introducing structured formative checkpoints at the midpoint of each unit can help reduce final submission failures, because learners know what is expected before the high-stakes moment arrives.

Principles of effective educational assessment

Knowing the types of assessment is only half the picture. How you design and deliver them determines whether they actually work. Effective assessment rests on five principles: validity, reliability, usability, objectivity, and norms. Each principle addresses a different failure point in assessment design.

- Validity: Does the assessment actually measure what it claims to? A written essay on HR policy is not a valid measure of whether someone can conduct a difficult conversation.

- Reliability: Does the assessment produce consistent results across different assessors, times, and contexts? Inconsistent marking is one of the most damaging problems in professional qualifications.

- Usability: Is the assessment practical to administer, complete, and mark? Overly complex tasks create noise that obscures the signal you are trying to read.

- Objectivity: Are marks free from assessor bias, personal preference, or irrelevant factors like writing style or cultural expression?

- Norms and alignment: Are the standards benchmarked against recognised frameworks, such as CIPD level descriptors, so results are meaningful beyond your own centre?

Rubrics are the single most effective tool for improving reliability and objectivity simultaneously. A well-constructed rubric makes the expected standard explicit, reduces the cognitive load on assessors, and gives learners a clear target. Without one, two assessors marking the same piece of work can legitimately arrive at very different conclusions.
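One way to check whether two assessors are marking consistently is to compute an agreement statistic on a double-marked sample. The sketch below uses Cohen's kappa, a standard measure that corrects raw agreement for the agreement expected by chance. The article does not prescribe this method, so treat it as one possible approach; the function name and the sample marks are hypothetical.

```python
from collections import Counter


def cohens_kappa(marks_a: list[str], marks_b: list[str]) -> float:
    """Chance-corrected agreement between two assessors on the same scripts."""
    if len(marks_a) != len(marks_b) or not marks_a:
        raise ValueError("Both assessors must mark the same non-empty set of scripts")
    n = len(marks_a)
    # Proportion of scripts where both assessors gave the same grade.
    observed = sum(a == b for a, b in zip(marks_a, marks_b)) / n
    # Agreement expected if each assessor graded at random with their own grade frequencies.
    freq_a, freq_b = Counter(marks_a), Counter(marks_b)
    expected = sum(freq_a[grade] * freq_b[grade] for grade in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both assessors used one identical grade throughout
    return (observed - expected) / (1 - expected)


# Invented double-marking sample of eight scripts.
assessor_1 = ["pass", "pass", "fail", "pass", "fail", "pass", "fail", "pass"]
assessor_2 = ["pass", "fail", "fail", "pass", "fail", "pass", "pass", "pass"]

print(round(cohens_kappa(assessor_1, assessor_2), 2))
```

Here the assessors agree on six of eight scripts (75%), but kappa comes out noticeably lower because a good share of that agreement would be expected by chance. A low value on a real sample is a prompt to revisit the rubric wording or run a standardisation session.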

Bias is a subtler problem. It can creep in through assessor familiarity with a learner, assumptions about writing style, or even the order in which scripts are marked. Research into assessment for learning consistently shows that structured marking frameworks reduce these effects. Your CIPD assessment checklist should include a bias audit step, particularly for subjective, open-ended tasks. Pairing that with effective feedback methods ensures that even when marks differ slightly, the developmental guidance remains consistent and useful.

AI-assisted grading: capabilities, limits, and best practice

Artificial intelligence is changing what is possible in educational assessment, but it is not changing the underlying principles. Speed and scale are where AI genuinely excels. A platform can process hundreds of submissions in the time it takes a human assessor to mark a dozen, and it can flag inconsistencies across a cohort that a tired assessor might miss at 11pm on a Sunday.

| Capability | AI grading | Human-only grading |
|---|---|---|
| Speed | Very high | Moderate |
| Consistency across large cohorts | High | Variable |
| Handling nuanced arguments | Limited | Strong |
| Detecting plagiarism patterns | High | Low |
| Contextual judgement | Weak | Strong |
| Cost at scale | Low | High |
| Handling non-standard responses | Poor | Good |

The limitations are real and worth taking seriously. Research on AI grading shows that conservative AI marking leads to false fails, with one study finding a 92.6% miss rate on perfect answers in edge cases. AI also struggles with handwriting, complex maths notation, and argumentative tasks where the quality of reasoning matters more than keyword presence. These are not minor footnotes. For CIPD assessments, which frequently involve reflective writing and applied HR scenarios, these gaps are significant.
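If you want to quantify false fails in your own centre, one simple approach is to compare AI decisions with moderated human decisions on the same sample. The sketch below is an assumption about how you might do that, not a description of any particular tool; the function name and the sample decisions are hypothetical.

```python
def false_fail_rate(ai_pass: list[bool], human_pass: list[bool]) -> float:
    """Share of submissions the human assessor passed that the AI failed."""
    if len(ai_pass) != len(human_pass):
        raise ValueError("Both lists must cover the same submissions")
    # Keep only the AI decisions for work the human assessor judged a pass.
    ai_on_human_passes = [ai for ai, human in zip(ai_pass, human_pass) if human]
    if not ai_on_human_passes:
        return 0.0  # nothing passed by the human, so no false fails are possible
    return sum(not ai for ai in ai_on_human_passes) / len(ai_on_human_passes)


# Invented sample: True means "pass".
human_decisions = [True, True, True, True, False, True]
ai_decisions = [True, False, True, False, False, True]

print(false_fail_rate(ai_decisions, human_decisions))
```

In this invented sample the AI fails two of the five submissions the human passed. Tracking this figure separately for reflective and scenario-based tasks shows where the human review layer matters most.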

AI works best as a first-pass filter and consistency checker, not as the final word on professional competence.

The strongest AI assessment workflows combine automated initial marking with structured assessor review. AI handles the volume; humans handle the judgement. This is not a compromise. It is a better system than either approach alone. The human review step in AI grading is where professional expertise adds irreplaceable value, catching the cases where a technically correct answer misses the point entirely. Responsible AI ethics in assessment practice means being transparent with learners about how their work is evaluated and ensuring human oversight is built into every high-stakes decision.
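The first-pass-then-review workflow can be sketched as a simple routing rule. Everything here is hypothetical: the `FirstPassResult` fields, the thresholds, and the two review levels are assumptions chosen for illustration, not the behaviour of any real platform. Note that every submission still reaches a human; the rule only decides how deep the review goes.

```python
from dataclasses import dataclass


@dataclass
class FirstPassResult:
    """Output of a hypothetical AI first-pass marker."""
    submission_id: str
    provisional_mark: int  # 0-100 on an assumed scale
    confidence: float      # 0.0-1.0, as reported by the assumed marker
    high_stakes: bool      # e.g. a final summative submission


def review_level(
    result: FirstPassResult,
    pass_mark: int = 50,          # assumed threshold
    borderline: int = 5,          # assumed margin above the pass mark
    min_confidence: float = 0.8,  # assumed confidence floor
) -> str:
    """Decide how much human attention a submission needs; never zero."""
    if result.high_stakes:
        return "full review"
    if result.confidence < min_confidence:
        return "full review"
    if result.provisional_mark < pass_mark + borderline:
        # Covers provisional fails and borderline passes, guarding against false fails.
        return "full review"
    return "sign-off"


# Invented examples.
print(review_level(FirstPassResult("s1", 80, 0.95, False)))
print(review_level(FirstPassResult("s2", 52, 0.95, False)))
```

The design choice worth noting is that a provisional fail is never treated as final: it always triggers a full review, which is where false fails get caught.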

Pro Tip: Before adopting any AI grading tool, test it against a sample of your most complex, open-ended submissions. If it struggles with your hardest cases, you need a robust human review layer built into the workflow from day one, not added as an afterthought.

A practical perspective: achieving rigorous, human-centred assessment

Here is something the assessment technology conversation often misses: the risk is not that AI will replace human judgement. The risk is that busy centres will let it, because the pressure to process more submissions faster is relentless.

We have seen this pattern with automated assessment for CIPD centres. The technology gets adopted for efficiency, and gradually the human review step gets compressed or skipped for lower-risk submissions. Then a cohort of learners receives feedback that is technically accurate but professionally hollow, and the centre's reputation suffers damage that takes years to repair.

The most effective CIPD assessment practice we have observed blends formative checkpoints, criterion-referenced summative tasks, and AI-assisted marking with mandatory assessor sign-off. No single element carries the whole load. Formative assessment catches struggling learners early. Summative assessment confirms competence. AI speeds up the process and surfaces patterns. Human review ensures the judgement is sound and the feedback is meaningful. That combination is not just good practice. It is what professional learners deserve.

Upgrade your assessment process with EduMark AI

If you are looking to bring this kind of rigour and efficiency to your CIPD centre without sacrificing quality, EduMark.ai is built precisely for that challenge.

EduMark combines AI-assisted marking with mandatory human review, giving assessors structured feedback tools, confidence checks, and transparent rationale embedded directly into Word documents. Every submission is handled within a GDPR-compliant environment, so your centre stays protected as well as productive. Whether you are processing ten submissions a week or several hundred, AI-assisted CIPD grading through EduMark scales with your volume while keeping expert judgement at the centre of every decision. Find out how EduMark can help your team mark faster, more consistently, and with greater confidence.

Frequently asked questions

What are the three main types of educational assessment?

The three primary types are diagnostic, which identifies prior knowledge before instruction; formative, which provides ongoing feedback during learning; and summative, which measures achievement at the end of a unit or qualification.

How does AI improve the assessment process for CIPD educators?

AI speeds up initial marking and helps identify trends across large cohorts, but edge cases and nuanced tasks require human review to avoid false fails and ensure professional judgement is applied where it matters most.

What makes an assessment 'valid' and 'reliable'?

Validity means the assessment measures what it is designed to measure, while reliability means it produces consistent results across different assessors, times, and learner groups.

Why do CIPD centres need both formative and summative assessments?

Formative assessments allow learners to develop and adjust before high-stakes moments, while summative assessments confirm competence for certification. The OECD recommends balancing both for a complete and fair picture of learner achievement.