Many educators assume AI will one day mark every assignment without human input. That assumption is already shaping policy debates across UK training centres and universities, but it misses the point entirely. In the UK, AI-assisted marking is designed to support human judgement, not replace it. This guide walks through how AI actually works in assessment, what the evidence says about accuracy, where the real risks lie, and how your institution can adopt it responsibly.

Table of Contents

- Setting the scene: why AI matters in UK assessment

- How AI-assisted assessment actually works

- Accuracy and efficiency: what the evidence shows

- Limits, risks and regulatory realities

- Balancing efficiency with integrity: contrasting viewpoints

- Practical steps to implement AI assessment responsibly

- Explore AI tools for compliant, efficient assessment

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI assists, not replaces | In the UK, AI supports marking but human judgement remains essential to meet regulations and quality standards. |
| High accuracy for objective tasks | AI tools are extremely reliable for marking straightforward responses like MCQs and short answers, saving time and improving consistency. |
| Compliance is key | Institutions must use GDPR and Ofqual-compliant AI solutions and always ensure human oversight of marking decisions. |
| Understand risks and limits | Bias, transparency, and fairness are known risks of AI; using mixed-method assessment reduces these threats. |
| Start with practical steps | Success relies on piloting, staff training, and ongoing review using fully compliant, sector-approved tools. |

Setting the scene: why AI matters in UK assessment

The UK's regulatory environment shapes everything about how AI can be used in marking. Ofqual sets strict rules on assessment integrity, and GDPR governs how learner data is processed and stored. Any AI tool that touches student work must satisfy both. That is not a barrier to adoption; it is a framework that makes adoption safer and more defensible.

Across the sector, the pressure to improve feedback quality while managing assessor workloads has never been greater. Training centres handling CIPD qualifications, for instance, often process hundreds of submissions per cohort. Turnaround times affect learner progression, and inconsistent marking affects trust. AI offers a practical route to address both, provided it is used within a clear governance structure.

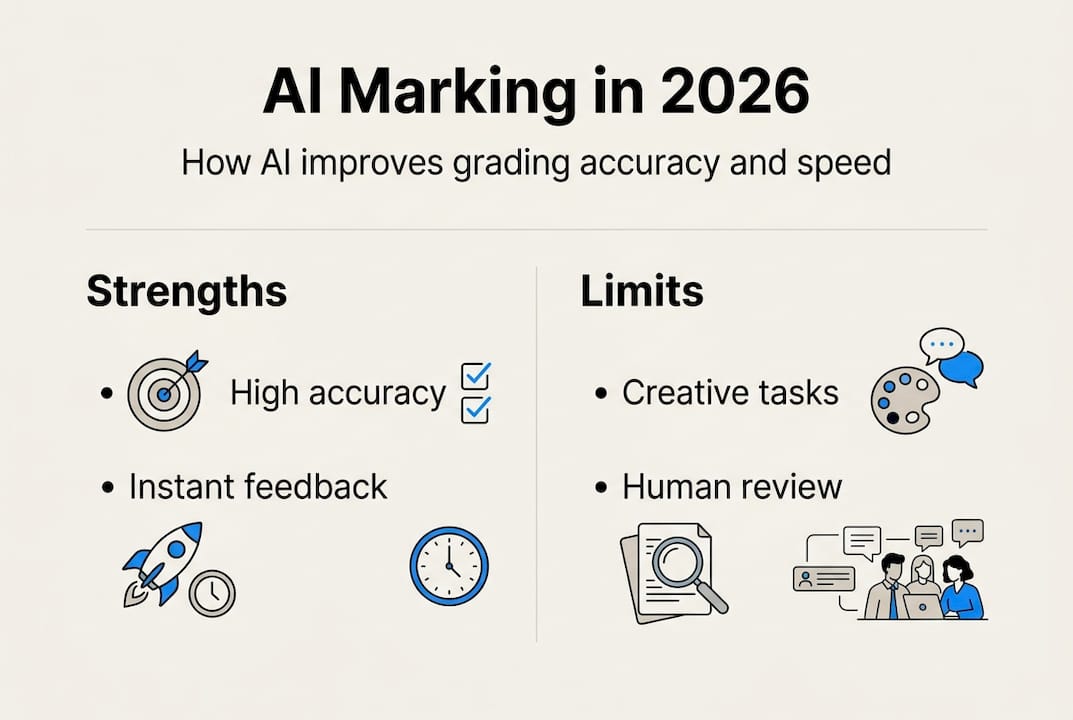

The principles of AI use in marking published by the UK government confirm that AI can enhance grading accuracy and speed up feedback, but only when human oversight is built into the process. That oversight is not optional. It is a regulatory requirement.

Key drivers pushing UK institutions towards AI-assisted assessment include:

- Consistency: Reducing marker-to-marker variation across large cohorts

- Speed: Cutting feedback turnaround from weeks to days

- Compliance: Meeting GDPR and Ofqual requirements with auditable workflows

- Scalability: Supporting growing learner numbers without proportional increases in assessor time

Understanding AI ethics in UK assessment and AI compliance for assessments are essential starting points before any tool is selected or piloted.

How AI-assisted assessment actually works

AI does not read an essay the way a human does. It analyses patterns, compares text against rubric criteria, flags keywords, and generates structured feedback based on what it has been trained to recognise. The human assessor then reviews, adjusts, and approves. That final step is not a formality; it is where professional judgement lives.

The mechanics typically involve rubric embedding, keyword detection, comparative judgement, and generative AI for feedback drafting, using approved tools like Claude AI or Microsoft Copilot. Each tool handles different parts of the workflow, and no single platform does everything.

Here is how a typical AI-assisted marking process unfolds:

- Rubric input: Assessment criteria are uploaded and mapped to mark bands

- Submission upload: Learner work is ingested by the platform

- Automated analysis: AI flags relevant content, gaps, and potential marks

- Feedback drafting: Generative AI produces structured written comments

- Human review: The assessor reads, edits, and approves the final mark and feedback

- Output delivery: Marked work with inline comments is returned to the learner
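
The steps above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular platform works: the function names, the keyword-matching "AI" step, and the one-mark-per-criterion scoring are all hypothetical stand-ins. The point it shows is structural: only the human review step can produce a final result.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DraftResult:
    """AI output for one submission: a suggestion, never a final mark."""
    suggested_mark: int
    draft_feedback: str


@dataclass
class FinalResult:
    mark: int
    feedback: str
    approved_by: str  # the human assessor who signed off


def ai_first_pass(submission: str, rubric: dict[str, list[str]]) -> DraftResult:
    """Hypothetical stand-in for the AI step: one mark per criterion whose keywords appear."""
    text = submission.lower()
    met = [c for c, keywords in rubric.items() if any(k in text for k in keywords)]
    missed = [c for c in rubric if c not in met]
    feedback = f"Met: {', '.join(met) or 'none'}. Gaps: {', '.join(missed) or 'none'}."
    return DraftResult(suggested_mark=len(met), draft_feedback=feedback)


def human_review(draft: DraftResult, assessor: str,
                 mark_override: Optional[int] = None,
                 feedback_override: Optional[str] = None) -> FinalResult:
    """Only this step returns a FinalResult, so nothing is released without sign-off."""
    if not assessor:
        raise ValueError("A named human assessor must approve every mark.")
    return FinalResult(
        mark=draft.suggested_mark if mark_override is None else mark_override,
        feedback=draft.draft_feedback if feedback_override is None else feedback_override,
        approved_by=assessor,
    )


# Illustrative rubric and submission (made up for this sketch).
rubric = {"legislation": ["equality act"], "evaluation": ["however", "on the other hand"]}
draft = ai_first_pass("The Equality Act 2010 applies here.", rubric)
final = human_review(draft, assessor="J. Smith", mark_override=2)
```

Here the assessor overrides the suggested mark, which is exactly the kind of adjustment the human review step exists to allow.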

The table below shows how AI-assisted marking compares to traditional human-only marking across key dimensions:

| Dimension | Human-only marking | AI-assisted marking |
|---|---|---|
| Consistency | Variable across markers | High, with human check |
| Speed | Slow for large cohorts | Significantly faster |
| Feedback depth | Dependent on assessor | Structured and detailed |
| Compliance audit trail | Manual | Automated and documented |
| Suitability for creative work | High | Limited without oversight |

Pro Tip: Before selecting any AI marking tool, verify that it is approved for use under Ofqual guidelines and that its data processing is fully GDPR-compliant. Review the assessment criteria and tools checklist to ensure alignment before piloting.

For training centres considering a move to automated marking, the workflow above provides a clear model to adapt.

Accuracy and efficiency: what the evidence shows

The data on AI marking accuracy is impressive for the right types of task. For multiple-choice questions, AI achieves 100% accuracy in controlled conditions. For short-answer questions, the average deviation is less than one mark. Crucially, AI is often more consistent than human markers: studies show an average difference of 0.22 marks for AI, compared with 0.55 marks between human markers.

The QMUL pilot data is particularly striking. Institutions using AI-assisted tools reported a 50 to 60% reduction in marking time. For a training centre processing 400 CIPD submissions per quarter, that translates to dozens of assessor hours saved per cycle.
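
To see how the time saving scales, the arithmetic can be worked through as below. The 400 submissions and the 50 to 60% range come from the text; the 20 minutes per submission is an assumption made purely for illustration, so substitute your own baseline.

```python
SUBMISSIONS_PER_QUARTER = 400    # from the example in the text
MINUTES_PER_SUBMISSION = 20      # ASSUMPTION: illustrative only, not a pilot figure
REDUCTION_RANGE = (0.50, 0.60)   # reported reduction in marking time


def hours_saved(submissions: int, minutes_each: float, reduction: float) -> float:
    """Assessor hours saved for a given fractional reduction in marking time."""
    baseline_hours = submissions * minutes_each / 60
    return baseline_hours * reduction


low, high = (
    hours_saved(SUBMISSIONS_PER_QUARTER, MINUTES_PER_SUBMISSION, r)
    for r in REDUCTION_RANGE
)
print(f"Estimated saving: {low:.0f} to {high:.0f} assessor hours per quarter")
# prints "Estimated saving: 67 to 80 assessor hours per quarter"
```

Under that assumed baseline, the saving sits comfortably in the "dozens of hours" range; a shorter or longer marking time per submission moves the figure proportionally.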

| Assessment type | AI accuracy | Human consistency (avg. deviation) | AI consistency (avg. deviation) |
|---|---|---|---|
| Multiple-choice questions | 100% | 0.55 marks | 0.22 marks |
| Short-answer questions | High (less than 1 mark off) | Variable | More stable |
| Extended written work | Moderate | Variable | Requires human review |
| Creative or reflective tasks | Low | High | Not recommended alone |
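
One common way to compute an "average deviation" between two sets of marks is the mean absolute difference, sketched below. The studies behind the figures in this section may define it differently, and the marks here are made up for illustration.

```python
from statistics import mean


def mean_abs_difference(marks_a: list[float], marks_b: list[float]) -> float:
    """Average size of the gap between two markers on the same scripts."""
    if len(marks_a) != len(marks_b) or not marks_a:
        raise ValueError("Both markers must have marked the same non-empty set of scripts.")
    return mean(abs(a - b) for a, b in zip(marks_a, marks_b))


# Made-up marks for five scripts, for illustration only.
human_1 = [7, 5, 8, 6, 9]
human_2 = [8, 5, 7, 6, 8]
ai_marks = [7, 5, 8, 7, 9]

human_vs_human = mean_abs_difference(human_1, human_2)  # 0.6
ai_vs_human = mean_abs_difference(ai_marks, human_1)    # 0.2
```

Running the same calculation on your own double-marked sample gives a baseline for human-to-human variation against which any AI tool can be compared.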

"AI-assisted tools are not about removing the assessor. They are about giving the assessor better information, faster, so their judgement is more informed and their time is better spent."

That said, the evidence also shows clear limitations. AI performs poorly on GCSE and A-level creative writing, where nuance, voice, and originality matter. The importance of human review cannot be overstated in these contexts. Knowing where AI adds value and where it does not is the foundation of a responsible implementation strategy. Institutions that want to give effective feedback at scale will find AI most useful for structured, criteria-referenced tasks.

Limits, risks and regulatory realities

AI cannot be used as the sole marker for high-stakes assessments. Ofqual is explicit on this point. The principles of AI use in marking prohibit AI from making final grading decisions without human oversight, particularly where results affect learner progression or qualification outcomes.

Beyond regulation, there are genuine technical risks that every institution must understand:

- Bias in training data: If the AI was trained on a narrow dataset, it may systematically disadvantage certain learner groups

- Lack of transparency: Many AI systems cannot explain why they assigned a particular mark, creating a "black box" problem

- Non-determinism: The same submission may receive slightly different outputs on different runs

- Demographic fairness: AI tools must be tested across diverse learner populations before deployment

- Creative and subjective work: AI struggles with tasks that require contextual, cultural, or emotional understanding

"Transparency is not a nice-to-have in AI marking. It is a legal and ethical requirement under UK data protection law and Ofqual's assessment integrity standards."

Pro Tip: Audit your AI tool's outputs regularly against a sample of human-marked submissions. If you notice patterns of under- or over-marking for specific learner groups, pause and investigate before continuing. Review AI marking ethics and assessment compliance guidance to build a robust review process.
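
A regular audit of this kind could look something like the sketch below. It assumes you hold a sample where each submission has both a human mark and an AI mark plus a group label; the function names, the sample data, and the one-mark tolerance are all hypothetical choices for illustration, not regulatory thresholds.

```python
from collections import defaultdict
from statistics import mean


def signed_gap_by_group(sample):
    """Mean of (AI mark - human mark) per group; negative means AI under-marks."""
    gaps = defaultdict(list)
    for group, human_mark, ai_mark in sample:
        gaps[group].append(ai_mark - human_mark)
    return {group: mean(values) for group, values in gaps.items()}


def groups_to_investigate(sample, tolerance=1.0):
    """Groups whose average gap exceeds the tolerance (an assumed threshold)."""
    return sorted(
        group
        for group, gap in signed_gap_by_group(sample).items()
        if abs(gap) > tolerance
    )


# Made-up audit sample: (learner_group, human_mark, ai_mark).
audit_sample = [
    ("A", 10, 10), ("A", 12, 13),
    ("B", 11, 9), ("B", 14, 12), ("B", 9, 8),
]
flagged = groups_to_investigate(audit_sample)  # ["B"]
```

A signed gap is used rather than an absolute one so that systematic under-marking and over-marking show up as distinct patterns instead of cancelling into a single "error" figure.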

Data security is equally important. Learner submissions contain personal data, and any platform processing that data must comply with UK GDPR. Always review data security in assessments before onboarding a new tool.

Balancing efficiency with integrity: contrasting viewpoints

Not everyone in UK education is enthusiastic about AI in assessment. The debate is real, and both sides have legitimate points. Understanding the full spectrum of opinion helps institutions make better decisions.

The case for AI-assisted marking is strong on efficiency grounds. A 50 to 60% reduction in marking time is not trivial. Faster feedback improves learner outcomes. Greater consistency reduces appeals and disputes. For large training centres, these benefits are operational necessities, not luxuries.

The concerns are equally substantive:

- Trust: Learners and employers may question the legitimacy of AI-marked qualifications

- Student integrity: AI tools that generate feedback may inadvertently reward surface-level compliance over genuine understanding

- Over-reliance: Assessors who defer too readily to AI outputs may lose the critical edge that makes human marking valuable

- Transparency: Learners have a right to understand how their work was assessed

"The institutions getting this right are not choosing between AI and human judgement. They are designing workflows where each does what it does best."

The most effective approach treats AI as a first-pass tool that surfaces information, and human assessors as the decision-makers who act on it. Platforms that support improving feedback with AI and maintain a clear human oversight role in every workflow are best positioned to satisfy both efficiency and integrity requirements.

Practical steps to implement AI assessment responsibly

Moving from interest to implementation requires a structured approach. Rushing into AI marking without proper governance creates compliance risk and erodes assessor confidence. The following steps reflect what leading UK institutions and training centres are doing in 2026.

- Define scope: Identify which assessment types are suitable for AI assistance, starting with structured, criteria-referenced tasks

- Select compliant tools: Choose platforms that are GDPR-compliant and aligned with Ofqual guidance, such as those used in the QMUL pilot

- Run a controlled pilot: Test the tool on a sample cohort and compare outputs against human-marked benchmarks

- Document everything: Record your rationale, tool selection criteria, and pilot outcomes for internal quality assurance and external review boards

- Train your assessors: Ensure staff understand how to interpret, challenge, and override AI outputs

- Monitor continuously: Set up regular audits comparing AI and human marks, and track feedback quality over time

- Stay current: Subscribe to updates from Ofqual and the ICO to catch any regulatory changes that affect your workflow

Pro Tip: Update assessor training at least once per academic year. Regulatory guidance on AI in assessment is evolving quickly, and staff who were trained 18 months ago may be working with outdated assumptions. Use the assessment implementation checklist and AI assessment ethics resources to keep your team current.

Documentation is often underestimated. If your institution is ever subject to an Ofqual review or a GDPR audit, a clear paper trail showing how AI was used, reviewed, and governed will be your strongest defence.
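
As a rough idea of what one entry in that paper trail might contain, here is a minimal sketch of an audit record. The field names and identifiers are hypothetical, not drawn from any regulator's template or vendor's schema; the aim is simply to capture what the AI suggested, what the human decided, and why.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class MarkingAuditRecord:
    """One reviewed submission. Uses pseudonymous IDs rather than names."""
    submission_id: str
    tool_name: str
    ai_suggested_mark: int
    final_mark: int
    assessor_id: str
    reviewed_at: str          # ISO 8601 timestamp, UTC
    override_reason: str = ""

    @property
    def ai_mark_overridden(self) -> bool:
        return self.final_mark != self.ai_suggested_mark


def to_audit_line(record: MarkingAuditRecord) -> str:
    """Serialise a record as one JSON line for an append-only log."""
    payload = asdict(record)
    payload["ai_mark_overridden"] = record.ai_mark_overridden
    return json.dumps(payload, sort_keys=True)


record = MarkingAuditRecord(
    submission_id="SUB-001",
    tool_name="example-tool",
    ai_suggested_mark=14,
    final_mark=16,
    assessor_id="ASR-07",
    reviewed_at=datetime.now(timezone.utc).isoformat(),
    override_reason="Credit for an applied example the AI missed",
)
audit_line = to_audit_line(record)
```

Recording the override reason alongside both marks is what lets a reviewer later see that human judgement was genuinely exercised rather than rubber-stamped.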

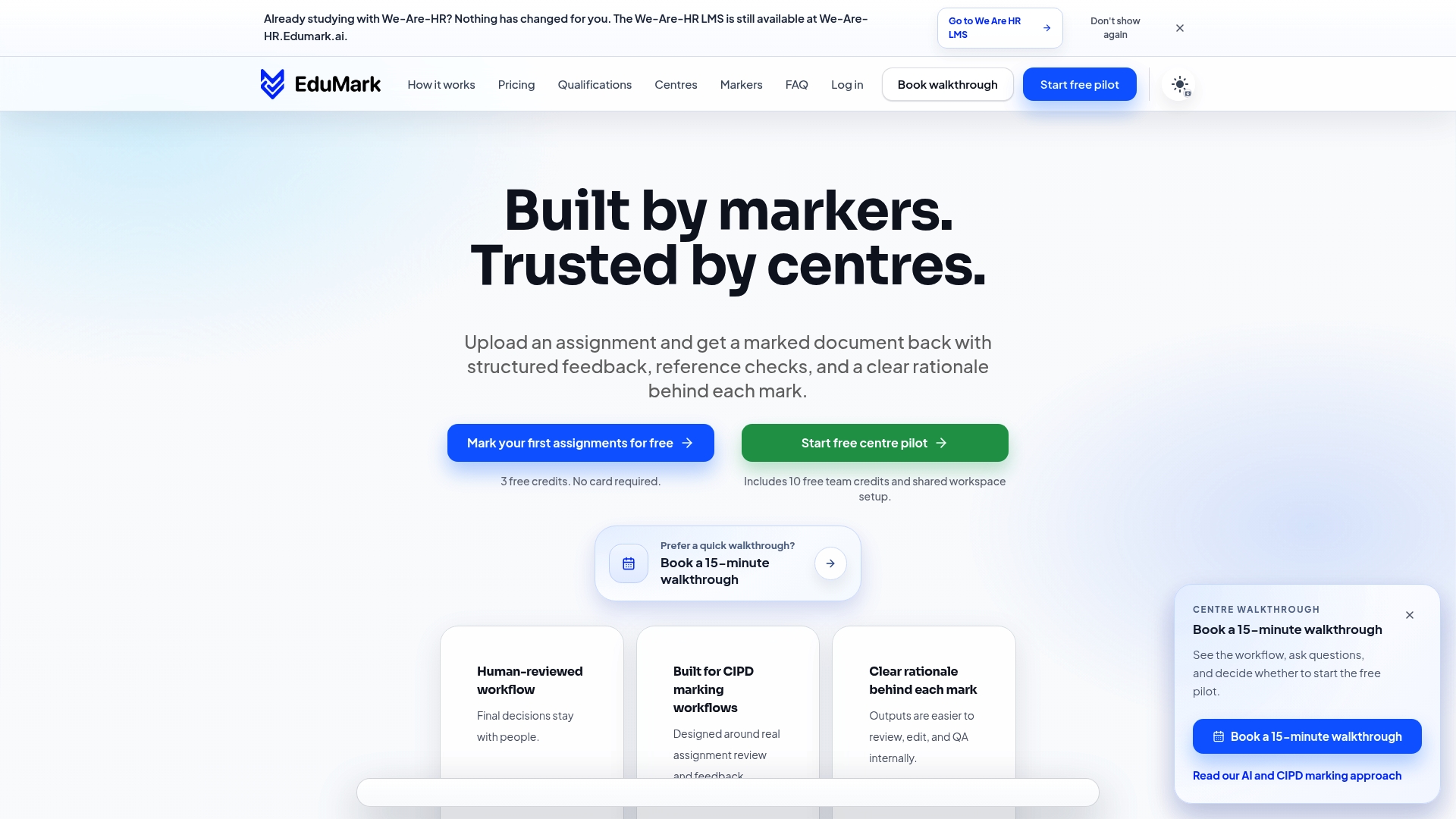

Explore AI tools for compliant, efficient assessment

If your training centre or institution is ready to move beyond theory and into practice, the tools you choose will define your results. EduMark.ai is built specifically for CIPD qualifications and UK assessment compliance, offering AI-assisted marking with full human review, inline feedback, confidence checks, and GDPR-compliant data handling built in from the ground up.

The platform's workflow mirrors the responsible implementation steps outlined in this guide, with structured rationale behind every mark and transparent audit trails for quality assurance teams. Whether you are piloting AI marking for the first time or scaling an existing workflow, EduMark's AI-assisted marking solutions are worth exploring. You can also review real-world outcomes through EduMark case studies to see how similar organisations have improved accuracy, speed, and compliance in their assessment processes.

Frequently asked questions

Can AI fully automate student marking in UK institutions?

No. UK rules require that human assessors retain final judgement and oversight, so AI is used to assist, not replace, the marking process. Fully automated grading without human review is not compliant with Ofqual standards.

What types of assessments does AI mark most accurately?

AI is highly accurate for objective tasks such as multiple-choice and short-answer questions, where benchmarks show near-perfect accuracy and greater consistency than human markers. It is far less reliable for creative, reflective, or subjective work.

How do institutions ensure AI marking is fair and secure?

They use GDPR-compliant platforms, require human review at every stage, and avoid deploying AI alone for high-stakes or creative assessments. GDPR-compliant tools with documented audit trails are the standard expectation in 2026.

Are there any risks to using AI in assessment?

Yes. Risks include bias from training data, lack of transparency in how marks are generated, and potential demographic fairness issues. Ongoing oversight and regular auditing are essential to manage these risks responsibly.