TL;DR:

- AI-assisted marking surpasses human reliability benchmarks in some UK assessments, especially structured tasks.

- Compliance requires transparent logs, bias audits, rubric embedding, and human oversight for AI tools.

- Hybrid workflows, with AI for the initial assessment and humans for review, maximise accuracy and fairness.

AI-assisted marking is already outperforming human reliability benchmarks in some UK contexts, yet most assessment centres are still treating it as experimental. That gap between evidence and adoption is costly. AI-enhanced Comparative Judgement yields reliability correlations of 0.91 to 0.96 for KS3 English Literature essays, surpassing established human benchmarks. For UK assessment centres and training providers navigating compliance pressures, staff capacity constraints, and learner expectations, this is not a marginal gain. This guide cuts through the noise: what AI feedback actually is, where the evidence stands, what UK regulators require, and how to implement it without creating new risks.

Table of Contents

- What is AI feedback in educational assessment?

- AI reliability and limitations: Evidence and benchmarks

- Managing compliance and fairness: UK guidelines for AI in assessment

- Practical implementation: Steps for successful AI feedback integration

- A fresh perspective: What most articles miss about AI feedback in education

- Connect: Streamline your assessment with trusted AI tools

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI reliability proven | AI-assisted marking surpasses human benchmarks for objective tasks and speeds up assessment. |
| Compliance is critical | UK centres must follow Ofqual guidelines by embedding rubrics, transparency logs, and bias audits in any AI tool. |
| Hybrid models work best | For high-stakes assessments, combine AI efficiency with human oversight to minimise risks and improve outcomes. |
| Practical integration matters | Successful AI feedback starts with low-stakes pilots, staff training, and GDPR compliance. |

What is AI feedback in educational assessment?

AI feedback in education refers to the automated analysis of student work using software that applies machine learning, natural language processing, or large language models to evaluate responses and generate commentary. It is not a single tool or method. It spans a spectrum from simple multiple-choice scoring engines to sophisticated systems that can read, interpret, and comment on extended written work.

In UK assessment centres, AI feedback is already being applied in several practical ways:

- Objective grading: Scoring structured responses against fixed mark schemes with high speed and consistency

- Discrepancy flagging: Identifying where two human markers have diverged significantly, prompting a third review

- Formative feedback support: Generating draft commentary that human assessors can review, edit, and approve before sharing with learners

- Moderation assistance: Comparing submissions against a calibrated sample to support standardisation
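
Discrepancy flagging from the list above can be sketched in a few lines of Python. This is a minimal illustration, not a description of any particular product: the `DoubleMarkedScript` class, the `flag_discrepancies` function, the sample marks, and the tolerance of 3 marks are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class DoubleMarkedScript:
    script_id: str
    marker_a: int  # mark awarded by the first human marker
    marker_b: int  # mark awarded by the second human marker


def flag_discrepancies(scripts, tolerance=3):
    """Return IDs of scripts whose two marks differ by more than `tolerance`."""
    return [s.script_id for s in scripts if abs(s.marker_a - s.marker_b) > tolerance]


# Made-up marks for illustration only.
scripts = [
    DoubleMarkedScript("S001", 14, 15),
    DoubleMarkedScript("S002", 9, 16),
    DoubleMarkedScript("S003", 20, 17),
]
print(flag_discrepancies(scripts))  # ['S002']
```

Only the flagged scripts would go to a third reviewer. The tolerance is a policy decision for the centre and would normally be set relative to the total marks available.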

The technology behind these tools varies. Some platforms use rule-based or statistical systems calibrated on historical marking data. Others use large language models capable of interpreting open-ended responses. The most advanced use retrieval-augmented generation (RAG), which grounds the model's output in specific rubric content rather than general training data, reducing the risk of irrelevant or inaccurate feedback.
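
To make the RAG idea concrete, here is a toy sketch of the retrieval step only. It ranks rubric criteria by simple word overlap with the learner response and builds a prompt from the best matches. Production systems would typically use embedding similarity instead, and no model is called here. The `RUBRIC` contents, the `STOPWORDS` set, and all function names are hypothetical.

```python
import re

# Hypothetical rubric; a real one would come from the validated mark scheme.
RUBRIC = {
    "AO1": "Shows clear understanding of the text and supports points with relevant quotations",
    "AO2": "Analyses the writer's use of language, form and structure",
    "AO3": "Links the text to its historical and social context",
}

# Very small stopword list, for illustration only.
STOPWORDS = {"the", "and", "of", "to", "a", "its", "with", "s"}


def tokenize(text):
    """Lowercase the text and return its set of non-stopword words."""
    return set(re.findall(r"[a-z]+", text.lower())) - STOPWORDS


def retrieve_criteria(response, rubric, top_k=2):
    """Rank rubric criteria by word overlap with the learner response."""
    response_words = tokenize(response)
    scored = [
        (len(response_words & tokenize(descriptor)), code)
        for code, descriptor in rubric.items()
    ]
    # Highest overlap first; ties broken alphabetically by criterion code.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [code for overlap, code in scored[:top_k] if overlap > 0]


def build_prompt(response, rubric, top_k=2):
    """Build a prompt that contains only the retrieved rubric criteria."""
    codes = retrieve_criteria(response, rubric, top_k)
    criteria = "\n".join(f"{code}: {rubric[code]}" for code in codes)
    return f"Mark ONLY against these criteria:\n{criteria}\n\nLearner response:\n{response}"


response = "The writer uses harsh language and a broken structure to show fear."
print(retrieve_criteria(response, RUBRIC))  # ['AO2']
```

The point of the design is that the prompt carries the centre's own rubric text, so any feedback the model produces can be checked against named criteria.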

What makes AI feedback genuinely useful for assessment centres is consistency. Human markers, however skilled, are subject to fatigue, mood, and unconscious bias. AI applies the same criteria every time. In the KS3 English Literature example cited above, AI-enhanced Comparative Judgement produced reliability correlations of 0.91 to 0.96, a level of consistency that most human marking teams struggle to match at scale.

Understanding AI integration in assessment is the first step toward making informed decisions about which tools are appropriate for your context. Not every AI feedback tool is suited to every assessment type, and the distinction matters enormously for compliance and learner outcomes.

Key point: AI feedback is not a replacement for assessor expertise. It is a layer of analytical support that, when implemented correctly, makes the whole marking process more defensible and efficient.

AI reliability and limitations: Evidence and benchmarks

The evidence base for AI marking reliability is growing, but it is not uniform. Performance varies significantly depending on the assessment type, the quality of the rubric, and the sophistication of the tool being used.

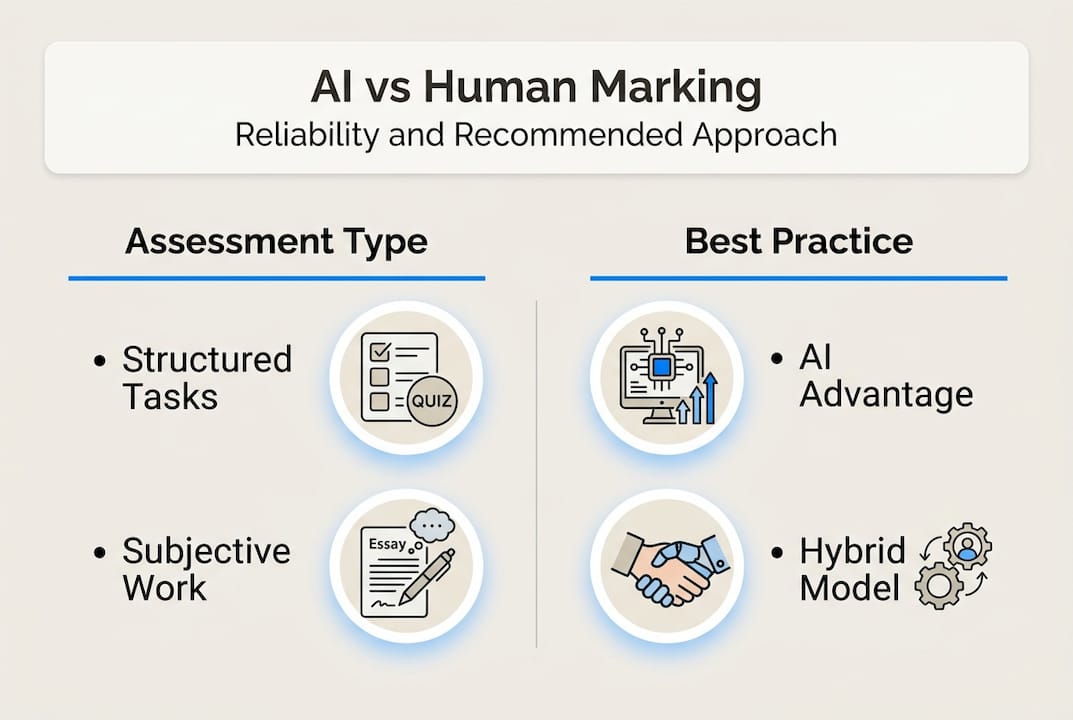

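For structured, objective tasks, AI performs exceptionally well. The reliability correlations of 0.91 to 0.96 reported for KS3 English essays are set against an Ofqual human benchmark of 52% true grade agreement, which is a striking result. The two figures are measured on different scales (a correlation and a percentage agreement), so the comparison is indicative rather than like-for-like. Even so, it suggests AI is not just fast; it can be more consistent than the human marking it is designed to support.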

However, the picture changes for subjective, creative, or culturally nuanced work. LLMs struggle with nuance, creativity, irony, and cultural context, meaning that a learner who takes a genuinely original approach may be penalised by an AI that cannot recognise the merit in an unconventional answer.

| Assessment type | AI reliability | Human reliability | Recommended approach |
|---|---|---|---|
| Structured written tasks | Very high (correlation of 0.91 to 0.96) | Moderate (approx. 52% true grade agreement) | AI-led with human review |
| Multiple-choice and short answer | High | High | AI-led |
| Creative and discursive essays | Moderate | Variable | Human-led with AI support |
| Portfolio and reflective work | Low to moderate | Variable | Human-led |
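
Because the figures in this section mix two kinds of metric, it helps to see how each is calculated. The sketch below computes a Pearson correlation and an exact grade agreement rate for the same pair of grade lists. The grades are made up and deliberately extreme: the second marker is always exactly one grade higher, so the correlation is perfect while exact agreement is zero. The function names are illustrative assumptions.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def exact_agreement(grades_a, grades_b):
    """Share of scripts on which both lists award exactly the same grade."""
    matches = sum(a == b for a, b in zip(grades_a, grades_b))
    return matches / len(grades_a)


# Made-up grades: the second marker is consistently one grade more generous.
definitive = [3, 4, 5, 6, 7, 8]
second = [4, 5, 6, 7, 8, 9]

print(pearson(definitive, second))          # 1.0
print(exact_agreement(definitive, second))  # 0.0
```

A high correlation shows that scripts are ranked consistently. It does not show that the same grades are awarded, which is why reliability data should be requested in the metric that matters for your decision.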

Another important limitation is non-determinism. Large language models do not always produce the same output for the same input. Run the same essay through twice and you may get slightly different feedback. This is not a flaw that can be engineered away entirely; it is a property of how these models work. For high-stakes assessments, this unpredictability is a serious concern.
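
One way to manage non-determinism is to mark the same submission several times and measure the spread. The sketch below assumes a hypothetical `mark_fn` that wraps whatever model call your tool makes; here it is replaced by a stand-in that cycles through fixed values so the example is reproducible. The threshold of one mark is an arbitrary assumption.

```python
from itertools import cycle
from statistics import mean


def mark_spread(mark_fn, essay, runs=5):
    """Mark the same essay `runs` times and summarise the variation."""
    marks = [mark_fn(essay) for _ in range(runs)]
    return {"marks": marks, "mean": mean(marks), "spread": max(marks) - min(marks)}


def needs_human_review(summary, max_spread=1):
    """Flag the submission if repeated runs disagree by more than `max_spread`."""
    return summary["spread"] > max_spread


# Stand-in for a non-deterministic model call (hypothetical, fixed values).
_fake_outputs = cycle([14, 15, 13, 14, 16])


def fake_model_mark(essay):
    return next(_fake_outputs)


summary = mark_spread(fake_model_mark, "essay text", runs=5)
print(summary["marks"], summary["spread"])  # [14, 15, 13, 14, 16] 3
print(needs_human_review(summary))          # True
```

Repeated runs cost more compute, so a centre might reserve this check for borderline or high-stakes submissions.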

Pro Tip: Always review AI grading accuracy data specific to your assessment type before committing to a tool. Generic reliability figures can be misleading if they come from a different educational context.

The most reliable approach in 2026 remains a hybrid model: AI handles the initial pass, flags borderline cases, and generates draft feedback, while a trained human assessor reviews and approves the final output. Reviewing AI grading software comparisons can help you identify which platforms are best suited to this layered workflow.
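
The routing logic in a hybrid model can be very simple. In the sketch below, every submission still goes to a human, but marks close to a grade boundary or with low model confidence are prioritised. The grade boundaries, the margin, the confidence score, and the queue names are all hypothetical; not every tool exposes a confidence value.

```python
# Hypothetical grade boundaries for illustration only.
GRADE_BOUNDARIES = [10, 15, 20]


def is_borderline(mark, boundaries, margin=1):
    """True if the mark falls within `margin` marks of any grade boundary."""
    return any(abs(mark - boundary) <= margin for boundary in boundaries)


def route(mark, confidence, boundaries, margin=1, min_confidence=0.8):
    """Choose a human review queue for an AI-marked submission."""
    if is_borderline(mark, boundaries, margin) or confidence < min_confidence:
        return "priority_review"
    return "standard_review"


print(route(15, 0.95, GRADE_BOUNDARIES))  # priority_review (on a boundary)
print(route(12, 0.95, GRADE_BOUNDARIES))  # standard_review
print(route(12, 0.50, GRADE_BOUNDARIES))  # priority_review (low confidence)
```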

Managing compliance and fairness: UK guidelines for AI in assessment

Compliance is not optional, and the regulatory expectations for AI in UK assessment are becoming more specific. Ofqual stresses empirical validation, fairness checks, transparency, and bias audits before any AI tool is deployed for marking. These are not aspirational guidelines; they are the baseline.

For assessment centres, this means several concrete obligations:

- Rubric embedding: The AI must be operating against a clearly defined, validated mark scheme, not making interpretive judgements in the absence of criteria

- Transparency logs: Every AI-generated mark or piece of feedback must be traceable, with a record of how it was produced

- Bias audits: Tools must be tested across different learner demographics to ensure no group is systematically disadvantaged

- Human oversight: For high-stakes qualifications, a trained assessor must review AI outputs before they are used to determine learner outcomes

- GDPR compliance: Learner data processed by AI tools must be handled in line with UK data protection law, including clear data retention and processing agreements
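
As an illustration of what a transparency log entry might contain, the sketch below records the rubric version, model version, AI mark, rationale, and the human reviewer's decision for one submission. The field names and the `MarkingLogEntry` class are assumptions, not a regulatory template. The entry stores an internal submission ID and a hash of the response rather than the learner's name or full text, which is one possible way to limit the personal data held in the log.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class MarkingLogEntry:
    submission_id: str
    response_sha256: str  # fingerprint of the exact text that was marked
    rubric_version: str
    model_version: str
    ai_mark: int
    ai_rationale: str
    created_at: str
    reviewer_id: Optional[str] = None
    final_mark: Optional[int] = None
    overridden: Optional[bool] = None


def make_entry(submission_id, response_text, rubric_version, model_version,
               ai_mark, ai_rationale):
    """Create a log entry at the moment the AI mark is produced."""
    return MarkingLogEntry(
        submission_id=submission_id,
        response_sha256=hashlib.sha256(response_text.encode("utf-8")).hexdigest(),
        rubric_version=rubric_version,
        model_version=model_version,
        ai_mark=ai_mark,
        ai_rationale=ai_rationale,
        created_at=datetime.now(timezone.utc).isoformat(),
    )


def record_review(entry, reviewer_id, final_mark):
    """Attach the human assessor's decision and note whether it was an override."""
    entry.reviewer_id = reviewer_id
    entry.final_mark = final_mark
    entry.overridden = final_mark != entry.ai_mark
    return entry


def to_json_line(entry):
    """Serialise the entry as one JSON line for an append-only log file."""
    return json.dumps(asdict(entry), sort_keys=True)


entry = make_entry("SUB-0042", "learner response text", "rubric-v3",
                   "model-2026-01", 14, "Meets AO2; limited use of quotations.")
record_review(entry, "assessor-07", 12)
print(entry.overridden)  # True
```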

The Ofqual principles for AI marking also emphasise that assessment centres bear responsibility for validating tools in their own context. A tool that performs well in one setting may not transfer reliably to another without local testing.

Pro Tip: Before signing any contract with an AI marking provider, request their bias audit documentation and ask specifically how their tool handles edge cases in your assessment type. If they cannot answer clearly, that is a red flag.

For centres delivering CIPD qualifications, understanding AI ethics for UK assessors and staying current with CIPD regulatory standards is essential. Compliance with CIPD feedback requirements adds another layer of specificity that generic AI tools may not address without configuration.

Practical implementation: Steps for successful AI feedback integration

Knowing the compliance requirements is one thing. Putting them into practice across a live assessment operation is another. Hybrid models combining AI and human oversight are recommended, with implementation starting in low-stakes contexts and scaling gradually. Here is a structured approach:

- Pilot in low-stakes assessments first. Choose formative tasks or practice submissions where the consequences of error are manageable. Use this phase to calibrate the tool against your existing mark scheme.

- Train your staff properly. Assessors need to understand what the AI is doing, where it is likely to be wrong, and how to override it confidently. This is not a one-hour briefing; it requires structured professional development.

- Embed your rubric explicitly. Do not assume the tool will interpret your criteria correctly. Map each criterion into the system and test outputs against known exemplars before going live.

- Monitor for bias continuously. Run regular checks across demographic groups. If certain learner profiles are consistently scoring lower with AI than with human markers, investigate before scaling.

- Maintain human review as standard. Especially for CIPD and other professional qualifications, human review in AI marking should be a non-negotiable step in your workflow, not an optional extra.

- Document everything. Transparency logs, override decisions, bias audit results, and staff training records all form part of your compliance evidence trail.
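
The bias monitoring step above can start with a very simple comparison: for each demographic group, how far do AI marks sit from human marks on the same scripts? The sketch below is a first-pass screen under stated assumptions, not a full audit. The group labels, marks, and threshold are made up, and a real audit would also need adequate sample sizes and a statistical test before drawing conclusions.

```python
from collections import defaultdict
from statistics import mean


def mean_gap_by_group(records):
    """records: iterable of (group, ai_mark, human_mark).

    Returns {group: mean(ai_mark - human_mark)}.
    """
    gaps = defaultdict(list)
    for group, ai_mark, human_mark in records:
        gaps[group].append(ai_mark - human_mark)
    return {group: mean(values) for group, values in gaps.items()}


def groups_to_investigate(records, threshold=1.0):
    """Groups where AI marks average at least `threshold` below human marks."""
    gaps = mean_gap_by_group(records)
    return sorted(group for group, gap in gaps.items() if gap <= -threshold)


# Made-up double-marked sample for illustration only.
records = [
    ("group_a", 15, 15), ("group_a", 12, 13), ("group_a", 18, 17),
    ("group_b", 10, 12), ("group_b", 14, 15), ("group_b", 11, 14),
]
print(mean_gap_by_group(records))      # {'group_a': 0, 'group_b': -2}
print(groups_to_investigate(records))  # ['group_b']
```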

| Implementation stage | Key action | Common pitfall |
|---|---|---|
| Pilot | Low-stakes testing with exemplars | Skipping calibration against existing marks |
| Rollout | Staff training and rubric embedding | Assuming tool interprets criteria correctly |
| Scaling | Ongoing bias monitoring | Treating initial audit as sufficient |
| Review | Annual tool revalidation | Assuming performance is static over time |
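
Calibration against existing marks, the pitfall named for the pilot stage, can be checked with two simple numbers: the average size of the difference between AI marks and agreed exemplar marks, and the share of scripts where the AI lands within an acceptable tolerance. The sketch below uses made-up marks, and the `calibration_report` function and tolerance of one mark are assumptions.

```python
def calibration_report(ai_marks, exemplar_marks, tolerance=1):
    """Compare AI marks with agreed exemplar marks for the same scripts."""
    if not ai_marks or len(ai_marks) != len(exemplar_marks):
        raise ValueError("Need two non-empty lists of equal length")
    diffs = [abs(ai - agreed) for ai, agreed in zip(ai_marks, exemplar_marks)]
    return {
        "mean_abs_error": sum(diffs) / len(diffs),
        "within_tolerance": sum(d <= tolerance for d in diffs) / len(diffs),
    }


# Made-up marks for four exemplar scripts.
report = calibration_report([14, 17, 9, 20], [15, 17, 12, 19])
print(report)  # {'mean_abs_error': 1.25, 'within_tolerance': 0.75}
```

Rerunning the same report at each annual revalidation gives a like-for-like record of whether performance has drifted.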

Tools that use RAG technology are worth prioritising because they ground AI outputs in your specific rubric content, significantly reducing the risk of hallucinations or off-topic feedback. Reviewing AI assessment workflow tips and using an assessment checklist for CIPD compliance can help you structure each stage systematically.

Pro Tip: Build your compliance evidence trail from day one, not retrospectively. Regulators and awarding bodies will want to see a documented history of validation, not a snapshot taken after the fact.

A fresh perspective: What most articles miss about AI feedback in education

Most commentary on AI marking falls into one of two camps: breathless enthusiasm or outright scepticism. Neither serves UK assessment centres well. The reality is more interesting and more demanding.

The genuine lesson from early adopters is that AI feedback is only as good as the human infrastructure around it. Centres that have seen the strongest results are not those with the most sophisticated AI tools; they are the ones with the clearest rubrics, the most consistent staff training, and the most disciplined review processes. The AI amplifies what is already there.

This matters because it reframes the investment decision. Buying an AI marking tool without first auditing your own marking consistency is like fitting a high-performance engine to a car with faulty steering. The human reviewer insights that come from a well-designed hybrid system are often more valuable than the AI output itself, because they surface inconsistencies in your existing practice that were previously invisible.

AI feedback is most effective as part of a layered system. Treat it as such, and it becomes a genuine asset.

Connect: Streamline your assessment with trusted AI tools

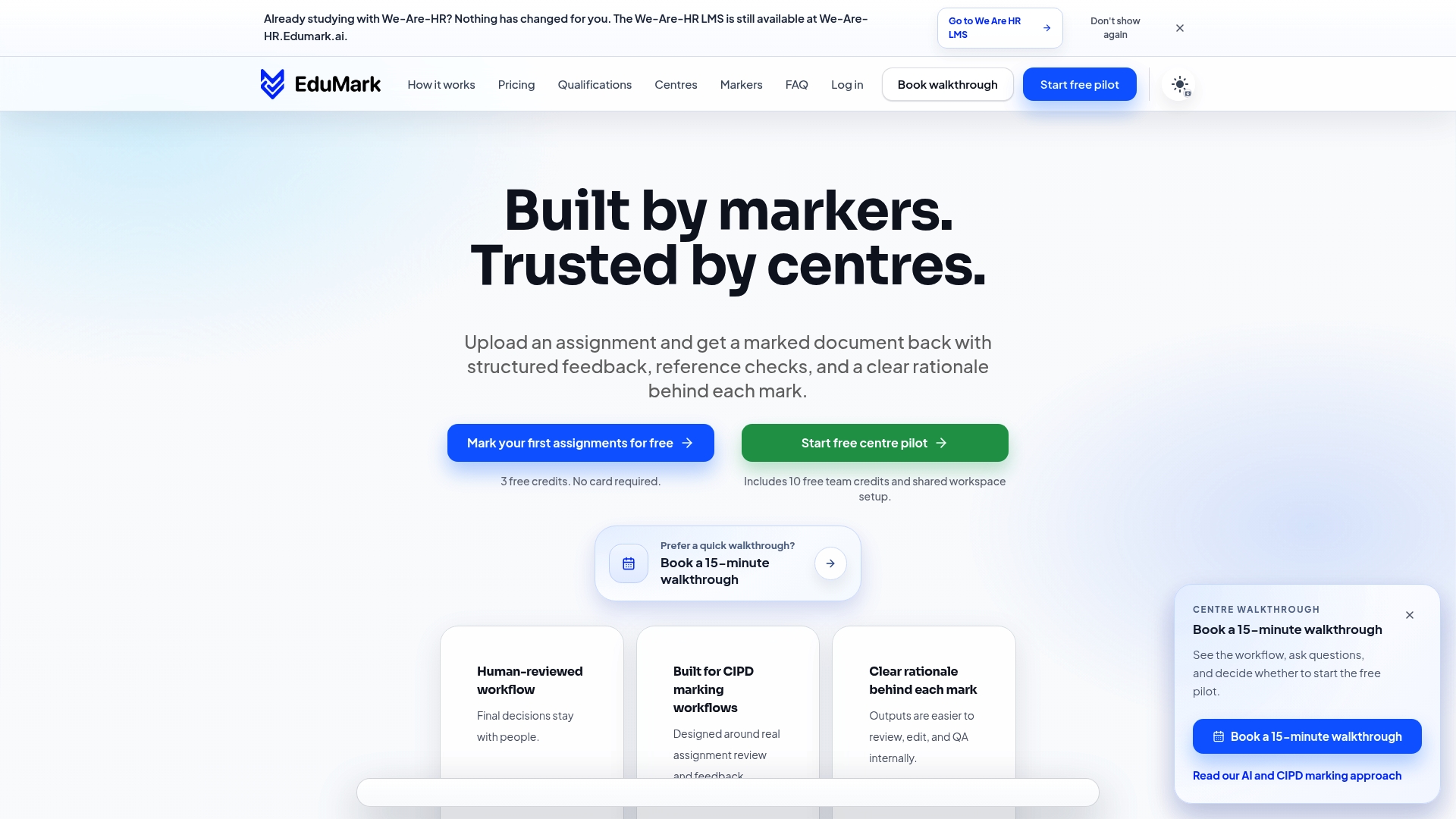

If you are ready to move from understanding AI feedback to implementing it with confidence, EduMark.ai is built specifically for UK assessment centres and CIPD training providers.

EduMark's AI marking solutions are designed around the compliance requirements you have just read about: rubric embedding, transparency logs, confidence checks, and human review workflows built into every submission. The platform handles GDPR-compliant data processing, generates inline comments directly in Word documents, and gives your assessors clear rationale behind every mark. Whether you are piloting AI feedback for the first time or scaling an existing workflow, EduMark provides the structure, auditability, and support to do it properly.

Frequently asked questions

Does AI marking meet UK regulatory standards?

AI marking can support compliance when tools embed rubrics, maintain transparency logs, and include bias audits. Ofqual requires human oversight for high-stakes assessments and does not currently permit AI as the sole decision-maker.

Can AI feedback improve marking reliability?

Yes, significantly for structured tasks. AI-enhanced Comparative Judgement has achieved reliability correlations of 0.91 to 0.96 in KS3 English Literature essay marking, outperforming established human benchmarks and reducing inter-marker variability at scale.

What are the main risks with AI feedback in education?

LLMs can struggle with nuance, creativity, and cultural context, and non-deterministic outputs mean the same submission may receive slightly different feedback on different runs, making human review essential.

How should assessment centres implement AI-assisted feedback safely?

Start with low-stakes pilots, train staff, and ensure GDPR compliance from the outset, maintaining human judgement as the final step in any high-stakes marking decision.