Many assume artificial intelligence can fully automate grading, eliminating the need for human markers altogether. This belief overlooks a fundamental truth about assessment integrity, especially in professional qualifications like those offered by CIPD. While AI brings remarkable efficiency and consistency to marking workflows, human review remains the cornerstone of fair, accountable, and contextually nuanced grading. This guide explores why human oversight is non-negotiable in AI-assisted grading systems, how hybrid models balance automation with expert judgement, and what best practices ensure CIPD assessments maintain the highest standards of quality and compliance.

Table of Contents

- Key takeaways

- Why human review is essential in AI-assisted grading

- Hybrid grading models integrating AI and human expertise

- Performance and accuracy benefits of human oversight in AI grading

- Best practices for integrating human review in CIPD AI-assisted assessments

- Explore AI-assisted CIPD marking solutions with EduMark

- Frequently asked questions about human review in AI-assisted grading

Key takeaways

| Point | Details |
|---|---|
| Human accountability | Humans provide accountability for contested grades and can justify decisions against assessment criteria, something AI cannot replicate. |
| Bias detection and correction | Human reviewers identify and correct algorithmic bias patterns that AI may replicate or introduce, safeguarding fairness. |
| Nuanced judgement | Assessments often require implicit reasoning and context that only humans can consistently recognise and apply. |
| Regulatory compliance | High-stakes CIPD and other qualifications require human oversight to meet Ofqual rules and maintain accreditation. |
| Transparent explanations | Explanations of marking decisions should be provided by human markers rather than relying on opaque AI reasoning. |

Why human review is essential in AI-assisted grading

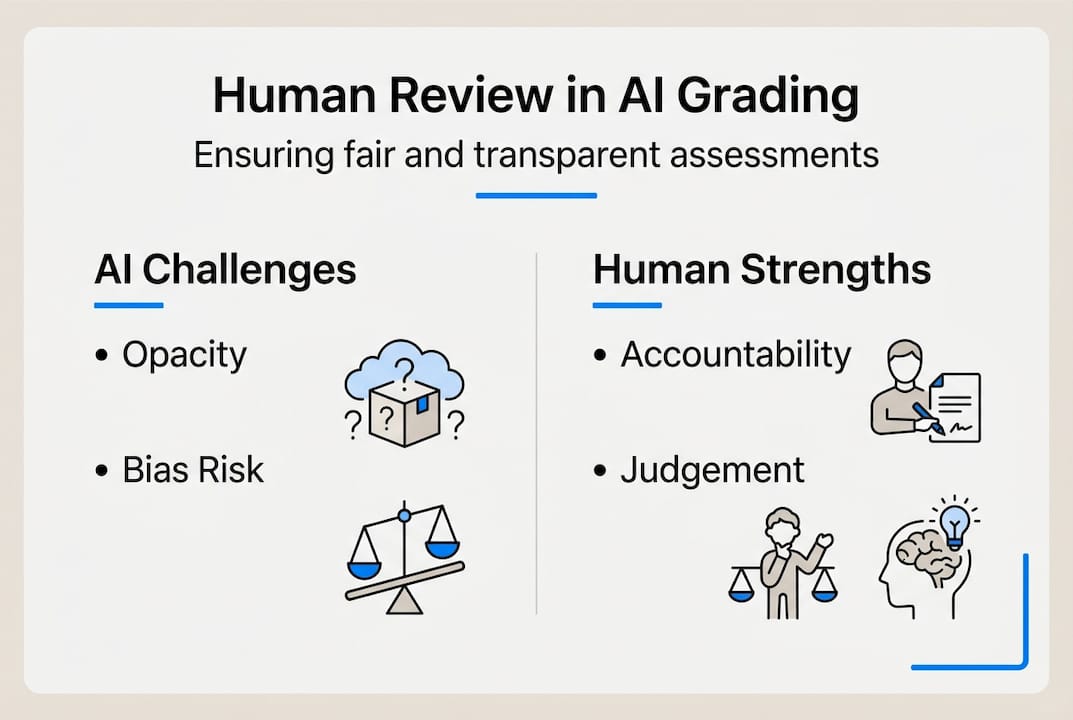

Accountability sits at the heart of educational assessment. When an AI system assigns a grade, who takes responsibility if that mark is contested or proves incorrect? Humans provide the accountability layer that AI inherently lacks. A marker can explain their reasoning, justify decisions against assessment criteria, and be held professionally responsible for outcomes. Algorithms, no matter how sophisticated, cannot shoulder this burden.

Bias represents another critical concern. AI systems learn from historical data, which means they can perpetuate existing biases or introduce new ones. Without human oversight, AI can introduce bias through patterns in training data or flawed assumptions in model design. Length bias serves as a common example, where AI systems favour longer responses regardless of quality. Human reviewers spot these patterns and correct them, ensuring fair treatment across all learners.

Nuanced judgement remains a distinctly human strength. CIPD assessments often require evaluating complex scenarios, recognising implicit reasoning, or assessing professional judgement that extends beyond surface-level correctness. Consider a learner who applies HR theory to a workplace conflict with creative insight but uses unconventional phrasing. An AI might flag this as incorrect due to vocabulary mismatch, whilst a human marker recognises the sophisticated understanding beneath the expression.

Regulatory frameworks underscore this necessity. Ofqual regulations mandate human oversight in high-stakes grading, particularly for qualifications that determine professional certification or career progression. CIPD qualifications fall squarely into this category. The requirement isn't merely bureaucratic; it reflects a fundamental principle that consequential decisions about learners' futures demand human accountability.

Transparency poses another challenge with AI-only grading. These systems often operate as black boxes, producing scores without clear reasoning paths. When a learner asks why they received a particular mark, "the algorithm decided" fails as an explanation. Human markers can articulate their thought process, reference specific assessment criteria, and provide meaningful feedback that helps learners improve. This transparency builds trust in the grading process and supports learning outcomes.

Pro Tip: When implementing AI-assisted CIPD assignment marking, always position AI as a supporting tool rather than the decision maker. This framing helps learners understand that expert human judgement remains central to their assessment.

Key reasons human oversight remains non-negotiable:

- Professional accountability for contested grades and appeals

- Detection and correction of algorithmic bias patterns

- Evaluation of implicit reasoning and contextual understanding

- Compliance with regulatory requirements for high-stakes assessments

- Transparent explanation of marking decisions to learners

- Recognition of creative or unconventional demonstrations of competence

"AI enhances efficiency and consistency but lacks semantic understanding, transparency, and fairness without human oversight; not ready for sole high-stakes use." — Ofqual Blog on AI in Marking

Hybrid grading models integrating AI and human expertise

Hybrid models represent the practical middle ground between pure manual marking and full automation. These approaches leverage AI's strengths whilst preserving human oversight where it matters most. One common design has AI carry out primary marking whilst humans second-mark a subset of submissions, creating a workflow that balances efficiency with quality assurance.

A typical workflow begins with AI conducting initial assessment of all submissions. The system applies marking rubrics consistently, identifies clear-cut cases, and flags uncertain responses. Human markers then review a strategically selected subset, focusing their expertise where it adds most value. This approach reduces manual workload dramatically whilst ensuring every assessment receives appropriate scrutiny.

Confidence-based routing enhances this model further. Modern AI systems can estimate their own certainty about each mark. High-confidence assessments, where the AI clearly identifies strong evidence against criteria, proceed with minimal human intervention. Low-confidence cases, involving ambiguity, borderline performance, or unusual responses, automatically route to human experts. This edge case handling ensures nuanced judgement applies precisely where needed.
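
The routing rule described above can be sketched in a few lines of Python. This is a minimal illustration, not any particular platform's implementation: the `AiMark` record, the `route` function, the queue names, and the 0.85 threshold are all hypothetical and would need calibrating against real marking data.

```python
from dataclasses import dataclass


@dataclass
class AiMark:
    """One AI-suggested mark (hypothetical record layout)."""
    submission_id: str
    score: float            # AI-suggested mark, 0-100
    confidence: float       # AI's self-reported certainty, 0.0-1.0
    flagged: bool = False   # e.g. borderline or unusual response


def route(mark: AiMark, threshold: float = 0.85) -> str:
    """Return the human queue a submission should be sent to.

    Both queues involve a human marker; only the depth of review differs.
    """
    if mark.flagged or mark.confidence < threshold:
        return "expert_review"
    return "verification"


marks = [
    AiMark("A1", 72.0, 0.95),
    AiMark("A2", 49.5, 0.91, flagged=True),
    AiMark("A3", 63.0, 0.60),
]
queues = {m.submission_id: route(m) for m in marks}
print(queues)
```

Here a flagged submission goes to expert review even when the AI reports high confidence, which reflects the point that borderline or unusual responses need nuanced judgement regardless of the model's certainty.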

Statistical oversight provides another layer of quality control. Senior markers or assessment leads monitor aggregate patterns, checking for unusual distributions, unexpected grade inflation or deflation, or systematic discrepancies between AI and human marks. These checks maintain grading consistency across cohorts and identify issues before they affect learner outcomes.
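
One simple form of this check is to compare AI and human marks on scripts that were marked by both. The sketch below assumes marks on a 0-100 scale and a hypothetical tolerance of 3 marks; the function names and the sample figures are illustrative only.

```python
from statistics import mean


def mark_drift(ai_marks, human_marks):
    """Mean signed difference (AI minus human) over double-marked scripts."""
    if len(ai_marks) != len(human_marks) or not ai_marks:
        raise ValueError("need two equal-length, non-empty lists of marks")
    return mean(a - h for a, h in zip(ai_marks, human_marks))


def needs_investigation(ai_marks, human_marks, tolerance=3.0):
    """True when the AI is systematically above or below human markers."""
    return abs(mark_drift(ai_marks, human_marks)) > tolerance


# Hypothetical double-marked sample from one cohort
ai = [68, 74, 55, 81, 62]
human = [64, 70, 52, 76, 60]
print(mark_drift(ai, human))
print(needs_investigation(ai, human))
```

A positive drift suggests the AI is marking more generously than human markers and a negative one that it is marking more harshly. A real monitoring process would likely also look at the spread of differences and at grade-boundary crossings, not just the mean.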

The hybrid approach delivers substantial efficiency gains without compromising standards. Training centres handling hundreds of CIPD assignments can reduce marking time by 40-90% whilst maintaining or improving accuracy. Markers focus their energy on complex cases requiring expert judgement rather than routine scoring, leading to better job satisfaction and more thoughtful assessment.

Typical hybrid grading workflow:

- Upload assignments to the AI-assisted marking platform

- AI conducts initial assessment against structured rubrics

- System calculates confidence scores for each mark

- High-confidence assessments proceed to the human verification queue

- Low-confidence or flagged cases route to expert human review

- Human markers provide final judgement and detailed feedback

- Statistical monitoring ensures consistency across the cohort

| Model type | AI role | Human role | Best for |
|---|---|---|---|
| AI-first with sampling | Initial marking of all submissions | Second mark random sample plus edge cases | Large cohorts with mostly routine responses |
| Confidence-based routing | Marks straightforward cases independently | Reviews only flagged uncertain or complex responses | Mixed-difficulty assessments with clear rubrics |
| Statistical monitoring | Provides initial scores and flags outliers | Validates patterns and investigates anomalies | Maintaining consistency across multiple markers |
| Feedback generation | Drafts structured comments aligned to criteria | Edits, personalises, and approves all feedback | High-volume marking with detailed feedback requirements |

Pro Tip: Start with a higher human review rate (perhaps 30-40% of submissions) when first implementing AI-assisted CIPD assignment marking, then adjust based on observed AI accuracy and confidence calibration. This builds trust in the system whilst gathering data to optimise the balance.
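
As a rough illustration of the sampling in the tip above, the following sketch draws a random share of submissions for full human second marking. The function name, the 35% default, and the submission IDs are assumptions made for the example; a fixed seed is used only so the selection can be reproduced for audit.

```python
import random


def pick_review_sample(submission_ids, rate=0.35, seed=None):
    """Randomly choose a share of submissions for full human second marking."""
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")
    ids = list(submission_ids)
    rng = random.Random(seed)          # seeded so the draw is reproducible
    k = round(len(ids) * rate)         # number of scripts to sample
    return sorted(rng.sample(ids, k))


ids = [f"S{i:02d}" for i in range(20)]
sample = pick_review_sample(ids, rate=0.35, seed=1)
print(len(sample), sample)
```

Lowering `rate` over time, once agreement between AI and human marks has been observed, corresponds to the gradual adjustment the tip describes.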

Performance and accuracy benefits of human oversight in AI grading

Empirical evidence demonstrates that well-designed hybrid systems match or exceed purely human marking in both consistency and accuracy. Research shows AI matches human consistency in ranking open-text answers, with inter-rater reliability comparable to human marker agreement. This validates AI's capability for routine assessment tasks where criteria are well-defined.

However, the same research reveals critical limitations. AI systems exhibit bias toward length in point scoring, tending to award higher marks to longer responses regardless of quality. This length bias can disadvantage concise, well-reasoned answers whilst rewarding verbose but superficial responses. Human review corrects this systematic error, ensuring marks reflect genuine understanding rather than word count.
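
One way to look for length bias is to check whether the gap between AI and human marks grows with word count. Correlating length with the AI mark alone would be misleading, since longer answers can be genuinely better. The sketch below uses a hand-written Pearson correlation; the function names and the sample data are hypothetical.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined for constant data")
    return cov / sqrt(vx * vy)


def length_bias_signal(word_counts, ai_marks, human_marks):
    """Correlation between word count and the AI-minus-human mark gap."""
    gaps = [a - h for a, h in zip(ai_marks, human_marks)]
    return pearson(word_counts, gaps)


# Hypothetical double-marked scripts
word_counts = [400, 650, 900, 1200, 1500]
ai_marks = [55, 60, 68, 74, 80]
human_marks = [56, 60, 65, 68, 71]
print(round(length_bias_signal(word_counts, ai_marks, human_marks), 2))
```

A value close to +1 on a reasonably sized sample would suggest the AI over-rewards longer responses relative to human markers. A value near zero does not prove the absence of bias, so this is a prompt for human investigation rather than a verdict.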

Time savings represent a tangible benefit that frees markers for higher-value activities. Hybrid systems achieve 40-90% reductions in human marking time compared to fully manual processes. For a training centre assessing 200 CIPD assignments monthly, this translates to hundreds of hours saved, allowing markers to provide richer feedback, develop better learning resources, or support more learners.
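
The arithmetic behind that estimate is easy to reproduce. The sketch below takes the 200 assignments and the 40-90% range from the text; the baseline of 1.5 marker-hours per assignment is purely a hypothetical figure and should be replaced with a centre's own measured average.

```python
def hours_saved(assignments, baseline_hours_each, reduction_pct):
    """Marker hours saved per month for a given percentage reduction."""
    baseline_total = assignments * baseline_hours_each
    return baseline_total * reduction_pct / 100


ASSIGNMENTS_PER_MONTH = 200   # from the example in the text
BASELINE_HOURS_EACH = 1.5     # hypothetical assumption, not a measured value

low = hours_saved(ASSIGNMENTS_PER_MONTH, BASELINE_HOURS_EACH, 40)
high = hours_saved(ASSIGNMENTS_PER_MONTH, BASELINE_HOURS_EACH, 90)
print(f"Estimated saving: {low:.0f} to {high:.0f} hours per month")
```

The result scales linearly with the baseline, so the estimate is only as good as the measured time per assignment that goes into it.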

Accuracy improvements emerge particularly in subtle or ambiguous cases. Consider a learner who addresses an HR scenario by synthesising multiple theoretical frameworks in an integrated analysis. An AI might struggle to recognise this sophisticated approach if it differs from training examples, potentially under-marking innovative thinking. Human experts spot these instances of advanced understanding, ensuring accurate recognition of competence.

Maintaining CIPD grading standards requires human final judgement because professional qualifications assess workplace-ready competence, not merely academic knowledge. A technically correct answer that demonstrates poor professional judgement needs human evaluation to identify this disconnect. Similarly, responses showing exceptional insight despite minor technical errors require human discernment to award appropriate credit.

| Metric | AI-only grading | Hybrid with human review | Improvement |
|---|---|---|---|
| Inter-rater reliability (IRR) | 0.847 | 0.847 | Maintained consistency |
| Length bias detection | Not addressed | Systematically corrected | Fairer scoring |
| Marking time per assignment | 8-12 minutes | 3-5 minutes | 40-60% reduction |
| Edge case accuracy | 72% | 94% | 22 percentage points |
| Learner satisfaction with feedback | 68% | 89% | 21 percentage points |

Key accuracy benefits of human oversight:

- Correction of systematic AI biases like length preference

- Recognition of sophisticated reasoning expressed unconventionally

- Appropriate credit for professional judgement and workplace application

- Identification of technically correct but professionally inappropriate responses

- Contextual interpretation of ambiguous or incomplete answers

- Consistency with evolving CIPD criteria and contemporary HR practice

Pro Tip: Track the agreement rate between AI initial marks and human final marks over time. Rising agreement suggests the AI system is aligning more closely with human judgement, for instance after it has been updated using human corrections, whilst persistent disagreement in specific areas highlights where human expertise remains essential. Use this data to refine your AI-assisted CIPD marking and optimise your workflow.
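
The agreement tracking in the tip above could be sketched as follows. The definition of "agreement" used here (marks within 2 points of each other), the month labels, and the mark pairs are all assumptions for illustration; a centre might prefer agreement on grade band instead.

```python
def agreement_rate(pairs, tolerance=0):
    """Share of scripts where AI and human marks differ by at most `tolerance`."""
    if not pairs:
        raise ValueError("need at least one (ai_mark, human_mark) pair")
    agreed = sum(1 for ai, human in pairs if abs(ai - human) <= tolerance)
    return agreed / len(pairs)


# Hypothetical (AI initial mark, human final mark) pairs per month
by_month = {
    "2024-01": [(62, 58), (70, 70), (55, 61), (48, 50)],
    "2024-02": [(64, 63), (71, 70), (58, 59), (45, 52)],
}
trend = {month: agreement_rate(pairs, tolerance=2)
         for month, pairs in by_month.items()}
print(trend)
```

Breaking the same calculation down by unit or question type would show where disagreement persists, which is where the tip suggests human expertise matters most.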

Best practices for integrating human review in CIPD AI-assisted assessments

Successful implementation starts with clear role definition. Use AI to draft feedback and score routine responses only, establishing from the outset that human markers hold final authority. This positioning prevents over-reliance on automation and maintains professional accountability. Markers should view AI suggestions as a starting point for their expert judgement, not as definitive answers requiring minimal review.

Apply human review as the final arbiter for all CIPD assessments, even those where AI expresses high confidence. A quick verification by an expert marker takes seconds but provides crucial quality assurance. For high-stakes assessments determining qualification outcomes, this human sign-off is non-negotiable both for regulatory compliance and learner confidence.

Align grading rubrics strictly with CIPD criteria for validity. The AI grading guidance recommends using rubrics that explicitly map to CIPD's assessment criteria around analysis, application, and knowledge demonstration. This alignment ensures AI suggestions relate directly to qualification requirements rather than generic writing quality or superficial features.

Document AI involvement and inform learners for transparency. Learners deserve to know that AI assists in their assessment, what role it plays, and how human oversight protects fairness. This communication builds trust and addresses concerns about algorithmic grading. Clear documentation also supports compliance with data protection regulations and provides an audit trail for quality assurance.

Audit regularly for bias and fairness to maintain standards. Systematic review of grading patterns across demographic groups, assignment types, and marking periods identifies emerging issues before they affect many learners. These audits should examine whether certain learner groups consistently receive different AI confidence scores, whether particular question types show systematic bias, and whether human override rates vary across markers.
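
Part of such an audit can be automated by computing human override rates per marker or per question type. The record layout below (`marker`, `question_type`, `overridden`) is a hypothetical schema chosen for this sketch, and the data is invented.

```python
from collections import defaultdict


def override_rates(records, key):
    """Fraction of AI marks overridden by a human, grouped by `key`."""
    totals = defaultdict(int)
    overrides = defaultdict(int)
    for record in records:
        group = record[key]
        totals[group] += 1
        overrides[group] += int(record["overridden"])
    return {group: overrides[group] / totals[group] for group in totals}


# Hypothetical audit records
records = [
    {"marker": "M1", "question_type": "case_study", "overridden": True},
    {"marker": "M1", "question_type": "report", "overridden": False},
    {"marker": "M2", "question_type": "case_study", "overridden": True},
    {"marker": "M2", "question_type": "case_study", "overridden": True},
    {"marker": "M2", "question_type": "report", "overridden": False},
]
print(override_rates(records, "marker"))
print(override_rates(records, "question_type"))
```

Large differences between groups are a reason to look closer rather than proof of bias, particularly with small samples. The same function can be pointed at any grouping field a centre records.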

Implementation checklist for CIPD training centres:

- Establish written policies defining AI and human roles in assessment

- Train markers on interpreting AI suggestions critically rather than accepting them uncritically

- Create rubrics that explicitly reference CIPD unit criteria and assessment guidance

- Develop learner-facing communications explaining AI assistance and human oversight

- Implement statistical monitoring dashboards tracking AI-human agreement rates

- Schedule quarterly bias audits examining grading patterns across learner demographics

- Maintain detailed logs of AI involvement for regulatory compliance and appeals

- Review and update AI training data regularly to reflect current CIPD standards
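
For the logging item in the checklist above, one possible shape for an audit record is shown below. The field names, the `make_entry` helper, and the JSON-lines style output are assumptions for illustration; actual retention and content requirements should follow the awarding body's and regulator's guidance.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass
class MarkingLogEntry:
    """One audit record of AI involvement in a marking decision (hypothetical)."""
    submission_id: str
    ai_suggested_mark: float
    ai_confidence: float
    human_final_mark: float
    human_marker_id: str
    overridden: bool
    logged_at: str


def make_entry(submission_id, ai_mark, ai_confidence, human_mark, marker_id):
    """Build a log entry, recording whether the human changed the AI mark."""
    return MarkingLogEntry(
        submission_id=submission_id,
        ai_suggested_mark=ai_mark,
        ai_confidence=ai_confidence,
        human_final_mark=human_mark,
        human_marker_id=marker_id,
        overridden=(ai_mark != human_mark),
        logged_at=datetime.now(timezone.utc).isoformat(),
    )


entry = make_entry("A3", 63.0, 0.60, 58.0, "marker-07")
line = json.dumps(asdict(entry))   # one JSON object per line, ready to append
print(line)
```

Keeping the AI suggestion and the human final mark side by side in each record makes it straightforward to reconstruct who decided what during an appeal.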

Pro Tip: Involve experienced CIPD markers in AI system training and calibration. Their expertise helps the AI learn appropriate standards whilst their ongoing feedback improves system performance. This collaborative approach also builds marker confidence in the technology, easing adoption and encouraging thoughtful use of AI-assisted CIPD marking.

Explore AI-assisted CIPD marking solutions with EduMark

Implementing these best practices requires the right technological foundation. EduMark offers AI-assisted marking specifically designed for CIPD qualifications, built around the hybrid approach this guide recommends. The platform combines AI efficiency for initial assessment with structured workflows ensuring expert human review remains central to every grading decision.

Training centres using EduMark benefit from transparent marking processes where AI suggestions are clearly distinguished from human judgements. The system flags low-confidence assessments automatically, routes complex cases to senior markers, and maintains detailed audit trails supporting quality assurance and regulatory compliance. Markers receive AI-drafted feedback aligned to CIPD criteria, which they refine and personalise before learners see it, ensuring every comment reflects genuine expert insight.

This approach helps training centres maintain grading quality whilst managing growing assessment volumes. The platform supports the statistical monitoring and bias auditing practices outlined above, giving assessment leads visibility into marking patterns and system performance. For CIPD markers and training centres committed to responsible AI use with robust human oversight, AI-assisted CIPD assignment marking through EduMark provides the tools to deliver efficient, fair, and compliant assessment at scale.

Frequently asked questions about human review in AI-assisted grading

What is CIPD's stance on AI-only grading for professional qualifications?

CIPD policy explicitly requires human oversight for all assessment decisions and forbids AI-only grading. Professional qualifications must involve expert human judgement as the final arbiter, with AI serving only as a supporting tool. This ensures accountability, fairness, and alignment with professional standards that algorithms alone cannot guarantee.

When do human reviewers intervene in AI-assisted grading workflows?

Human reviewers intervene at multiple points depending on the hybrid model used. All assessments receive at least a verification check by a human marker. Additionally, reviewers conduct detailed evaluation of low-confidence AI assessments, edge cases involving ambiguity or unusual responses, random samples for quality assurance, and any submission for which a learner requests human review. Statistical anomalies flagged by monitoring systems also trigger human investigation.

How should training centres communicate AI involvement to learners?

Transparency requires clear, accessible communication before assessment begins. Inform learners that AI assists in initial marking and feedback drafting but that qualified human markers make all final grading decisions. Explain how the hybrid system works, what safeguards protect fairness, and how they can request human review if concerned. Provide this information in assessment guidance documents and reinforce it in feedback communications.

What are the main benefits of hybrid grading models for CIPD assessments?

Hybrid models deliver 40-90% time savings compared to fully manual marking whilst maintaining or improving accuracy. They ensure consistent application of rubrics across large cohorts, free expert markers to focus on complex cases requiring nuanced judgement, provide faster turnaround times for learner feedback, and maintain regulatory compliance through documented human oversight. The efficiency gains allow training centres to scale assessment capacity without compromising quality.

How do you address concerns about AI fairness and bias in grading?

Address bias concerns through systematic auditing, transparent communication, and robust human oversight. Regular statistical analysis identifies patterns suggesting bias across demographic groups or assessment types. Human reviewers correct algorithmic errors like length bias before marks are finalised. Clear documentation of AI involvement and human review processes builds learner trust. Ongoing training data updates ensure the AI system reflects current standards and diverse learner populations, reducing bias at the source.