TL;DR:

- Accurate marking is essential for maintaining CIPD qualification credibility and learner trust.

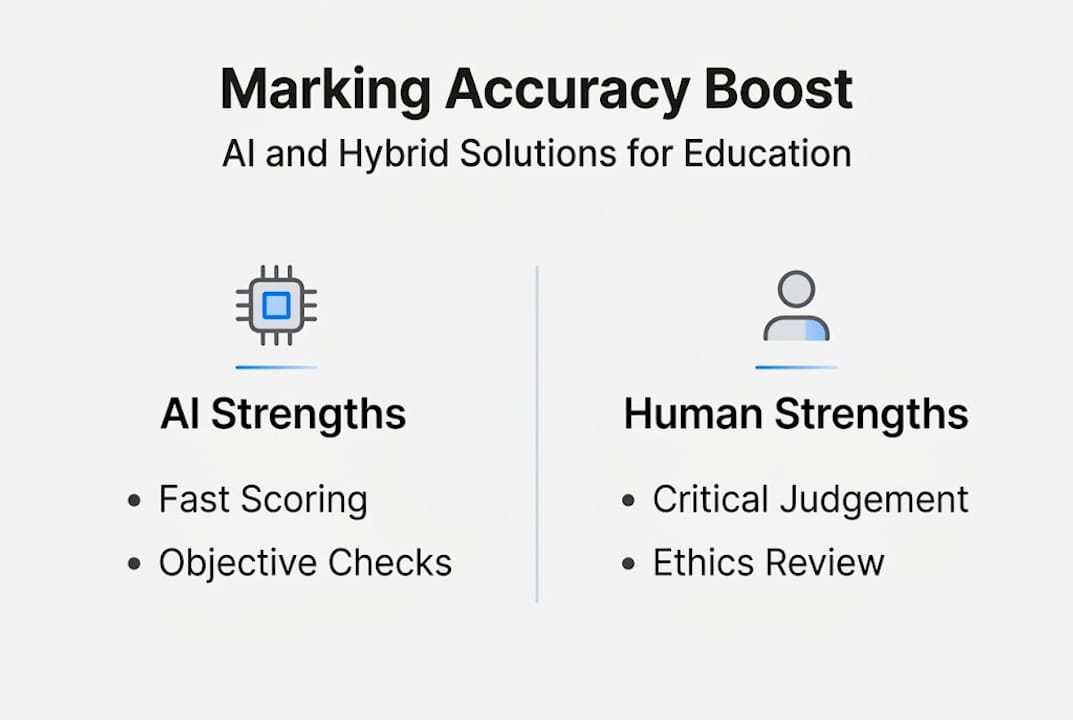

- Hybrid AI-human assessment systems improve reliability, fairness, and regulatory compliance.

- Certainty-Based Marking enhances assessment validity by penalising guessing and rewarding true understanding.

Marking accuracy sits at the heart of every credible CIPD qualification. Yet many assessment centres operate under a quiet assumption: that their current grading processes are consistent enough. Research tells a different story. AI tools can reach up to 90% accuracy in formative tasks, yet they stumble badly on the nuanced, analysis-heavy work that defines CIPD assessments. This guide explores why accuracy matters, how AI and human expertise each contribute, and what practical frameworks help institutions achieve fair, compliant, and reliable grading at scale.

Table of Contents

- Marking accuracy in CIPD assessments: why it matters

- The role of AI in educational marking accuracy

- Hybrid marking systems: blending AI and expert judgement

- Alternative marking models: certainty-based marking and its impact

- The uncomfortable truth about marking accuracy: why hybrid solutions matter most

- Enhance marking accuracy with EduMark's AI-assisted CIPD solutions

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Hybrid systems essential | Combining AI and human expertise delivers the most reliable marking accuracy for CIPD assessments. |
| AI excels in low-stakes tasks | Artificial intelligence achieves high accuracy for objective and formative assignments but needs human oversight for complex work. |
| Certainty-based marking improves fairness | CBM models enhance discrimination and perceived fairness despite lowering pass rates. |
| Compliance hinges on robust processes | Accurate marking and transparent workflows are key for accreditation and maintaining educational standards. |

Marking accuracy in CIPD assessments: why it matters

CIPD qualifications are not straightforward tick-box exercises. They require learners to demonstrate genuine understanding by connecting theory to workplace practice, evaluating evidence critically, and constructing well-reasoned arguments. Assessment criteria in CIPD qualifications emphasise analysis, application, and critical thinking through structured rubrics covering Pass, Merit, and Distinction bands. Markers are expected to identify theory-practice links and assess the credibility of the evidence learners present.

This complexity raises the stakes considerably for assessment centres. Inconsistent marking does not simply frustrate learners. It undermines the credibility of the qualification itself and exposes institutions to regulatory scrutiny. The types of educational assessments used across CIPD programmes vary widely, from written reports to case studies, and each format demands a different interpretive lens from the marker.

Rubrics help by setting clear expectations, but they are only as effective as the person applying them. A rubric that distinguishes between a Merit and a Distinction response requires skilled professional judgement, not just a checklist. When markers interpret the same rubric differently, inter-rater reliability (the degree to which two markers agree on the same work) drops, and with it, learner trust.
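
Inter-rater reliability can be put on a number. The sketch below computes Cohen's kappa, one common agreement statistic that corrects raw agreement for chance. The grades are invented for illustration, and the function name `cohens_kappa` is a hypothetical helper, not part of any CIPD tooling.

```python
from collections import Counter

def cohens_kappa(marker_a, marker_b):
    """Cohen's kappa for two markers grading the same submissions."""
    if len(marker_a) != len(marker_b) or not marker_a:
        raise ValueError("Both markers must grade the same non-empty set of submissions")
    n = len(marker_a)
    # Observed agreement: share of submissions given the same band by both markers
    observed = sum(a == b for a, b in zip(marker_a, marker_b)) / n
    # Chance agreement expected from each marker's own band frequencies
    freq_a, freq_b = Counter(marker_a), Counter(marker_b)
    expected = sum(freq_a[band] * freq_b[band] for band in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both markers used a single identical band throughout
    return (observed - expected) / (1 - expected)

# Hypothetical grades from two markers on the same six submissions
marker_a = ["Pass", "Merit", "Merit", "Distinction", "Pass", "Merit"]
marker_b = ["Pass", "Merit", "Pass", "Distinction", "Pass", "Distinction"]
kappa = cohens_kappa(marker_a, marker_b)
print(f"Cohen's kappa: {kappa:.2f}")
```

Here the markers agree on four of six submissions, but kappa is lower than the raw 67% agreement because some of that agreement would be expected by chance. A centre could track a figure like this over time to spot drift between markers.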

Key risks of inaccurate marking for CIPD assessment centres include:

- Inconsistent outcomes that disadvantage learners unfairly

- Reputational damage if moderation reveals systematic grading errors

- Compliance failures linked to accreditation requirements

- Increased appeals and administrative burden

- Loss of awarding body confidence

"Accurate marking is not just a quality assurance concern. It is a professional and ethical obligation to every learner who has invested time, money, and effort into their CIPD qualification."

Understanding the assessment principles that underpin fair grading is the first step toward building a more reliable marking system. With the case for accurate marking established, the next question is how AI is changing assessment practice.

The role of AI in educational marking accuracy

Artificial intelligence has entered educational marking with considerable momentum, and the results are genuinely impressive in certain contexts. AI marking accuracy reaches up to 90% in formative and low-stakes assessments, where answers are relatively structured and criteria are objective. Spelling, grammar, citation presence, and factual recall are areas where AI performs reliably and at speed.

However, the picture changes sharply for complex, high-stakes work. Creative writing, critical analysis, and the kind of reflective professional commentary central to CIPD assessments challenge AI significantly. Nuanced judgements about whether a learner has genuinely applied theory to practice, or merely described it, remain difficult for algorithms to make with confidence.

A useful comparison helps clarify where AI adds value and where it falls short:

| Task type | AI suitability | Human suitability |
|---|---|---|
| Grammar and spelling checks | Excellent | Moderate |
| Factual recall questions | Excellent | Moderate |
| Structured short answers | Good | Good |
| Critical analysis and evaluation | Poor | Excellent |
| Theory-practice application | Poor | Excellent |
| Creative or reflective writing | Poor | Excellent |

Comparisons of AI and human grading suggest that AI delivers strong ROI (reportedly up to 797%) for repetitive, objective marking tasks, but should not be relied on for high-stakes or CIPD-style analytical assessments without human oversight.

The most effective approach follows a clear sequence:

1. Use AI to process objective criteria and flag structural issues

2. Generate a draft mark with a confidence rating for each criterion

3. Route submissions with low confidence scores directly to human reviewers

4. Have human markers assess application, analysis, and critical thinking

5. Review final marks against moderation benchmarks before release
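
The routing step in the sequence above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the threshold of 0.8, the `CriterionDraft` structure, and the criterion names are all hypothetical, and a real AI tool may report confidence in a different form.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.8  # hypothetical cut-off; a centre would calibrate its own

@dataclass
class CriterionDraft:
    criterion: str
    draft_mark: int
    confidence: float  # 0.0 to 1.0, as reported by the (assumed) AI tool

def needs_priority_review(drafts, threshold=CONFIDENCE_THRESHOLD):
    """Return the criteria whose AI confidence falls below the threshold."""
    return [d.criterion for d in drafts if d.confidence < threshold]

# Hypothetical AI draft for one submission
drafts = [
    CriterionDraft("Referencing and structure", 4, 0.95),
    CriterionDraft("Theory-practice application", 3, 0.55),
    CriterionDraft("Critical analysis", 2, 0.40),
]

flagged = needs_priority_review(drafts)
# Every submission still reaches a human marker; low confidence only changes the queue
route = "human review (priority)" if flagged else "human review (standard)"
print(route, flagged)
```

In this sketch a low confidence score does not decide the mark. It only determines how quickly, and on which criteria, a human reviewer is asked to look.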

Pro Tip: When selecting AI grading software, prioritise platforms that provide confidence scores alongside each mark. This transparency is essential for maintaining AI feedback reliability and supporting human reviewers in making informed decisions quickly.

Understanding AI's capabilities leads us to consider how marking systems can be optimised through human and algorithmic collaboration.

Hybrid marking systems: blending AI and expert judgement

Hybrid marking is not a compromise. It is the most defensible, accurate, and compliant approach available to CIPD assessment centres today. The model works by assigning each element of a marking task to the tool best suited to handle it, rather than forcing either AI or humans to work outside their strengths.

In practice, AI handles the objective layer: checking whether a learner has referenced relevant CIPD frameworks, met word count requirements, structured their argument logically, and cited credible sources. These are consistent, rule-based criteria that AI processes quickly and reliably. Human markers then focus their expertise on the interpretive layer: assessing the quality of the analysis, the depth of critical thinking, and the sophistication of theory-practice integration.
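
The objective layer is largely rule-based, so parts of it do not need a model at all. The sketch below shows two such checks, word count and presence of required framework terms. The function name, the word limit, and the list of required terms are assumptions made for illustration, not CIPD requirements.

```python
def objective_checks(text, max_words, required_terms):
    """Run simple rule-based checks on a submission's text."""
    word_count = len(text.split())
    lowered = text.lower()
    # Case-insensitive search for each required framework or model name
    missing = [term for term in required_terms if term.lower() not in lowered]
    return {
        "word_count": word_count,
        "within_word_limit": word_count <= max_words,
        "missing_terms": missing,
    }

# Hypothetical submission extract and rubric settings
sample = "Using the CIPD Profession Map, this report evaluates our onboarding process."
result = objective_checks(sample, max_words=3000,
                          required_terms=["Profession Map", "Ulrich model"])
print(result)
```

Checks like these only establish that a framework is mentioned. Whether it has been applied well remains a question for the human marker.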

This division of labour produces measurable benefits:

| Benefit | AI contribution | Human contribution |
|---|---|---|
| Speed | Processes submissions instantly | Reviews flagged or complex cases |
| Consistency | Applies criteria uniformly | Applies professional judgement |
| Compliance | Documents every decision | Validates against accreditation standards |
| Fairness | Removes unconscious bias from objective checks | Contextualises learner circumstances |

For institutions seeking or maintaining CIPD accreditation, inter-rater reliability (IRR) monitoring is non-negotiable. Hybrid systems support IRR by creating an auditable trail of AI-generated drafts and human verification decisions, making it straightforward to demonstrate consistency during moderation.

The practical workflow looks like this:

1. AI generates a structured marking draft with criterion-level confidence scores

2. Submissions scoring below a confidence threshold are escalated to senior markers

3. Human reviewers add inline comments and adjust marks where professional judgement demands it

4. A compliance check confirms the final mark aligns with accreditation standards

5. The full rationale is documented and stored securely
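
To make the audit trail concrete, here is a minimal sketch of what one stored marking record might contain. The `MarkingRecord` fields, identifiers, and marks are all hypothetical, and secure storage is out of scope here. The point is only that the AI draft, the human decision, and the rationale sit together in one record.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone

@dataclass
class MarkingRecord:
    submission_id: str
    ai_draft_mark: int
    ai_confidence: float
    final_mark: int
    reviewer: str
    rationale: str
    # Timestamp in UTC so records from different sites sort consistently
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    @property
    def mark_adjusted(self):
        """True when the human reviewer changed the AI draft mark."""
        return self.final_mark != self.ai_draft_mark

# Hypothetical record for one reviewed submission
record = MarkingRecord(
    submission_id="SUB-0001",
    ai_draft_mark=3,
    ai_confidence=0.55,
    final_mark=2,
    reviewer="senior.marker@example.org",
    rationale="Theory is described but not applied to the workplace example.",
)

# One JSON line per decision is easy to append to a log and to query later
audit_line = json.dumps(asdict(record), sort_keys=True)
print(audit_line)
```

A record of this shape lets a moderator see, for any submission, what the AI proposed, what the human decided, and why the two differ.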

Pro Tip: Build human review into every high-stakes submission as a mandatory step, not an optional quality check. This protects both the learner and the institution in the event of an appeal.

An understanding of AI ethics for UK assessors, and of compliance in CIPD feedback, should inform every workflow decision your centre makes. With the best practices of hybrid marking established, the next section examines how alternative marking models affect accuracy.

Alternative marking models: certainty-based marking and its impact

While hybrid AI-human workflows address the grading process, some institutions are also rethinking the structure of assessments themselves. Certainty-Based Marking (CBM) is one such model, particularly relevant for multiple choice question (MCQ) components within CIPD and professional qualifications.

In CBM, learners do not simply select an answer. They also declare how confident they are in that answer. High confidence in a correct answer earns more marks. High confidence in a wrong answer incurs a penalty. This design rewards genuine knowledge and penalises guessing, producing a more accurate picture of what a learner actually understands.
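
A short worked example shows how confidence-weighted scoring differs from simply counting correct answers. The mark values below (three certainty levels, with penalties that grow with declared certainty) are an assumption chosen for illustration. They are not taken from the source or from any CIPD scheme.

```python
# Assumed scheme: certainty level -> (mark if correct, mark if wrong)
CBM_SCHEME = {1: (1, 0), 2: (2, -2), 3: (3, -6)}

def cbm_mark(correct, certainty):
    """Mark for one MCQ response under the assumed scheme."""
    if certainty not in CBM_SCHEME:
        raise ValueError(f"Unknown certainty level: {certainty}")
    mark_if_correct, mark_if_wrong = CBM_SCHEME[certainty]
    return mark_if_correct if correct else mark_if_wrong

def cbm_total(responses):
    """Sum CBM marks over (correct, certainty) pairs."""
    return sum(cbm_mark(correct, certainty) for correct, certainty in responses)

# Hypothetical learner: three right answers, two wrong, one wrong at high certainty
responses = [(True, 3), (True, 2), (False, 3), (False, 1), (True, 1)]

total = cbm_total(responses)                             # 3 + 2 - 6 + 0 + 1
number_right = sum(correct for correct, _ in responses)  # traditional score
print(f"CBM total: {total}, number right: {number_right}")
```

Under traditional scoring this learner gets 3 out of 5. Under the assumed CBM scheme the single confident wrong answer cancels out the gains, which is the behaviour the model is designed to produce.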

The evidence is compelling. A study of CBM scoring in medical education found that CBM reduced the pass rate compared with traditional Number Right Scoring (NRS), from 84.4% to 70%, while improving the discriminatory power of the assessment and receiving strong student approval for perceived fairness.

Key finding: the pass rate was 70% under CBM against 84.4% under traditional scoring, yet learners rated CBM as fairer (P<0.001).

"CBM does not make assessments harder for knowledgeable learners. It makes them harder for learners who rely on guessing, which is precisely the point."

A direct comparison of the two models:

| Feature | Traditional (NRS) | Certainty-Based Marking (CBM) |
|---|---|---|
| Pass rate | Higher (84.4%) | Lower (70%) |
| Discrimination | Moderate | Improved |
| Guessing penalty | None | Yes |
| Learner fairness perception | Variable | Positive |

For CIPD assessment centres, CBM is most appropriate for MCQ-based knowledge checks rather than analytical written work. Institutions must weigh the lower pass rates against the improved validity of results, particularly where accreditation bodies scrutinise assessment rigour. AI in assessment workflows can support CBM by automating the confidence-weighted scoring calculations, reducing administrative burden significantly.

In light of these models, it is time to consolidate our findings and outline how educators can enhance accuracy and compliance going forward.

The uncomfortable truth about marking accuracy: why hybrid solutions matter most

Here is something the technology industry rarely admits: AI alone cannot solve the marking accuracy problem for CIPD assessments. It can speed things up. It can reduce inconsistency in objective criteria. It can flag anomalies and generate useful drafts. But the moment a learner's work requires genuine professional interpretation, AI reaches its ceiling.

The institutions that achieve the highest marking accuracy are not those with the most sophisticated algorithms. They are the ones that have been honest about what AI can and cannot do, and have built workflows accordingly. They use AI where it excels and protect the human layer where it matters most.

There is also a compliance dimension that often goes undiscussed. Data protection law, including GDPR, and awarding body standards all require that marking decisions can be explained and justified. AI-generated marks without documented human oversight do not meet that standard. Human review in AI-assisted grading is not a luxury. It is effectively a requirement for any institution serious about accreditation.

Investing in hybrid systems is not about hedging your bets. It is about building the only model that actually works at scale for complex, high-stakes qualifications.

Enhance marking accuracy with EduMark's AI-assisted CIPD solutions

If your assessment centre is ready to move beyond inconsistent grading and build a workflow that genuinely supports compliance and accuracy, EduMark.ai was designed for exactly this challenge.

EduMark's AI-assisted CIPD marking platform combines structured AI drafts with mandatory human review, confidence scoring, inline comments, and full audit trails, all embedded directly into Word documents for a seamless marker experience. Every submission is handled in line with GDPR and UK data protection standards. Whether you are managing ten submissions or ten thousand, EduMark scales with your institution. Explore how accurate AI grading works in practice, and request a demo to see the workflow firsthand.

Frequently asked questions

How accurate is AI in marking CIPD assessments?

AI achieves up to 90% accuracy in formative, low-stakes tasks, but CIPD assessments require human judgement for critical analysis, theory-practice application, and compliance verification.

What is Certainty-Based Marking and why is it used?

Certainty-Based Marking asks learners to declare confidence alongside their answers, which reduces pass rates but improves discrimination and fairness in MCQ-based assessments compared to traditional scoring methods.

Why are hybrid marking systems preferred over pure AI?

Hybrid systems assign objective tasks to AI and analytical judgements to humans, producing compliance-ready results that neither approach can achieve independently, particularly for high-stakes CIPD qualifications.

How does marking accuracy affect accreditation?

Accreditation bodies require documented, consistent marking decisions supported by IRR monitoring, which hybrid AI-human workflows provide through auditable draft marks and human verification records.