Artificial intelligence is transforming educational assessment in the UK, but it also introduces genuine ethical risks that many institutions underestimate. From the 2020 A-level algorithm scandal that disadvantaged state school students to persistent bias in automated feedback systems, AI marking tools can embed unfairness at scale if deployed without rigorous oversight. Educators and quality assurance teams face mounting pressure to adopt these technologies while maintaining integrity, transparency, and equity. This guide provides practical ethical frameworks aligned with UK regulations, helping you navigate AI-assisted marking responsibly and avoid the pitfalls that have plagued earlier implementations.

Table of Contents

- Key takeaways

- Understanding ethical principles for AI in educational assessment

- How AI-assisted marking works and its ethical challenges

- Performance and limitations of AI in UK educational assessments

- Implementing ethical AI use: practical guidance for UK educators and quality assurance

- Enhance your marking with EduMark's AI-assisted solutions

- Frequently asked questions about educational AI ethics

Key Takeaways

| Point | Details |
|---|---|
| Human oversight essential | UK guidance requires ongoing human oversight to ensure fairness and accuracy in high-stakes assessments. |
| Transparent decision-making | Marking processes must be documented and accessible to learners and regulators to enable scrutiny. |
| Equity and bias focus | Regular fairness audits should test AI performance across SEND learners, multilingual learners, and varied socioeconomic groups to reveal gaps. |
| Limited high-stakes use | AI must not be the sole marker in high-stakes assessments and should operate within validated, safety-tested workflows with human review. |

Understanding ethical principles for AI in educational assessment

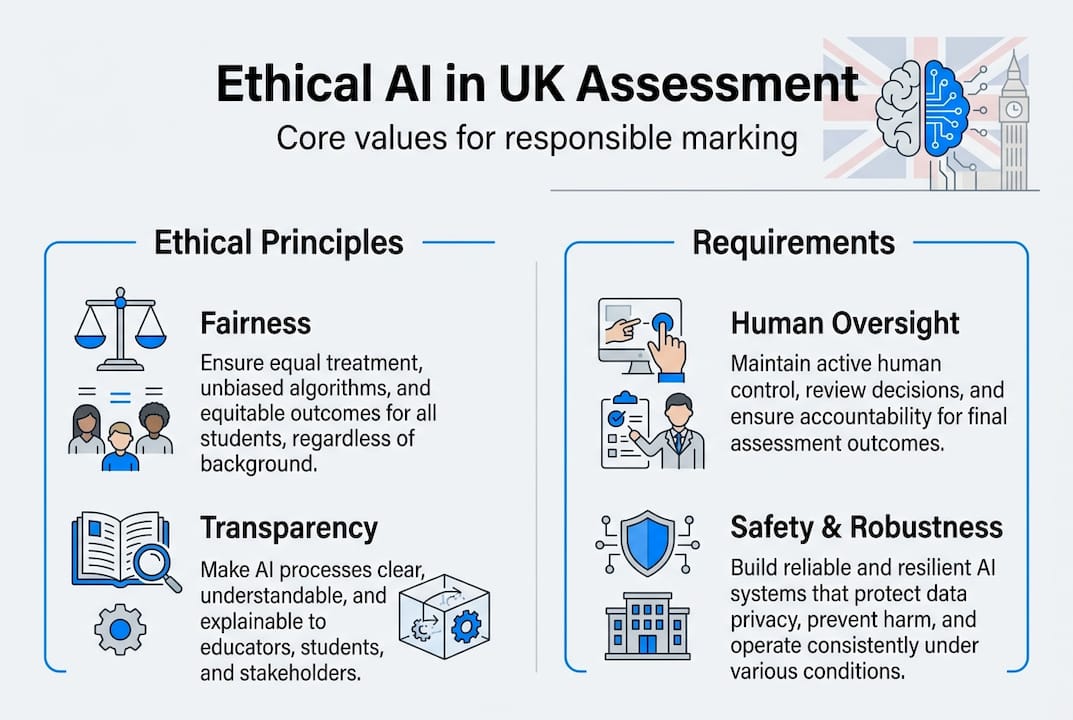

The UK government and Ofqual have set out principles for AI in marking that centre on core values including fairness, transparency, accountability, and safety. These aren't abstract ideals but practical requirements that shape how AI can legally and ethically support assessment in UK education. Fairness demands that AI systems treat all learners equitably, regardless of background, disability status, or protected characteristics. Transparency requires that marking processes remain understandable to students, educators, and regulators alike. Accountability ensures clear lines of responsibility when errors occur, while safety mandates robust testing before deployment.

Crucially, these principles establish that AI cannot serve as the sole marker in high-stakes assessments like GCSEs or A-levels. Human involvement remains mandatory, whether through hybrid models where AI handles specific objectives whilst humans assess others, or through comprehensive human review of all AI-generated marks. This human-in-the-loop requirement isn't merely regulatory box-ticking. It reflects genuine limitations in current AI capabilities, particularly around nuanced judgement, creativity assessment, and contextual understanding.

In practice, fairness means regularly auditing your AI tools against diverse learner populations, including SEND students and equity groups who may be underrepresented in training data. Transparency requires documenting how AI systems reach their conclusions and making this information accessible to learners who challenge their marks. Accountability demands clear protocols for human override and appeal processes. Safety necessitates thorough validity testing before rolling out AI marking to live assessments.

Pro Tip: Schedule quarterly fairness audits of your AI marking tools, specifically testing performance across SEND accommodations, multilingual learners, and students from varied socioeconomic backgrounds. Document any performance gaps and adjust training data or human oversight protocols accordingly.
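A fairness audit of the kind described in the Pro Tip can start simply: compare AI marks with trusted human marks for the same scripts, group the results by learner profile, and flag any group whose agreement falls noticeably below the overall rate. The sketch below is a minimal illustration under stated assumptions: exact agreement is used as the metric, the 5-percentage-point tolerance (`max_gap`) is hypothetical, and the group labels and marks are invented.

```python
from collections import defaultdict

def agreement_by_group(records):
    """Return {group: (count, exact-agreement rate)} from (group, ai_mark, human_mark) tuples."""
    totals = defaultdict(int)
    matches = defaultdict(int)
    for group, ai_mark, human_mark in records:
        totals[group] += 1
        if ai_mark == human_mark:
            matches[group] += 1
    return {g: (totals[g], matches[g] / totals[g]) for g in totals}

def flag_gaps(records, max_gap=0.05):
    """Flag groups whose agreement rate is more than max_gap below the overall rate.

    max_gap is a hypothetical tolerance; set it through your own QA policy.
    """
    overall = sum(1 for _, ai, human in records if ai == human) / len(records)
    flagged = []
    for group, (count, rate) in sorted(agreement_by_group(records).items()):
        if overall - rate > max_gap:
            flagged.append((group, count, round(rate, 3)))
    return overall, flagged

# Invented example data: (learner group, AI mark, human mark)
records = [
    ("EAL", 5, 5), ("EAL", 4, 6), ("EAL", 3, 3), ("EAL", 6, 4),
    ("SEND", 5, 5), ("SEND", 6, 6), ("SEND", 4, 4), ("SEND", 7, 5),
    ("neither", 5, 5), ("neither", 6, 6), ("neither", 4, 4), ("neither", 7, 7),
]
print(flag_gaps(records))  # (0.75, [('EAL', 4, 0.5)])
```

With only four scripts per group, as here, a single disagreement moves a group's rate by 25 points, so a real audit needs far larger samples before a gap is treated as evidence of bias.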

As the official guidance states, "AI systems must be designed and deployed in ways that are safe, secure, and robust." This means treating AI-assisted assignment marking as a support tool that enhances human expertise rather than replacing professional judgement. When you maintain this perspective, AI becomes a powerful ally in delivering faster, more consistent feedback whilst preserving the irreplaceable human elements of educational assessment.

How AI-assisted marking works and its ethical challenges

AI-assisted marking typically operates through hybrid models where technology and human expertise combine in structured workflows. Research on how these systems work describes two common approaches: AI handling specific learning objectives whilst humans assess others, or AI providing initial marks that trained educators then review and validate. These models require careful validity evaluation to ensure AI outputs align with human expert judgement and meet assessment standards.
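The hybrid patterns described above can be sketched as a routing step: criteria the institution has validated for AI support receive provisional AI marks, everything else goes straight to a human, and nothing is released until a human has confirmed every criterion. This is a minimal illustration, not any particular product's workflow; the criterion names and the `AI_ELIGIBLE` set are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Hypothetical: criteria this institution has validated for AI-suggested marks.
AI_ELIGIBLE = {"referencing", "structure"}

@dataclass
class CriterionMark:
    criterion: str
    mark: Optional[int]      # None until someone proposes a mark
    source: str              # "ai_provisional" or "human"
    confirmed: bool = False  # set only by a human marker

def route(criteria: List[str], ai_marks: Dict[str, int]) -> List[CriterionMark]:
    """Keep AI marks only for eligible criteria; leave all others for a human."""
    plan = []
    for criterion in criteria:
        if criterion in AI_ELIGIBLE and criterion in ai_marks:
            plan.append(CriterionMark(criterion, ai_marks[criterion], "ai_provisional"))
        else:
            plan.append(CriterionMark(criterion, None, "human"))
    return plan

def ready_for_release(plan: List[CriterionMark]) -> bool:
    """Release requires a human-confirmed mark on every criterion, AI-suggested or not."""
    return all(m.confirmed and m.mark is not None for m in plan)

def final_mark(plan: List[CriterionMark]) -> int:
    if not ready_for_release(plan):
        raise ValueError("every criterion needs a human-confirmed mark before release")
    return sum(m.mark for m in plan)

plan = route(["analysis", "referencing", "structure"],
             {"analysis": 9, "referencing": 4, "structure": 3})
print([(m.criterion, m.source, m.mark) for m in plan])
# [('analysis', 'human', None), ('referencing', 'ai_provisional', 4), ('structure', 'ai_provisional', 3)]
```

Because "analysis" is not AI-eligible, its AI suggestion is discarded. A human then enters that mark, reviews both AI suggestions (amending them if needed) and sets `confirmed`; only then does `final_mark` return a total.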

The ethical challenges emerge primarily from bias embedded in training data and algorithmic decision-making. The 2020 A-level grading scandal provides a stark warning: the algorithm, which moderated teacher-assessed grades largely on the basis of each school's historical results, disadvantaged state school students by relying on data that reflected existing inequalities rather than individual student achievement. This wasn't a technical glitch but a fundamental ethical failure in how the system was designed and validated. Similarly, research has identified gender bias in AI-generated feedback, where identical work receives different commentary depending on the perceived gender of the student.

Common sources of bias and error in AI marking systems include:

- Training data that underrepresents certain demographic groups, leading to poorer performance for SEND students, multilingual learners, or specific ethnic communities

- Algorithmic patterns that favour conventional writing styles over creative or culturally diverse expression

- Historical grade distributions that perpetuate institutional biases rather than reflect current cohort capabilities

- Limited contextual understanding that misinterprets legitimate alternative approaches as errors

- Inconsistent handling of edge cases where human judgement would recognise valid exceptions to general rules

AI excels at speed and consistency when marking straightforward, criteria-based responses. A well-trained system can process hundreds of assignments in the time a human marker handles a dozen, maintaining uniform application of rubric criteria without fatigue effects. However, AI struggles profoundly with assessing creativity, interpreting nuanced argumentation, recognising sophisticated critical thinking, and understanding context-dependent responses. These limitations make AI fundamentally unsuitable as a sole marker for complex, high-stakes assessments.

| AI strengths | AI limitations |
|---|---|
| Rapid processing of large volumes | Poor assessment of creativity and originality |
| Consistent rubric application | Limited contextual understanding |
| Reduced fatigue-related errors | Bias from historical training data |
| Scalable feedback generation | Struggles with nuanced argumentation |
| Objective criteria matching | Cannot recognise sophisticated critical thinking |

Pro Tip: Establish a systematic bias monitoring process by regularly testing your AI marking tools against representative datasets that include diverse learner profiles. Pay particular attention to performance variations across SEND accommodations, English as an additional language learners, and students from underrepresented backgrounds. Document any discrepancies and adjust human oversight protocols to compensate.
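Before comparing performance across groups, it is worth checking that the test set actually contains enough scripts from each group of interest; otherwise an apparent gap, or an apparent absence of one, may just be noise. A minimal sketch follows. The minimum of 30 scripts per group is a hypothetical rule-of-thumb threshold rather than a regulatory figure, and the group labels (including FSM for free school meals eligibility) are illustrative.

```python
from collections import Counter
from typing import Dict, Iterable

def undersized_groups(test_set_groups: Iterable[str],
                      required_groups: Iterable[str],
                      min_per_group: int = 30) -> Dict[str, int]:
    """Return each required group with fewer than min_per_group scripts, mapped to its count."""
    counts = Counter(test_set_groups)  # missing groups count as 0
    return {g: counts[g] for g in required_groups if counts[g] < min_per_group}

# Invented example: the learner group recorded for each script in a test set
test_set = ["EAL"] * 12 + ["SEND"] * 40 + ["neither"] * 200
print(undersized_groups(test_set, ["EAL", "SEND", "FSM"]))  # {'EAL': 12, 'FSM': 0}
```

Any group returned here needs more scripts adding before its results are reported, or its results should be explicitly marked as inconclusive.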

When implementing AI-assisted assignment marking, treat these limitations as design constraints rather than temporary inconveniences. Structure your workflows so human expertise handles the aspects where AI falls short, creating a genuinely complementary system rather than attempting to automate away professional judgement.

Performance and limitations of AI in UK educational assessments

Empirical evidence from UK educational contexts reveals both the promise and constraints of AI marking technology. Studies examining AI performance on GCSE essay marking report approximately 90% accuracy (that is, agreement with expert human markers) under controlled conditions. This sounds impressive until you consider what the remaining 10% represents: genuine student work incorrectly assessed, potentially affecting grades, progression opportunities, and learner confidence.
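Headline accuracy figures in this area usually mean agreement with human markers, and the definition matters: exact agreement is stricter than agreement within one mark or grade. The minimal sketch below computes both and translates a disagreement rate into a count of scripts for a cohort. The marks and the 180-script cohort are invented for illustration; chance-corrected statistics such as Cohen's kappa (often quadratic-weighted in automated essay scoring) are a common, more robust alternative not shown here.

```python
from typing import Sequence

def agreement_rate(ai_marks: Sequence[int], human_marks: Sequence[int], tolerance: int = 0) -> float:
    """Share of scripts where the AI mark is within `tolerance` marks of the human mark."""
    if not ai_marks or len(ai_marks) != len(human_marks):
        raise ValueError("need two non-empty mark lists of equal length")
    hits = sum(1 for ai, human in zip(ai_marks, human_marks) if abs(ai - human) <= tolerance)
    return hits / len(ai_marks)

def scripts_in_disagreement(cohort_size: int, rate: float) -> int:
    """Expected number of scripts whose AI mark differs from the human mark."""
    return round(cohort_size * (1 - rate))

ai = [6, 5, 7, 4, 8, 5, 6, 3, 7, 5]
human = [6, 5, 6, 4, 8, 5, 6, 5, 7, 5]
print(agreement_rate(ai, human))               # 0.8 (exact agreement)
print(agreement_rate(ai, human, tolerance=1))  # 0.9 (within one mark)
print(scripts_in_disagreement(180, 0.9))       # 18
```

At 90% agreement, a centre marking 180 scripts should expect around 18 to diverge from expert judgement, and it cannot know in advance which 18 they are.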

The accuracy figures also mask significant variation across different assessment types and student populations. AI performs best on structured responses with clear rubric criteria, such as short-answer questions or formulaic essay formats. Performance deteriorates markedly when assessing creative writing, sophisticated argumentation, interdisciplinary synthesis, or responses that demonstrate original thinking. These are precisely the higher-order skills that UK qualifications increasingly prioritise, creating a fundamental mismatch between AI capabilities and assessment objectives.

Typical error patterns in AI marking include:

- False positives in plagiarism or AI detection tools, flagging legitimate student work as potentially dishonest

- Misinterpretation of unconventional but valid approaches to problem-solving

- Overemphasis on surface features like grammar and formatting at the expense of conceptual understanding

- Failure to recognise sophisticated implicit argumentation that doesn't follow formulaic structures

- Inconsistent handling of subject-specific terminology or discipline-appropriate writing conventions

These errors aren't randomly distributed. They disproportionately affect students whose work diverges from the mainstream patterns in training data, including neurodivergent learners, multilingual students, and those from educational backgrounds underrepresented in the AI's development dataset.

| Assessment type | Typical AI accuracy (agreement with human markers) | Key limitations |
|---|---|---|
| Multiple choice | 95-98% | Minimal, mainly technical errors |
| Short structured answers | 85-92% | Struggles with alternative valid phrasings |
| GCSE essays | 88-93% | Poor creativity and nuance assessment |
| A-level extended writing | 75-85% | Cannot assess sophisticated argumentation |
| Creative assignments | 60-75% | Fundamentally unsuitable for sole marking |

When interpreting AI marking results, maintain healthy scepticism about edge cases and unexpected scores. A student whose work consistently receives high marks from human teachers but low AI scores likely represents a limitation in the AI system rather than a sudden performance drop. Similarly, unusually high AI marks for work that seems inconsistent with a student's demonstrated capabilities warrant human investigation.
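The check described above can be automated as a triage rule: compare each AI mark with the student's recent human-marked history and route large deviations, in either direction, to a human. A minimal sketch, assuming marks on a common scale; the three-piece minimum history, the two-standard-deviation cut-off (`k`) and the one-mark floor on the spread are hypothetical parameters.

```python
from statistics import mean, pstdev
from typing import Sequence

def needs_investigation(history: Sequence[float], ai_mark: float,
                        min_history: int = 3, k: float = 2.0, floor: float = 1.0) -> bool:
    """True if the AI mark sits more than k spreads from the student's human-marked history."""
    if len(history) < min_history:
        return True  # too little history to judge, so a human should look anyway
    centre = mean(history)
    spread = max(pstdev(history), floor)  # floor stops a very consistent history flagging tiny changes
    return abs(ai_mark - centre) > k * spread

history = [62, 65, 68]
print(needs_investigation(history, 48))  # True: unexpectedly low
print(needs_investigation(history, 66))  # False
print(needs_investigation(history, 78))  # True: unexpectedly high
```

A flag is a prompt for human judgement, not a verdict: students do improve or have off days, and the rule cannot distinguish that from an AI error.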

The lesson for UK educators and quality assurance teams is clear: treat AI accuracy figures as upper bounds achieved under ideal conditions rather than guaranteed performance in real-world deployment. Build your workflows around the assumption that human review remains essential, particularly for high-stakes decisions and students whose profiles differ from the AI's training population. When you approach AI-assisted marking solutions with this realistic understanding of capabilities and limitations, you can harness genuine benefits whilst protecting assessment integrity.

Implementing ethical AI use: practical guidance for UK educators and quality assurance

Translating ethical principles into operational practice requires systematic approaches that embed oversight, transparency, and fairness into your AI-assisted marking workflows. The DfE and Ofqual guidelines provide clear direction: prioritise human-in-the-loop control for all assessments, use only approved AI tools with proper training for staff, and conduct regular audits focused on bias in edge cases like SEND and equity groups.

Implementing these requirements effectively involves several concrete steps:

- Establish clear governance protocols that define when AI can support marking, what types of human oversight are required, and how decisions get escalated when AI and human judgements diverge significantly

- Train all staff involved in AI-assisted marking on both the technical operation of tools and the ethical principles governing their use, ensuring they understand limitations and bias risks

- Implement systematic validation processes that compare AI outputs against expert human marking across diverse student populations before deploying to live assessments

- Create transparent documentation of AI involvement in marking that students and parents can access, including clear appeal procedures for challenging AI-influenced decisions

- Schedule regular fairness audits that specifically examine AI performance across protected characteristics, SEND accommodations, and socioeconomic diversity

- Maintain detailed records of AI marking decisions, human overrides, and appeals to identify patterns that might indicate systematic bias or technical issues
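The escalation and record-keeping steps above can be combined in one small log: every script stores both the AI and human marks, scripts where they diverge beyond an agreed threshold are queued for a second marker, and the override rate falls out of the stored records. A minimal sketch; the three-mark threshold and the field names are hypothetical, and a production system would persist records securely rather than hold them in memory.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List

@dataclass
class Decision:
    script_id: str
    ai_mark: int
    human_mark: int
    escalated: bool
    recorded_at: str

@dataclass
class MarkingLog:
    threshold: int = 3  # hypothetical: divergence (in marks) that triggers escalation
    decisions: List[Decision] = field(default_factory=list)

    def record(self, script_id: str, ai_mark: int, human_mark: int) -> Decision:
        """Store both marks; the human mark stands, and large divergences go to a second marker."""
        decision = Decision(
            script_id,
            ai_mark,
            human_mark,
            escalated=abs(ai_mark - human_mark) > self.threshold,
            recorded_at=datetime.now(timezone.utc).isoformat(),
        )
        self.decisions.append(decision)
        return decision

    def override_rate(self) -> float:
        """Share of scripts where the human changed the AI mark."""
        if not self.decisions:
            return 0.0
        return sum(d.ai_mark != d.human_mark for d in self.decisions) / len(self.decisions)

    def escalation_queue(self) -> List[str]:
        return [d.script_id for d in self.decisions if d.escalated]

log = MarkingLog()
log.record("S1", 40, 41)
log.record("S2", 30, 38)
log.record("S3", 50, 50)
print(log.escalation_queue())         # ['S2']
print(round(log.override_rate(), 2))  # 0.67
```

Recording the learner group alongside each decision (subject to data protection rules) would let the same log feed the fairness audits, for example by comparing override rates across groups.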

The triadic ethics framework offers a useful structure for thinking through ethical considerations at different stages of your AI assessment pipeline. This framework divides ethics into physical ethics (data security and privacy), cognitive ethics (fairness and transparency in decision-making), and informational ethics (accuracy and appropriate use of information). Applying this lens helps you identify potential ethical issues before they affect students.

Physical ethics in AI marking means ensuring robust data protection that complies with the UK GDPR and the Data Protection Act 2018, securing student work against unauthorised access, and maintaining clear data retention and deletion policies. Cognitive ethics requires validating that AI marking processes treat all students fairly, making decision-making transparent to stakeholders, and preserving human agency in final assessment decisions. Informational ethics demands accuracy in AI outputs, appropriate use of student data solely for legitimate educational purposes, and clear communication about AI involvement in assessment.
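One concrete physical-ethics measure is pseudonymisation: replacing student identifiers with a keyed hash before work is sent to an external AI tool, so that only the institution, which holds the key, can link results back to individuals. A minimal sketch using Python's standard `hmac` and `hashlib` modules; `prepare_submission` and the `candidate_ref` field are hypothetical. Pseudonymised data is still personal data under the UK GDPR, and the submitted text may itself identify the student, so this reduces rather than removes data protection obligations.

```python
import hashlib
import hmac

def pseudonymise(student_id: str, secret_key: bytes) -> str:
    """Keyed SHA-256 hash of a student ID; without the key, the pseudonym cannot be recomputed."""
    return hmac.new(secret_key, student_id.encode("utf-8"), hashlib.sha256).hexdigest()

def prepare_submission(student_id: str, text: str, secret_key: bytes) -> dict:
    # Only the pseudonym and the work itself leave the institution.
    return {"candidate_ref": pseudonymise(student_id, secret_key), "text": text}

key = b"replace-with-a-securely-stored-random-key"
submission = prepare_submission("S123", "Essay text...", key)
print(len(submission["candidate_ref"]))  # 64
```

In practice the key should be generated randomly (for example with `secrets.token_bytes(32)`) and stored separately from the pseudonymised data.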

Pro Tip: Create a simple ethics checklist based on the triadic framework that staff complete before deploying any new AI marking tool or expanding AI use to new assessment types. This forces systematic consideration of physical, cognitive, and informational ethics risks before they materialise in practice.
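Such a checklist can be encoded so that deployment is blocked until every item is signed off. A minimal sketch; the items are hypothetical examples grouped under the three headings of the triadic framework, not an official list, and each institution would write its own. "DPIA" refers to a data protection impact assessment under the UK GDPR.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical checklist items, grouped by the triadic framework.
CHECKLIST: Dict[str, List[str]] = {
    "physical": [
        "DPIA completed",
        "Retention and deletion periods agreed",
    ],
    "cognitive": [
        "Validated against human marking across SEND, EAL and socioeconomic groups",
        "Human override and appeal route documented",
    ],
    "informational": [
        "Students and parents told how AI is used",
        "Student data restricted to assessment purposes",
    ],
}

def outstanding_items(completed: Set[str]) -> List[Tuple[str, str]]:
    """List (area, item) pairs not yet signed off."""
    return [(area, item) for area, items in CHECKLIST.items()
            for item in items if item not in completed]

def may_deploy(completed: Set[str]) -> bool:
    return not outstanding_items(completed)

done = {"DPIA completed", "Human override and appeal route documented"}
for area, item in outstanding_items(done):
    print(f"[{area}] {item}")
print("Deploy:", may_deploy(done))  # Deploy: False
```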

When selecting AI marking tools, prioritise platforms that demonstrate explicit alignment with UK regulatory requirements and provide transparent documentation of their validation processes, bias testing, and human oversight mechanisms. Tools designed specifically for UK educational contexts, like those supporting CIPD qualifications, typically incorporate these requirements by design rather than as afterthoughts. The goal isn't to eliminate AI from assessment workflows but to deploy it responsibly within clear ethical boundaries that protect learner interests whilst capturing genuine efficiency and consistency benefits.

Remember that ethical AI use isn't a one-time implementation task but an ongoing commitment to monitoring, evaluation, and adjustment as both technology and student populations evolve. What works fairly for one cohort may introduce bias for another, and what seems transparent to educators may remain opaque to students and families. Regular stakeholder feedback, combined with systematic performance monitoring across diverse learner groups, helps you maintain ethical standards as your AI-assisted marking practices mature.

Enhance your marking with EduMark's AI-assisted solutions

Navigating the ethical complexities of AI in educational assessment doesn't mean abandoning the technology's genuine benefits. EduMark provides AI-assisted CIPD assignment marking specifically designed for UK educators and assessment centres, incorporating the ethical guidelines and human oversight requirements we've explored throughout this guide. Our platform combines AI efficiency with mandatory human review, ensuring faster turnaround times and consistent feedback whilst maintaining the professional judgement essential for fair, transparent assessment.

Every assignment processed through EduMark receives structured feedback with clear rationale, confidence indicators, and inline comments that students can understand and learn from. Our system supports GDPR-compliant data handling, detailed audit trails, and transparent documentation of AI involvement in marking decisions. Whether you're managing CIPD qualifications or other professional training assessments, EduMark's AI marking solutions help you deliver reliable, efficient, and ethically sound assessment feedback at scale. Explore how our platform can complement your existing quality assurance processes whilst upholding the highest standards of educational integrity.

Frequently asked questions about educational AI ethics

What safeguards exist to prevent AI bias in marking?

UK regulatory guidance calls for human oversight of all AI-assisted marking, validity testing against diverse student populations before deployment, and regular audits examining performance across protected characteristics and SEND accommodations. Institutions must document AI involvement transparently and maintain clear appeal procedures for students challenging AI-influenced decisions.

Can AI replace human markers in high-stakes assessments?

No. Ofqual and DfE guidelines explicitly prohibit AI from serving as the sole marker in high-stakes qualifications like GCSEs and A-levels. Human involvement remains mandatory, either through hybrid models where AI handles specific objectives whilst humans assess others, or through comprehensive human review of all AI-generated marks before finalisation.

How can educators ensure transparency when using AI tools?

Maintain clear documentation of which assessments involve AI support, how AI outputs are validated by human markers, and what appeal processes exist for students. Communicate AI involvement to learners and parents in accessible language, explaining both capabilities and limitations. Provide detailed rationale for marks that students can understand regardless of AI involvement.

What training is recommended for staff using AI-assisted marking?

Staff need training on both technical operation of AI tools and ethical principles governing their use. This includes understanding AI limitations and bias risks, recognising when human override is necessary, interpreting AI confidence indicators, and following institutional protocols for escalating concerns about AI outputs. Regular refresher training ensures practices evolve with technology.
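Confidence indicators are most safely used to order human review rather than to skip it. The sketch below assumes, hypothetically, that the tool reports a confidence score between 0 and 1 for each script; scripts are sorted so the least confident are reviewed first and labelled high priority, but every script stays in the queue. The 0.7 cut-off is an illustrative value, and a tool's confidence scores should themselves be checked against human marks before anyone relies on them.

```python
from typing import Dict, List

def review_queue(scripts: List[Dict], low_confidence: float = 0.7) -> List[Dict]:
    """Return every script, least confident first, tagged with a review priority."""
    ordered = sorted(scripts, key=lambda s: s["confidence"])
    return [
        {**s, "priority": "high" if s["confidence"] < low_confidence else "standard"}
        for s in ordered
    ]

scripts = [
    {"id": "A", "confidence": 0.92},
    {"id": "B", "confidence": 0.41},
    {"id": "C", "confidence": 0.75},
]
for s in review_queue(scripts):
    print(s["id"], s["priority"])
# B high
# C standard
# A standard
```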

Are there examples of AI ethics failures in UK education to learn from?

The 2020 A-level algorithm scandal provides the most prominent example, where reliance on historical performance data disadvantaged state school students and embedded existing inequalities into grades. Research has also identified gender bias in AI-generated feedback and false positives in AI detection tools. These failures highlight why human oversight and regular bias auditing remain essential.

How often should institutions audit AI marking tools for fairness?

Quarterly audits provide a reasonable baseline, with additional reviews triggered by significant changes in student demographics, assessment formats, or AI tool updates. Audits should specifically examine performance across SEND accommodations, multilingual learners, and underrepresented groups, documenting any discrepancies and adjusting oversight protocols accordingly.
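That policy, a quarterly baseline plus event-driven reviews, can be expressed as a small scheduling rule. A minimal sketch; the 91-day approximation of a quarter and the trigger names are assumptions for illustration.

```python
from datetime import date, timedelta
from typing import Dict, List

QUARTER = timedelta(days=91)  # approximate length of a quarter

def audit_reasons(last_audit: date, today: date, triggers: Dict[str, bool]) -> List[str]:
    """Return why a fairness audit is due now; an empty list means none is due."""
    reasons = []
    if today - last_audit >= QUARTER:
        reasons.append("quarterly baseline")
    # Event-driven triggers, e.g. a tool update or a change in assessment format.
    reasons.extend(name for name, fired in triggers.items() if fired)
    return reasons

triggers = {"tool_updated": True, "assessment_format_changed": False,
            "cohort_demographics_changed": False}
print(audit_reasons(date(2024, 1, 10), date(2024, 3, 1), triggers))  # ['tool_updated']
print(audit_reasons(date(2024, 1, 10), date(2024, 4, 15), {}))       # ['quarterly baseline']
```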