AI-supported marking is no longer a distant prospect for CIPD training centres. It is already delivering measurable results: apprenticeship settings have recorded a 50% reduction in marking time without any compromise to feedback quality. For assessment coordinators juggling large cohorts, tight turnaround windows, and increasingly demanding regulatory expectations, that figure is hard to ignore. This guide cuts through the noise to explain how AI actually works inside assessment workflows, what it genuinely improves, and how your centre can adopt it responsibly and effectively.

Table of Contents

- Why AI in assessment workflows matters

- How AI works in assessment workflows

- Choosing the right AI tools for CIPD assessments

- Maintaining quality and ethics in AI-assisted marking

- Maximising feedback quality with AI support

- Overcoming barriers and practical steps for implementation

- Take the next step with AI-supported assessment

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI can halve marking time | Automated tools like Bud Mark have cut marking time by up to 50 percent in CIPD contexts without loss of feedback quality. |
| Hybrid models work best | The strongest results come from combining AI efficiency with human judgement and tailored feedback. |
| Choose customisable solutions | Select AI tools that support custom rubrics and regulatory compliance for seamless integration into CIPD workflows. |
| Quality and ethics matter | Consistent human oversight preserves fairness and meets ethical standards in all automated assessment processes. |

Why AI in assessment workflows matters

Traditional assessment processes carry a familiar set of frustrations. Marking large volumes of CIPD submissions by hand is time-consuming, and when multiple assessors are involved, inconsistency creeps in. Feedback arrives late, candidates lose momentum, and coordinators spend hours chasing quality checks rather than improving learning outcomes.

AI directly addresses these pain points. It automates the repetitive steps that eat into assessor time, applies rubric criteria consistently across every submission, and flags potential issues before they reach the final review stage. For CIPD centres, the benefits of automated assessment go well beyond speed. Fairness improves because every learner is assessed against the same criteria, applied the same way, every time.

Tools built for vocational and HR training environments have demonstrated real reductions in workload. AI tools that zip through repetitive marking steps without skipping critical rubric points are already in active use across apprenticeship programmes. The evidence is there. The question is how to apply it well.

"The goal is not to replace assessors. It is to give them back the time and headspace to do the parts of their job that genuinely require human expertise."

- Faster turnaround times for candidates

- More consistent application of marking criteria

- Reduced administrative burden on assessment teams

- Earlier identification of common learner gaps

- More accurate grading across large cohorts

How AI works in assessment workflows

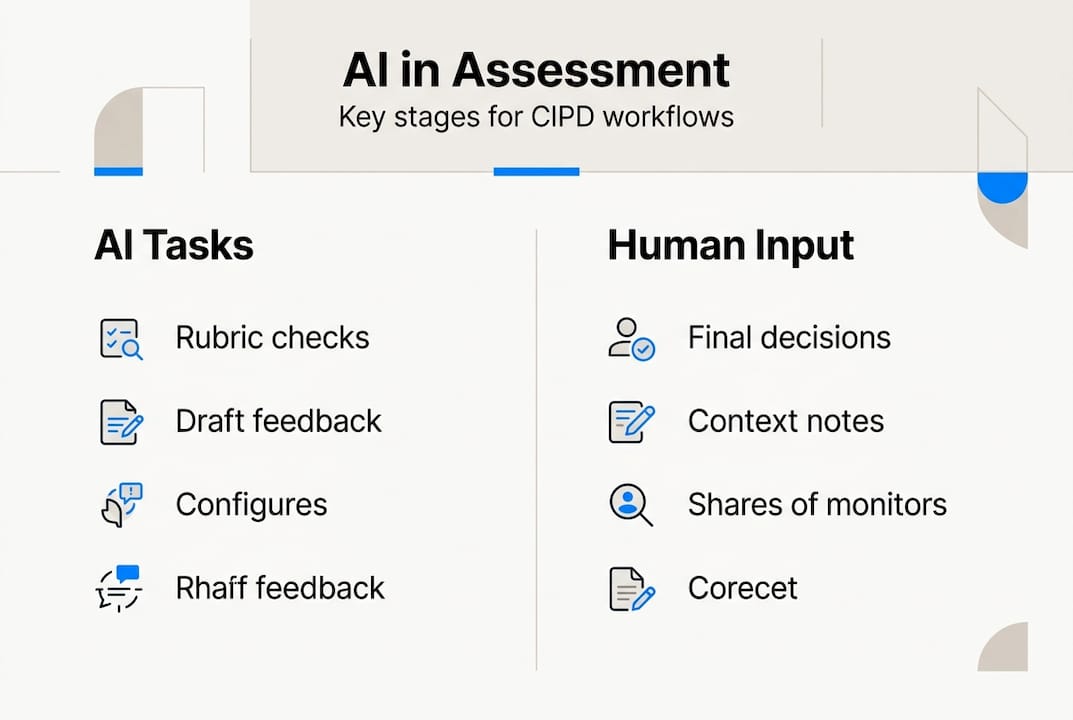

Understanding where AI fits into the process removes a lot of the anxiety around adoption. The workflow does not change entirely. AI slots into specific stages where volume and repetition are highest, leaving the judgement-heavy moments firmly with your assessors.

Here is how a typical AI-supported CIPD assessment workflow breaks down:

- Submission upload — The learner submits their assignment through the platform. The system captures metadata and prepares the document for analysis.

- Rubric application — AI maps the submission against pre-configured marking criteria, identifying which standards have been met, partially met, or missed.

- Automated flagging — Sections that are ambiguous, incomplete, or potentially non-compliant are flagged for human review rather than auto-marked.

- Draft feedback generation — The system produces structured, rubric-aligned feedback notes that the assessor can review, edit, and personalise.

- Human review and sign-off — The assessor reviews the AI output, applies contextual judgement, adds personalised commentary, and approves the final mark.

- Audit trail creation — Every decision, edit, and approval is logged for compliance and internal quality assurance purposes.
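To make those six stages concrete, here is a minimal Python sketch. It is not how EduMark, Bud Mark or any other platform works internally: the rubric, the criterion codes (`AC1.1` and so on), the keyword matching used for rubric application, and every function name are hypothetical illustrations. What it does show is the shape of a hybrid workflow: the AI steps write to the audit trail, ambiguous criteria are flagged rather than auto-marked, and only a named human assessor can set the final mark.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Rubric outcomes named in the workflow above.
MET, PARTIAL, MISSED = "met", "partially met", "missed"


@dataclass
class Submission:
    """Submission upload: the learner's work plus everything recorded about it."""
    learner_id: str
    text: str
    criterion_status: dict = field(default_factory=dict)
    flags: list = field(default_factory=list)
    draft_feedback: list = field(default_factory=list)
    final_mark: Optional[str] = None
    audit_log: list = field(default_factory=list)

    def log(self, actor: str, action: str) -> None:
        """Audit trail: record who did what, and when (UTC)."""
        self.audit_log.append((datetime.now(timezone.utc).isoformat(), actor, action))


def apply_rubric(sub: Submission, rubric: dict) -> None:
    """Rubric application. Keyword matching is a crude, hypothetical stand-in
    for whatever model a real marking platform uses."""
    text = sub.text.lower()
    for criterion, keywords in rubric.items():
        hits = sum(kw in text for kw in keywords)
        if hits == len(keywords):
            sub.criterion_status[criterion] = MET
        elif hits > 0:
            sub.criterion_status[criterion] = PARTIAL
        else:
            sub.criterion_status[criterion] = MISSED
    sub.log("ai", "rubric applied")


def flag_for_review(sub: Submission) -> None:
    """Automated flagging: partial matches are treated as ambiguous and
    routed to a human instead of being auto-marked."""
    sub.flags = [c for c, s in sub.criterion_status.items() if s == PARTIAL]
    sub.log("ai", f"flagged for review: {sub.flags}")


def generate_draft_feedback(sub: Submission) -> None:
    """Draft feedback: one structured, rubric-aligned note per criterion."""
    sub.draft_feedback = [f"{c}: {s}" for c, s in sub.criterion_status.items()]
    sub.log("ai", "draft feedback generated")


def sign_off(sub: Submission, assessor: str, mark: str, feedback: list) -> None:
    """Human review and sign-off: only a named assessor can set the final mark."""
    if assessor == "ai":
        raise ValueError("final marks require a human assessor")
    sub.draft_feedback = feedback
    sub.final_mark = mark
    sub.log(assessor, f"approved final mark: {mark}")


# Example run with a hypothetical rubric and submission.
rubric = {
    "AC1.1": ["stakeholder", "evidence"],
    "AC1.2": ["policy", "legislation"],
    "AC1.3": ["ethics"],
}
sub = Submission("L001", "I gathered evidence from each stakeholder group and reviewed the absence policy.")
apply_rubric(sub, rubric)
flag_for_review(sub)
generate_draft_feedback(sub)
sign_off(sub, "assessor_01", "Refer", sub.draft_feedback + ["Link the policy to current legislation."])
```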

The key distinction is that efficient, scalable, and consistent assessment depends on pairing AI capability with human oversight. AI handles volume. Humans provide nuance. Neither works as well alone.

| Task | AI role | Human role |
|---|---|---|
| Rubric application | Automated and consistent | Final approval |
| Ambiguous submissions | Flagged for review | Contextual judgement |
| Feedback drafting | Structured first draft | Personalisation and tone |
| Compliance logging | Automated audit trail | Quality assurance sign-off |

Exploring the full range of key assessment tools available for CIPD contexts will help you map these workflow stages to the right technology.

Choosing the right AI tools for CIPD assessments

Not every AI marking tool is built with CIPD qualifications in mind. Choosing the wrong one creates more problems than it solves. Here is what to prioritise when evaluating your options.

Must-have features for CIPD contexts:

- Customisable rubrics that reflect CIPD unit criteria precisely

- GDPR-compliant data handling and secure storage

- Transparent rationale behind every mark or flag

- Inline commenting and structured feedback embedded in documents

- Full audit trail for internal and external quality assurance

- Integration with existing submission and tracking systems

The 50% marking time reduction achieved in Skills4 trials depended specifically on custom criteria support and seamless integration with established workflows. A tool that forces you to rebuild your process around it will cost more time than it saves.

| Feature | Why it matters for CIPD |
|---|---|
| Custom rubric builder | Aligns AI output to specific unit standards |
| GDPR compliance | Protects candidate data under UK law |
| Confidence scoring | Flags uncertain marks for human review |
| Inline comments | Delivers feedback in familiar document format |
| Audit trail | Supports internal and external verification |
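Confidence scoring is simpler than it sounds. The Python sketch below assumes the marking tool returns a confidence value between 0 and 1 for each proposed criterion outcome; the `ProposedMark` type, the `route_by_confidence` function and the 0.8 threshold are all hypothetical. Anything below the threshold goes to a close-review queue, and nothing skips human sign-off.

```python
from dataclasses import dataclass

# Hypothetical threshold: calibrate it against assessor decisions during a pilot.
REVIEW_THRESHOLD = 0.8


@dataclass
class ProposedMark:
    criterion: str
    status: str        # "met", "partially met" or "missed"
    confidence: float  # 0.0 to 1.0, as reported by the (hypothetical) marking model


def route_by_confidence(marks, threshold=REVIEW_THRESHOLD):
    """Split AI-proposed marks into a quick-confirmation queue and a
    close-review queue. Both queues still go to a human for sign-off."""
    confirm, close_review = [], []
    for mark in marks:
        if not 0.0 <= mark.confidence <= 1.0:
            raise ValueError(f"confidence out of range for {mark.criterion}")
        if mark.confidence >= threshold:
            confirm.append(mark)
        else:
            close_review.append(mark)
    return confirm, close_review


marks = [
    ProposedMark("AC1.1", "met", 0.93),
    ProposedMark("AC1.2", "partially met", 0.61),
    ProposedMark("AC2.1", "missed", 0.80),
]
confirm, close_review = route_by_confidence(marks)
```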

Pro Tip: Before committing to any platform, run a small pilot with two or three assessors on a single CIPD unit. Measure time saved, feedback quality, and assessor confidence. Use that data to build your internal business case.
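The time-saved figure for a pilot is straightforward arithmetic. This Python sketch compares mean marking time per submission before and during the pilot; the function names and the timings are invented for illustration and are not data from any trial.

```python
from statistics import mean


def percent_time_saved(baseline_minutes, pilot_minutes):
    """Percentage reduction in mean marking time per submission."""
    baseline, pilot = mean(baseline_minutes), mean(pilot_minutes)
    return 100 * (baseline - pilot) / baseline


def throughput_multiplier(baseline_minutes, pilot_minutes):
    """How many times more submissions fit into the same assessor hours."""
    return mean(baseline_minutes) / mean(pilot_minutes)


# Illustrative timings (minutes per submission), not real pilot data.
before = [120, 100, 110]
after = [60, 50, 55]
print(f"{percent_time_saved(before, after):.0f}% less time per submission")
print(f"{throughput_multiplier(before, after):.1f}x submissions in the same hours")
```

Halving the time per submission doubles throughput, so when a vendor quotes a figure it is worth checking whether it describes time saved or speed gained.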

Reviewing the regulatory compliance guide for CIPD assessments will help you confirm that any tool you shortlist meets the standards your centre is held to.

Maintaining quality and ethics in AI-assisted marking

The most persistent concern about AI in assessment is that it will reduce the quality and fairness of marking. This concern is understandable, but it rests on a misunderstanding of how responsible AI tools actually work.

The best practice model is hybrid. AI handles volume and consistency; humans ensure fairness, context, and nuance. Neither replaces the other. Centres that treat AI as a full replacement for assessor judgement will encounter problems, while centres that use it as a structured support tool are far better placed to avoid them.

"Custom rubrics and human review are the foundation of ethical AI-assisted marking. Scale is only valuable when it does not come at the cost of fairness."

Key risks to manage actively:

- Over-reliance on AI output — Assessors must review, not simply approve, AI-generated marks and feedback.

- Rubric rigidity — Overly narrow criteria can disadvantage learners who demonstrate competence in unexpected ways.

- Data privacy — Candidate submissions must be handled in line with GDPR and UK data protection law at every stage.

- Bias in training data — AI systems should be regularly audited to ensure they do not systematically disadvantage particular learner groups.

The assessment ethics guide for UK assessors provides a solid framework for building these safeguards into your process. Understanding the importance of human review in AI-assisted grading is equally essential for any centre serious about maintaining standards.

Pro Tip: Schedule a quarterly review of your AI marking outputs. Compare AI-generated marks against final assessor decisions to identify any patterns of over-marking, under-marking, or systematic gaps in rubric coverage.
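A quarterly review can start as a simple tally. The Python sketch below assumes you can export, for each criterion on each submission, the status the AI proposed and the status the assessor finally approved; the export format, the function names and the 50% threshold for a "systematic" pattern are assumptions to adapt to your own records.

```python
from collections import defaultdict

# Order the rubric outcomes so AI and assessor decisions can be compared.
RANK = {"missed": 0, "partially met": 1, "met": 2}


def compare_marks(records):
    """records: (criterion, ai_status, assessor_status) tuples from the quarter.
    Counts agreement, AI over-marking and AI under-marking per criterion."""
    summary = defaultdict(lambda: {"agree": 0, "over": 0, "under": 0})
    for criterion, ai_status, assessor_status in records:
        diff = RANK[ai_status] - RANK[assessor_status]
        if diff == 0:
            summary[criterion]["agree"] += 1
        elif diff > 0:
            summary[criterion]["over"] += 1   # AI was more generous than the assessor
        else:
            summary[criterion]["under"] += 1  # AI was harsher than the assessor
    return dict(summary)


def systematic_patterns(summary, min_share=0.5):
    """Flag criteria where over- or under-marking reaches `min_share` of decisions."""
    flagged = []
    for criterion, counts in summary.items():
        total = sum(counts.values())
        for direction in ("over", "under"):
            if counts[direction] / total >= min_share:
                flagged.append((criterion, direction))
    return flagged


# Illustrative records, not real marking data.
records = [
    ("AC1.1", "met", "met"),
    ("AC1.1", "met", "partially met"),
    ("AC1.1", "met", "met"),
    ("AC1.2", "missed", "met"),
    ("AC1.2", "partially met", "met"),
    ("AC1.2", "met", "met"),
]
summary = compare_marks(records)
print(systematic_patterns(summary))  # [('AC1.2', 'under')]
```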

Maximising feedback quality with AI support

Speed is only part of the value AI brings to assessment. The bigger opportunity is in feedback quality. When assessors are not spending three hours marking a single submission, they have time to write feedback that actually moves learners forward.

AI contributes to feedback quality in several concrete ways:

- Instant formative feedback — Learners receive structured, rubric-aligned comments quickly, while the work is still fresh in their minds.

- Trend analysis — AI can identify patterns across a cohort, showing which criteria are consistently missed and where teaching input is needed.

- Gap highlighting — Specific sections of a submission that fall short of criteria are flagged clearly, making it easier for assessors to focus their written commentary.

- Consistency across assessors — Feedback structure remains uniform even when multiple assessors are involved, reducing the risk of learners receiving very different experiences.
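Cohort trend analysis, at its simplest, is counting. This Python sketch assumes criterion-level outcomes are available for every learner in a cohort and lists the criteria that a large share of learners did not fully meet; the data layout, criterion codes and 40% cut-off are hypothetical.

```python
from collections import Counter


def cohort_gaps(cohort, min_share=0.4):
    """cohort: learner_id -> {criterion: status}. Returns (criterion, share)
    pairs for criteria not fully met by at least `min_share` of learners,
    most frequently missed first. Assumes every learner is marked on every criterion."""
    not_met = Counter()
    for statuses in cohort.values():
        for criterion, status in statuses.items():
            if status != "met":
                not_met[criterion] += 1
    size = len(cohort)
    return [(c, n / size) for c, n in not_met.most_common() if n / size >= min_share]


# Illustrative cohort of five learners, not real data.
cohort = {
    "L1": {"AC1.1": "met", "AC1.2": "missed", "AC2.1": "met"},
    "L2": {"AC1.1": "met", "AC1.2": "partially met", "AC2.1": "met"},
    "L3": {"AC1.1": "missed", "AC1.2": "missed", "AC2.1": "met"},
    "L4": {"AC1.1": "met", "AC1.2": "met", "AC2.1": "partially met"},
    "L5": {"AC1.1": "met", "AC1.2": "missed", "AC2.1": "met"},
}
for criterion, share in cohort_gaps(cohort):
    print(f"{criterion}: not met by {share:.0%} of the cohort")
```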

The human role remains central. Assessors contextualise the AI output, add encouragement, clarify next steps, and ensure the tone is appropriate for each learner. Integrating AI and human input delivers faster, more consistent feedback that meets both regulatory and learner needs.

For practical guidance on structuring your feedback process, the resource on effective assessment feedback is worth reviewing alongside the feedback compliance tips specific to CIPD qualifications.

Overcoming barriers and practical steps for implementation

Knowing that AI works is one thing. Getting it live inside your centre is another. The barriers are real, but they are all manageable with the right approach.

The three most common obstacles are staff resistance to change, IT setup complexity, and uncertainty about how to measure success. Each is best handled through a structured rollout rather than a big-bang launch.

Here is a practical implementation sequence:

- Identify a pilot module — Choose one CIPD unit with a manageable cohort size and a willing assessor. Keep the scope tight.

- Configure your rubrics — Work with your assessment lead to translate existing marking criteria into the AI platform's rubric builder.

- Run the pilot — Process a full cohort of submissions through the hybrid workflow. Collect data on time saved, assessor confidence, and feedback quality.

- Gather feedback — Survey both assessors and learners. Identify friction points and refine the process before scaling.

- Train your team — Run structured training sessions that focus on how to review AI output critically, not just how to use the software.

- Scale gradually — Add units one at a time, applying lessons from each pilot before expanding further.

Workflow transformation is most effective when piloted in selected modules, with monitoring for both quality and user satisfaction. Rushing the rollout is the single biggest risk to a successful adoption.

Pro Tip: Involve both your IT lead and your most experienced assessor from day one. Technical setup and pedagogical confidence need to develop together, not in sequence.

Reviewing your approach to assessment data security before you go live will save you significant headaches later, particularly around GDPR obligations and candidate data handling.

Take the next step with AI-supported assessment

If this guide has clarified how AI can genuinely improve your assessment workflows, the logical next step is finding a platform built specifically for CIPD contexts. EduMark.ai is designed precisely for this. It supports structured rubric application, inline feedback, confidence scoring, and full audit trails, all within a GDPR-compliant environment that keeps human review at the centre of every decision.

Whether you are exploring AI marking for the first time or looking to replace a tool that is not delivering, EduMark's AI marking for CIPD is worth a closer look. EduMark offers a scalable, credit-based model that fits centres of all sizes, with no pressure to overcommit before you have seen results. Start with a pilot. See the difference. Then scale with confidence.

Frequently asked questions

How does AI reduce CIPD marking time without losing quality?

AI speeds up routine assessment checks and applies rubrics consistently across every submission, while final human review is retained for quality and nuance. In apprenticeship trials, marking time has been cut by 50% without any reduction in feedback depth.

What is the best way to blend AI and human review in assessment?

The ideal approach is a hybrid model where AI handles repetitive, volume-based tasks and humans focus on nuanced, context-led feedback. Hybrid models deliver consistency while preserving the ethical standards that CIPD assessment requires.

Are AI marking tools compliant with CIPD and UK regulations?

Leading AI tools are designed to meet UK educational regulations and support full audit trails when integrated correctly. Custom rubrics and secure data handling are core features of any compliant AI assessment platform.

What are the main barriers to adopting AI in assessment?

The biggest challenges are initial staff training, legacy workflows, and ensuring data security, all of which are manageable with a phased adoption approach. Successful pilots focus on training, structured feedback collection, and continuous improvement before scaling.