TL;DR:

- Choosing the right assessment mix is crucial for compliance, credibility, and learner development.

- Formative assessments provide ongoing feedback, while summative assessments certify achievement.

- Blending diagnostic, benchmark, and ipsative assessments offers targeted insights and supports personalized learning.

Choosing the wrong assessment approach does not just frustrate learners. It can compromise your compliance record, skew your quality data, and undermine the credibility of your entire training programme. For CIPD training centres, where regulatory scrutiny is constant and candidate outcomes matter enormously, getting the assessment mix right is a strategic priority. This guide walks you through the five main assessment types, from formative to ipsative, outlining how each works, where each fits, and how to blend them for both compliance and genuine learning impact.

Table of Contents

- How to evaluate and select assessment types

- Formative assessments: driving improvement in real time

- Summative assessments: certifying achievement and compliance

- Diagnostic, benchmark, and ipsative assessments: targeted insights

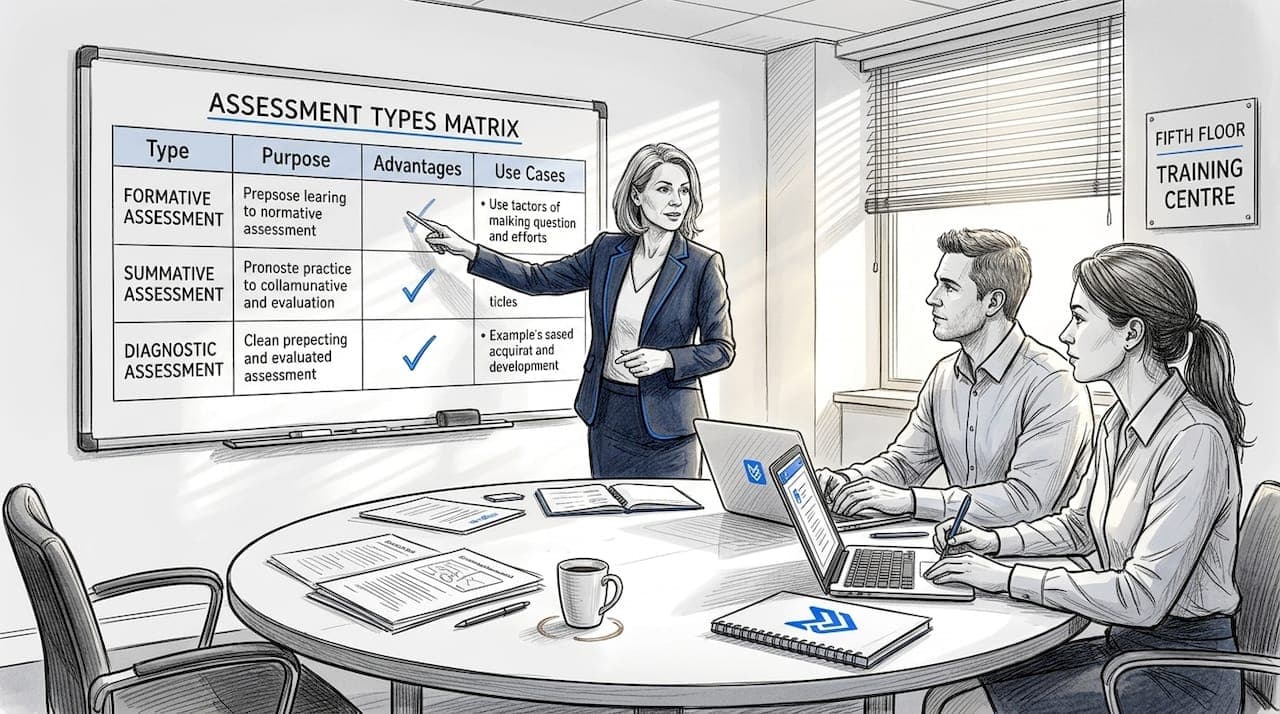

- Comparing assessment types: strengths, weaknesses and use cases

- Our perspective: moving beyond the checklist

- Enhance your assessment strategy with EduMark

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Comprehensive assessment mix | Blending formative, summative, diagnostic, benchmark, and ipsative assessments ensures robust and compliant evaluation. |
| Compliance made easier | Choosing the right method streamlines CIPD audits and meets regulatory requirements. |
| Formative boosts learning | Ongoing feedback through formative assessment directly improves training quality and outcomes. |
| Summative certifies achievement | Final assessments validate learning and satisfy formal criteria for progression or qualification. |
| Benchmark shows progress | Regular progress checks help meet standards and inform continuous improvement. |

How to evaluate and select assessment types

Before exploring each assessment type in detail, leaders should understand how to select the right mix for their context. The decision is rarely straightforward, and the consequences of a poor choice ripple through everything from learner motivation to audit outcomes.

When evaluating assessment methods, four core criteria should guide your thinking:

- Compliance requirements: What does your awarding body or regulatory framework demand? Summative evidence is often non-negotiable for certification.

- Assessment purpose: Are you measuring readiness, progress, or final achievement? Each calls for a different tool.

- Candidate profile: Consider learner experience, confidence levels, and prior knowledge. A diagnostic approach may be essential for mixed-ability cohorts.

- Resourcing: Some methods require significant assessor time. Others can be partially automated without sacrificing quality.

The main types are formative, summative, diagnostic, benchmark, and ipsative assessments, each serving a distinct function within a learning programme. Understanding this typology is the foundation of sound assessment design. For CIPD compliance, the general principle is to use low-stakes formative methods for ongoing evaluation and summative approaches for certification decisions.

An effective framework aligns each assessment method with specific training outcomes. Map your learning objectives first, then work backwards to identify which assessment type generates the evidence you need. Review your assessment checklist for CIPD compliance to ensure nothing is overlooked. For deeper guidance on methodology, explore CIPD assessment best practices to benchmark your current approach.
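
The objective-first mapping described above can be sketched as a small data structure. This is a minimal Python illustration, not a CIPD-prescribed method: the objective names, the `plan` dictionary, the `find_gaps` helper, and the rule that every objective needs both formative and summative evidence are all assumptions made for the example.

```python
from typing import Dict, List

# Hypothetical learning objectives mapped to the assessment types planned
# as evidence for each one. All names here are illustrative assumptions.
plan: Dict[str, List[str]] = {
    "Explain employment law basics": ["formative", "summative"],
    "Conduct a disciplinary interview": ["formative"],
    "Analyse workforce data": ["diagnostic", "summative"],
}

# Assumed rule for this sketch: every objective needs both of these types.
REQUIRED = {"formative", "summative"}


def find_gaps(plan: Dict[str, List[str]]) -> Dict[str, List[str]]:
    """Return, for each objective, the required assessment types it lacks."""
    gaps = {}
    for objective, types in plan.items():
        missing = sorted(REQUIRED - set(types))
        if missing:
            gaps[objective] = missing
    return gaps


gaps = find_gaps(plan)
for objective, missing in gaps.items():
    print(f"{objective}: missing {', '.join(missing)}")
```

A check like this only flags objectives with no planned evidence of a given type. Whether the planned tasks are valid measures of the objective still depends on assessor judgement.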

Pro Tip: Avoid relying on a single assessment type. Blending methods strengthens validity, captures a fuller picture of learner capability, and makes your programme more resilient during external review.

Formative assessments: driving improvement in real time

With a selection framework in place, the first assessment type to consider is formative assessment. Formative methods are the low-stakes, ongoing activities that happen throughout a learning programme rather than at the end of it.

Formative assessments are designed to inform instruction, not to certify achievement. Their primary value lies in the feedback loop they create between assessor and learner. When used well, they surface misconceptions early, before they become entrenched.

Common formative methods include:

- Quizzes and knowledge checks: Short, frequent, and easy to administer. Ideal for testing recall and comprehension.

- Exit tickets: Brief end-of-session reflections that reveal whether key concepts have landed.

- Peer and self-review: Encourages metacognition and builds critical thinking alongside subject knowledge.

- Observations and discussions: Particularly valuable in CIPD contexts where professional behaviours are being assessed.

Used together, these methods let assessors adjust instruction in real time. This adaptability is what makes formative assessment so powerful for professional development programmes.

The main limitation is scalability. Without the right tools, gathering and acting on formative data across a large cohort becomes unwieldy. This is where structured feedback in formative assessment becomes critical, ensuring consistency without overwhelming assessors. Platforms offering AI grading for formative assessment can significantly reduce the administrative burden while maintaining feedback quality.

From a compliance standpoint, formative evidence also strengthens your audit trail. It demonstrates that ongoing evaluation is embedded in your programme, not just bolted on at the end.

Pro Tip: Digital tools that automate formative data collection free assessors to focus on interpretation and intervention, which is where the real professional value lies.

Summative assessments: certifying achievement and compliance

After formative assessment sets the learning path, summative methods deliver the certification. These are the high-stakes evaluations that mark the end of a learning period and carry significant weight for both learners and institutions.

Summative assessments typically take the form of:

- Final examinations: Standardised, time-limited, and often externally moderated.

- Assessed projects or assignments: Allow learners to demonstrate applied knowledge over a longer period.

- Standardised tests: Benchmarked against national or organisational norms.

High-stakes formats such as these can amplify stress and encourage score inflation. This is a real tension for CIPD leaders. The pressure to demonstrate outcomes can inadvertently narrow the curriculum or push learners towards surface-level preparation rather than deep understanding.

Research on high-stakes testing points to the same risk: it can boost maths performance in some contexts, but it also narrows the curriculum and encourages inappropriate preparation strategies. For CIPD programmes, where professional judgement and applied competence matter more than rote recall, this distortion can be genuinely harmful.

Summative assessments are audit-critical. They provide the formal evidence that learners have met the required standard, which is why their design, marking, and moderation must be watertight.

External models offer useful reference points: curriculum-aligned assessment examples show how summative tasks can be contextualised effectively, and standardised test examples show how rigour and accessibility can coexist. The distinction between approaches is explored further in this overview of summative vs formative assessments.

Diagnostic, benchmark, and ipsative assessments: targeted insights

Beyond formative and summative, three specialised types offer additional insight and support. Each serves a distinct purpose, and together they fill gaps that the two main categories cannot address alone.

Diagnostic assessments use pre-tests and skills checklists to identify gaps before instruction begins. Benchmark assessments use periodic progress tests to track development over time. Ipsative assessments compare a learner against their own previous performance rather than against peers or a fixed standard.

Here is a comparison of their key features:

| Assessment type | Primary use | Key strength | Main limitation |
|---|---|---|---|
| Diagnostic | Pre-instruction gap analysis | Enables targeted teaching | Requires time before programme starts |
| Benchmark | Periodic progress tracking | Aligns with regulatory standards | Standards are framework-specific (e.g. NRS levels for adults), not universal in all contexts |
| Ipsative | Personal growth measurement | Motivates individual progress | Hard to aggregate for group reporting |

For CIPD training centres, ipsative approaches are particularly valuable in professional development contexts where individual growth trajectories matter more than cohort averages. Exploring self-assessment methods can provide practical techniques for embedding ipsative thinking into your programme design.

The practical steps for using these three types effectively are:

- Administer diagnostic assessments before the programme begins to segment learners by need.

- Schedule benchmark assessments at regular intervals, aligned to your reporting calendar.

- Introduce ipsative reflection at key programme milestones to build self-awareness and motivation.

- Use the data from all three to personalise support and demonstrate responsiveness to individual learner needs.
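
The steps above can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than a recommended scoring model: the learner names, the scores, the 60-point `SUPPORT_THRESHOLD`, and the `segment_by_need` and `ipsative_gain` helpers are all hypothetical.

```python
from typing import Dict, List

# Hypothetical scores out of 100. Learner names, scores, and the 60-point
# threshold are illustrative assumptions, not CIPD-defined values.
diagnostic_scores: Dict[str, int] = {"Asha": 45, "Ben": 72, "Carla": 58}
benchmark_scores: Dict[str, List[int]] = {
    "Asha": [50, 61, 70],
    "Ben": [74, 73, 80],
    "Carla": [60, 66, 64],
}
SUPPORT_THRESHOLD = 60


def segment_by_need(scores: Dict[str, int], threshold: int) -> Dict[str, str]:
    """Diagnostic step: label each learner by their pre-programme score."""
    return {
        name: "targeted support" if score < threshold else "standard pathway"
        for name, score in scores.items()
    }


def ipsative_gain(diagnostic: int, benchmarks: List[int]) -> int:
    """Ipsative step: compare the latest score with the learner's own baseline."""
    return benchmarks[-1] - diagnostic


segments = segment_by_need(diagnostic_scores, SUPPORT_THRESHOLD)
gains = {
    name: ipsative_gain(diagnostic_scores[name], benchmark_scores[name])
    for name in diagnostic_scores
}

for name in diagnostic_scores:
    print(f"{name}: {segments[name]}, gain since baseline {gains[name]:+d}")
```

The gain is measured against each learner's own starting point, so a learner who begins with a low score can still show the largest improvement. That is the property that makes ipsative data motivating for individuals but awkward to aggregate for a cohort.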

For centres looking to scale this approach, automated diagnostic benchmarking reduces the manual burden while maintaining the analytical rigour these methods require.

Comparing assessment types: strengths, weaknesses and use cases

To make final decisions, leaders need a direct comparison of all the assessment types discussed. The table below consolidates the key information.

| Assessment type | Best used when | Compliance value | Key risk |
|---|---|---|---|
| Formative | Ongoing skill development is the priority | Provides continuous audit evidence | Inconsistency without standardisation |
| Summative | Certification or end-point evaluation is required | High; directly meets regulatory requirements | Stress and narrow preparation focus |
| Diagnostic | Learner readiness is unknown or variable | Supports differentiated provision evidence | Resource-intensive to administer |
| Benchmark | Progress reporting to stakeholders is needed | Demonstrates measurable progress over time | Can feel repetitive to learners |
| Ipsative | Professional development and growth focus | Supports personalised learning records | Limited use for group-level compliance data |

Performance-based assessments improve validity through real tasks but require clear rubrics and careful calibration to function reliably. This is a growing trend across professional education, and CIPD programmes are well placed to lead on it.

The shift towards authentic, task-based assessment reflects a broader recognition that traditional formats alone cannot capture the full range of professional competence. Where AI is used to support reliable assessment, the evidence points to improved consistency when human oversight remains central. Equally, assessment data security is a non-negotiable consideration when adopting digital tools, particularly given GDPR obligations.

The most effective CIPD programmes do not pick one type and commit to it exclusively. They build a deliberate, documented blend that serves both learner development and regulatory accountability.

Our perspective: moving beyond the checklist

With the options compared, here is a candid view from experienced assessment leaders. The most common mistake we see is treating assessment design as a compliance exercise rather than a quality one. Centres tick the boxes, submit the evidence, and move on. But the programmes that genuinely develop capable HR professionals are the ones that treat assessment as a living system, not a fixed structure.

Calibration sessions, regular review of marking decisions, and actively seeking candidate feedback are not optional extras. They are the mechanisms that keep your assessment valid over time. Without them, even a well-designed blend of formative and summative methods will drift.

Automation and AI have a real role to play here, but only when they serve professional judgement rather than replace it. The risk is not that AI marks badly. The risk is that centres stop questioning whether the marks are right. Responsible use of tools like those discussed in AI ethics for assessment keeps human accountability at the centre of the process, which is exactly where it belongs.

Enhance your assessment strategy with EduMark

If the comparison above has highlighted gaps in your current approach, EduMark.ai is built to help CIPD training centres close them.

EduMark's AI-assisted marking supports formative, summative, and blended assessment workflows, delivering structured feedback, confidence checks, and transparent marking rationale directly into Word documents. The platform is designed for teams that need to scale without sacrificing consistency or compliance. For centres exploring automated assessment for CIPD, EduMark offers a practical, GDPR-compliant route to faster turnaround times and stronger audit trails. Book a demo to see how it fits your programme.

Frequently asked questions

What is the main difference between formative and summative assessments?

Formative assessments track ongoing progress and adjust teaching in real time, while summative assessments measure achievement at the conclusion of a learning period and are typically used for certification decisions.

How do diagnostic assessments benefit CIPD training programmes?

Diagnostic assessments identify skills and knowledge gaps before instruction begins, enabling tutors to tailor their approach and improve outcomes from the very first session.

What are the limitations of ipsative assessments?

Ipsative assessment compares a learner only with their own earlier performance. It tracks individual progress effectively, but the results are hard to aggregate for group-level benchmarking or compliance reporting.

Why are benchmark assessments important for compliance?

Benchmark assessments use periodic checks to demonstrate measurable learner progress over time, providing the kind of documented evidence that satisfies regulatory and organisational reporting requirements.

Is it effective to use multiple types of educational assessments?

Yes. Combining assessment types strengthens overall validity, supports a wider range of learner needs, and produces a more robust evidence base for compliance purposes in CIPD programmes.