Many CIPD educators remain hesitant about automated assessment and continue to rely on traditional marking methods. This hesitation stems from valid concerns about fairness, compliance, and maintaining the human touch in professional development evaluation. Yet studies of well-implemented automated systems report high consistency, with correlations against expert grades comparable to the agreement rates between human markers, alongside much shorter turnaround times. This guide explores why automated assessment has become essential for CIPD training centres, examining how AI-assisted marking enhances integrity, accelerates feedback, and supports compliance without compromising educational quality.

Table of Contents

- Key takeaways

- Understanding automated assessment and its role in CIPD training

- Benefits of automated assessment for educators and learners

- Addressing challenges and maintaining assessment integrity

- Implementing automated assessment in CIPD training centres: practical tips

- Discover AI-assisted marking solutions for your CIPD training centre

- Frequently asked questions about automated assessment

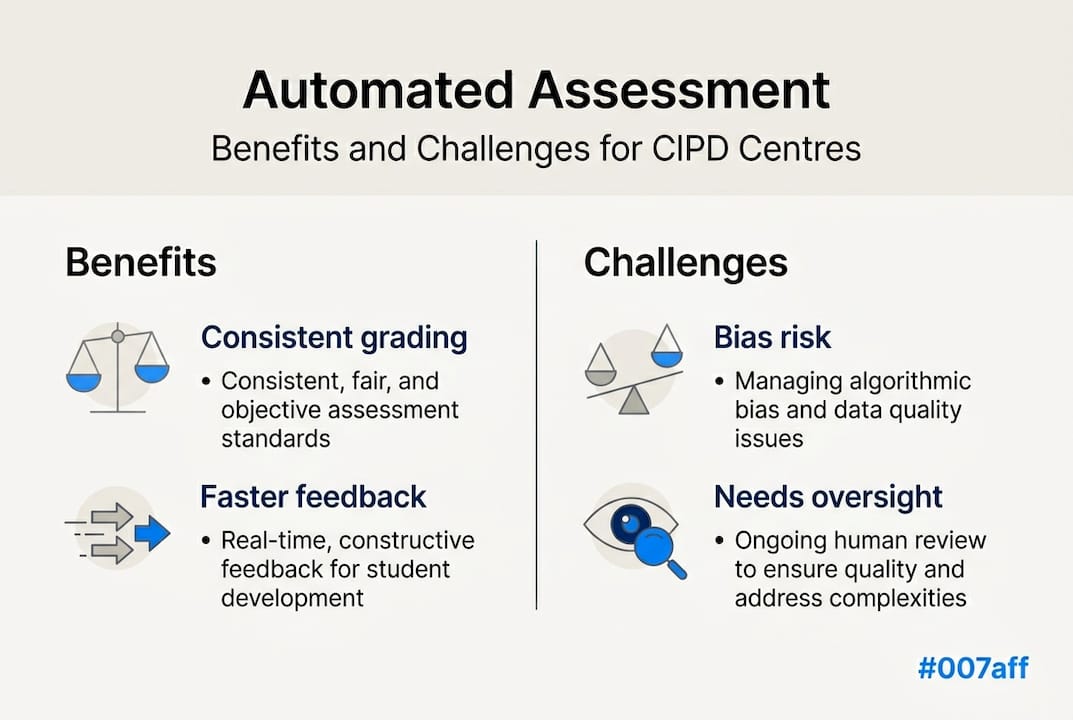

Key Takeaways

| Point | Details |
|---|---|
| Consistent grading | Automated assessment can achieve agreement with expert grades comparable to that between human markers, reducing variation caused by fatigue or bias. |
| Instant feedback | Immediate feedback accelerates learner understanding and engagement by guiding improvements quickly. |
| Audit trails | Comprehensive audit trails and clear rubrics increase transparency and support quality assurance in automated marking. |
| Scenario-based assessments | Scenario-driven tasks connect CIPD competencies to real workplace challenges, improving assessment validity. |
| Hybrid human-AI | Hybrid approaches combine algorithmic consistency with human judgement to mitigate bias and preserve professional oversight. |

Understanding automated assessment and its role in CIPD training

Automated assessment refers to technology-driven evaluation systems that analyse learner submissions against predefined criteria, generating marks and feedback with minimal human intervention. For CIPD training centres, this typically involves uploading assignments to a platform whose scoring models assess how well learners demonstrate competencies, theoretical understanding, and practical application of people development principles.

Three primary methodologies shape AI assessments in professional education contexts. Rubric-based systems evaluate submissions against detailed marking schemes, assigning scores to specific criteria such as evidence quality, critical analysis depth, and professional language use. Scenario-based assessments present learners with realistic workplace situations, measuring their ability to apply CIPD frameworks to practical challenges. Hybrid human-AI approaches combine algorithmic consistency with marker expertise, flagging submissions that require nuanced judgement whilst automating straightforward evaluations.
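
To make the rubric-based approach concrete, the following Python sketch shows one common way to combine per-criterion scores into a single weighted mark. The `Criterion` class, the `weighted_percentage` function, and the criterion names and weights are hypothetical illustrations based on the criteria mentioned above, not the method of any particular platform; in practice the per-criterion points would come from the AI model or a human marker.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float    # relative importance of the criterion (hypothetical values)
    max_points: int  # top of the scale each criterion is scored on


def weighted_percentage(rubric: list[Criterion], points: dict[str, int]) -> float:
    """Combine per-criterion points into one weighted percentage mark."""
    total_weight = sum(c.weight for c in rubric)
    score = 0.0
    for c in rubric:
        awarded = points[c.name]
        if not 0 <= awarded <= c.max_points:
            raise ValueError(f"{c.name}: {awarded} is outside 0..{c.max_points}")
        # Normalise each criterion to 0..1 before applying its weight.
        score += c.weight * awarded / c.max_points
    return round(100 * score / total_weight, 1)


# Hypothetical rubric using the criteria named in the text.
RUBRIC = [
    Criterion("evidence_quality", weight=0.4, max_points=5),
    Criterion("critical_analysis", weight=0.4, max_points=5),
    Criterion("professional_language", weight=0.2, max_points=5),
]

mark = weighted_percentage(
    RUBRIC,
    {"evidence_quality": 4, "critical_analysis": 3, "professional_language": 5},
)
print(mark)
```

Normalising each criterion before weighting means criteria scored on different scales can still be combined, and the weights themselves become an explicit, reviewable part of the rubric.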

The scenario-based methodology proves particularly valuable for CIPD qualifications because competency demonstration requires contextual understanding rather than rote memorisation. When learners analyse a fictional redundancy situation or design a performance management intervention, AI can assess whether their responses demonstrate appropriate application of employment law, ethical considerations, and evidence-based practice. This mirrors how qualified HR professionals approach real workplace challenges.

Transparency remains crucial for building trust in automated systems. Effective platforms provide detailed rubrics visible to both learners and markers, explaining exactly which criteria the AI evaluates and how different response qualities map to grade boundaries. When learners understand assessment logic, they can target their efforts more effectively whilst markers gain confidence that technology supports rather than replaces professional judgement.
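
Publishing grade boundaries is one way to make the mapping from marks to outcomes visible. The sketch below assumes hypothetical band names (`Refer`, `Pass`, `Merit`, `Distinction`) and thresholds; substitute the grading scheme your awarding body actually uses. It relies on Python's standard `bisect` module so that a mark exactly on a boundary falls into the higher band.

```python
from bisect import bisect_right

# Hypothetical scheme: each threshold is the lowest mark that earns the next band.
BOUNDARIES = [40, 60, 70]
BANDS = ["Refer", "Pass", "Merit", "Distinction"]


def band_for(mark: float) -> str:
    """Map a 0-100 mark to a band; a mark equal to a boundary goes to the higher band."""
    if not 0 <= mark <= 100:
        raise ValueError("mark must be between 0 and 100")
    return BANDS[bisect_right(BOUNDARIES, mark)]


for m in (39.5, 40, 65, 70):
    print(m, band_for(m))
```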

Key components of effective automated assessment for CIPD contexts include:

- Clear rubric alignment with CIPD assessment criteria and learning outcomes

- Confidence scoring that flags borderline or complex submissions for human review

- Detailed feedback generation explaining strengths and development areas

- Reference checking capabilities to verify citations and detect potential plagiarism

- Audit trails documenting all automated decisions for quality assurance purposes
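
As an illustration of the audit-trail component, the sketch below records each automated decision as a JSON-serialisable entry and chains entries together with SHA-256 hashes, so that later edits to earlier records can be detected. Hash chaining is an assumption chosen for illustration rather than a requirement of CIPD or any platform, and the function and field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def append_audit_entry(log: list[dict], submission_id: str, decision: dict) -> dict:
    """Append a record whose hash also covers the previous record's hash."""
    entry = {
        "submission_id": submission_id,
        "decision": decision,  # e.g. mark, band, confidence, model version
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    entry["hash"] = hashlib.sha256(payload).hexdigest()
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; an edited or reordered record breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash:
            return False
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if hashlib.sha256(payload).hexdigest() != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


audit_log: list[dict] = []
append_audit_entry(audit_log, "S-001", {"mark": 76.0, "band": "Merit", "confidence": 0.91})
print(verify_chain(audit_log))
```

Storing the model version and confidence inside `decision` would let quality assurance teams reconstruct why a mark was issued.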

For centres exploring AI-assisted CIPD assignment marking, understanding these foundational elements helps identify platforms that genuinely enhance rather than merely automate the assessment process.

Benefits of automated assessment for educators and learners

Consistency is perhaps the most compelling advantage of automated assessment. Human markers, regardless of experience, show natural variation in their judgements due to fatigue, mood, and unconscious bias. Some studies report correlations of between 0.85 and 0.95 between automated systems and expert human grades, matching or exceeding typical inter-rater agreement amongst experienced markers. This consistency is especially valuable for large cohorts, where multiple markers might otherwise introduce unwanted grade variation.

Bias reduction extends beyond mere consistency. Traditional marking can inadvertently favour certain writing styles, penalise non-native speakers, or reflect marker preconceptions about learner ability based on previous submissions. Well-designed AI systems can evaluate submissions against defined criteria without access to learner identity or history, so each piece is judged purely on its demonstrated merit. This fairness matters particularly for CIPD qualifications, where diverse professional backgrounds enrich learning but shouldn't influence assessment outcomes.
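
One simple way to keep identity and history away from the marking step is to strip those fields before a submission is scored and replace them with a random token that only the centre can map back. The field names and the `blind_for_marking` function below are hypothetical, and this sketch removes only structured fields: a learner's name written inside the essay text would need separate redaction.

```python
import secrets

# Hypothetical field names: adapt to the structure of your own submission records.
IDENTITY_FIELDS = {"learner_id", "name", "email", "previous_marks"}


def blind_for_marking(submission: dict, key_map: dict[str, str]) -> dict:
    """Return a copy of the submission without identity or history fields.

    A random token replaces the learner ID; key_map (held by the centre,
    never sent to the marker) links the token back to the learner.
    """
    token = secrets.token_hex(8)
    key_map[token] = submission["learner_id"]
    blinded = {k: v for k, v in submission.items() if k not in IDENTITY_FIELDS}
    blinded["token"] = token
    return blinded


keys: dict[str, str] = {}
original = {"learner_id": "L042", "name": "A. Learner", "email": "a@example.com",
            "previous_marks": [58, 64], "text": "Essay text goes here."}
print(blind_for_marking(original, keys))
```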

The speed advantage can transform the learner experience. Some studies suggest that instant feedback improves outcomes by up to 30%, because learners can apply insights to subsequent work immediately rather than waiting weeks for marked assignments. For working professionals balancing CIPD study with demanding careers, rapid turnaround means maintaining momentum and addressing misconceptions before they become entrenched.

"AI-powered assessment tools provide immediate, personalised feedback that helps students identify strengths and areas for improvement in real-time, fostering a more dynamic and responsive learning environment." This observation from educational technology research captures how automation can shift assessment from a summative judgement to a formative development tool.

Engagement tends to increase when learners receive timely, detailed feedback. Traditional marking often provides only brief comments because of time constraints, leaving learners uncertain about how to improve. Automated systems can generate extensive feedback that addresses multiple criteria, explains why responses met or missed standards, and suggests specific development strategies. This richness helps learners take ownership of their progression through CIPD qualifications.

Data analytics are an often-overlooked benefit of CIPD marking solutions. Automated platforms generate dashboards showing class performance patterns, common misconceptions, and individual learner trajectories over time. Educators can identify which topics require additional teaching input, which learners need targeted support, and whether assessment tasks effectively measure intended outcomes. This intelligence supports continuous improvement of both teaching and assessment design.
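
A minimal version of the "common misconceptions" view can be computed by averaging each rubric criterion across the cohort and listing the lowest. The sketch assumes each learner's results arrive as a dictionary mapping criterion names to the fraction of available points earned (0 to 1); the function and criterion names are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def weakest_criteria(results: list[dict[str, float]], n: int = 3) -> list[tuple[str, float]]:
    """Return the n rubric criteria with the lowest cohort average.

    Each dict in results maps criterion name -> fraction of available points (0..1)
    for one learner.
    """
    by_criterion: dict[str, list[float]] = defaultdict(list)
    for learner in results:
        for criterion, score in learner.items():
            by_criterion[criterion].append(score)
    averages = {c: mean(scores) for c, scores in by_criterion.items()}
    # Sort ascending by average so the weakest criteria come first.
    return sorted(averages.items(), key=lambda item: item[1])[:n]


cohort = [
    {"evidence_quality": 0.8, "critical_analysis": 0.4, "employment_law": 0.6},
    {"evidence_quality": 0.6, "critical_analysis": 0.5, "employment_law": 0.7},
]
print(weakest_criteria(cohort, n=2))
```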

The impact on learning outcomes extends beyond grades. When learners receive consistent, unbiased, timely feedback with clear improvement guidance, they develop stronger self-regulation skills and deeper understanding of professional standards. These metacognitive benefits prove especially valuable for CIPD qualifications that aim to develop reflective practitioners capable of continuous professional development throughout their careers.

Addressing challenges and maintaining assessment integrity

Critics rightly highlight potential pitfalls of automated assessment. Concerns include algorithmic bias, over-reliance reducing critical thinking, and systems failing to recognise innovative responses that deviate from training data patterns. These risks demand serious consideration rather than dismissal, particularly for professional qualifications where assessment integrity directly impacts workforce competence.

Bias can emerge when training data reflects historical inequities or when algorithms inadvertently penalise certain demographic groups. CIPD centres must scrutinise how automated systems were developed, demanding evidence of bias testing across diverse learner populations. Effective platforms provide bias auditing tools showing grade distributions by protected characteristics, enabling centres to identify and address problematic patterns before they affect learner outcomes.
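
The sketch below shows the kind of check a bias audit might run: pass rates per group, each compared with the best-performing group's rate. The 0.8 default threshold borrows the "four-fifths" rule of thumb from US employment-selection guidance and is an assumption here, best treated as a prompt for investigation rather than a legal test; small groups can produce large swings by chance. Group labels and the function name are hypothetical.

```python
from collections import Counter


def pass_rate_audit(records: list[tuple[str, bool]], threshold: float = 0.8) -> dict[str, dict]:
    """records holds (group label, passed) pairs for one assessment.

    Each group's pass rate is divided by the highest group's rate; ratios
    below the threshold are flagged for investigation.
    """
    if not records:
        raise ValueError("no records supplied")
    totals = Counter(group for group, _ in records)
    passes = Counter(group for group, passed in records if passed)
    rates = {group: passes[group] / totals[group] for group in totals}
    best = max(rates.values())
    report = {}
    for group, rate in rates.items():
        ratio = rate / best if best > 0 else 1.0  # if nobody passed, no group is singled out
        report[group] = {"pass_rate": rate, "ratio_to_best": ratio, "flag": ratio < threshold}
    return report


sample = ([("Group A", True)] * 8 + [("Group A", False)] * 2
          + [("Group B", True)] * 5 + [("Group B", False)] * 5)
print(pass_rate_audit(sample))
```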

Over-reliance represents another legitimate concern. If learners discover they can achieve passing grades through formulaic responses that satisfy AI criteria without demonstrating genuine understanding, assessment validity collapses. Similarly, if markers defer entirely to automated judgements without applying professional expertise to complex cases, the human oversight that ensures contextual appropriateness disappears.

Maintaining critical thinking whilst using automation requires deliberate design choices:

- Incorporate open-ended questions requiring synthesis and evaluation rather than recall

- Use scenario-based assessments demanding application of multiple frameworks to novel situations

- Implement confidence thresholds triggering human review when AI uncertainty exceeds acceptable limits

- Require markers to verify a sample of automated grades, comparing AI rationale with their professional judgement

- Regularly update assessment tasks to prevent pattern recognition replacing genuine competency demonstration

Transparency builds accountability and trust. When CIPD marking platforms provide detailed rationales explaining why specific grades were assigned, both learners and quality assurance teams can evaluate whether decisions reflect sound professional judgement. This openness also helps identify when AI misinterprets responses, enabling system refinement over time.

Pro Tip: Establish clear escalation protocols defining which submission characteristics automatically trigger human review. Factors might include confidence scores below 0.75, detection of potential plagiarism, exceptional performance warranting distinction consideration, or learner appeals requesting marker verification. These protocols ensure automation enhances rather than replaces professional expertise.
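
The escalation rules in this tip translate naturally into a function that returns every reason a submission needs human review. The 0.75 threshold comes from the tip above; the `MarkedSubmission` fields, including the assumption that the marking model reports a confidence between 0 and 1, are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MarkedSubmission:
    submission_id: str
    confidence: float              # assumed: model-reported confidence between 0 and 1
    plagiarism_flag: bool = False
    provisional_band: str = "Pass"
    appeal_requested: bool = False


def escalation_reasons(s: MarkedSubmission, min_confidence: float = 0.75) -> list[str]:
    """List every rule that sends this submission to a human marker (empty list = none)."""
    reasons = []
    if s.confidence < min_confidence:
        reasons.append(f"confidence {s.confidence:.2f} below {min_confidence}")
    if s.plagiarism_flag:
        reasons.append("potential plagiarism detected")
    if s.provisional_band == "Distinction":
        reasons.append("distinction consideration")
    if s.appeal_requested:
        reasons.append("learner appeal")
    return reasons


print(escalation_reasons(MarkedSubmission("S-014", confidence=0.62, plagiarism_flag=True)))
```

Returning a list of reasons, rather than a single yes/no flag, gives reviewers and auditors the rationale for each escalation.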

Ethical AI use requires ongoing vigilance. Training centres should establish governance frameworks specifying acceptable automation boundaries, data protection measures, and regular auditing schedules. GDPR compliance matters particularly for CIPD contexts where assignments often reference real workplace situations potentially containing sensitive personal data. Platforms must demonstrate robust anonymisation, secure storage, and clear data retention policies aligned with regulatory requirements.
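
Retention policies are easier to enforce when they are checked automatically. This sketch, assuming each stored record carries an `id` and a `completed_on` date, lists records whose retention period has expired so they can be deleted or anonymised. The retention period itself is a policy decision for the centre, and the function name is hypothetical.

```python
from datetime import date, timedelta


def records_past_retention(records: list[dict], retention_days: int, today: date) -> list[str]:
    """Return IDs of records whose retention period has expired.

    Each record is assumed to hold an "id" and a "completed_on" date; the
    retention period is a policy choice for the centre, not a fixed rule.
    """
    cutoff = today - timedelta(days=retention_days)
    return [r["id"] for r in records if r["completed_on"] < cutoff]


stored = [
    {"id": "S-001", "completed_on": date(2023, 1, 15)},
    {"id": "S-002", "completed_on": date(2024, 6, 1)},
]
print(records_past_retention(stored, retention_days=365, today=date(2024, 7, 1)))
```

Passing `today` explicitly, rather than reading the clock inside the function, makes the check easy to test and to re-run for a given audit date.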

Implementing automated assessment in CIPD training centres: practical tips

Deciding whether automated assessment suits your centre requires honest evaluation of current marking practices and future aspirations. Consider this comparison:

| Aspect | Manual marking | Automated assessment |
|---|---|---|
| Turnaround time | 2-4 weeks typical | Minutes to hours |
| Consistency | Varies by marker fatigue and experience | Uniform application of criteria |
| Feedback detail | Limited by time constraints | Comprehensive coverage of all criteria |
| Scalability | Requires proportional marker hours | Handles volume without linear cost increase |
| Bias risk | Unconscious human bias possible | Algorithmic bias requires monitoring |
| CIPD compliance | Depends on marker expertise | Built into rubric design |
| Cost structure | Fixed hourly rates | Variable per-submission pricing |
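
The cost rows can be compared with some simple arithmetic. The sketch below contrasts fully manual marking with a hybrid model in which every submission is machine-marked and a fraction is also reviewed by a human. All parameters (hourly rate, minutes per script, per-submission price, review fraction) are hypothetical inputs to replace with your own figures.

```python
def marking_costs(n_submissions: int, hourly_rate: float, minutes_per_manual_mark: float,
                  price_per_submission: float, review_fraction: float,
                  minutes_per_review: float) -> dict[str, float]:
    """Compare fully manual marking with a hybrid model (all machine-marked, some human-reviewed)."""
    manual = n_submissions * minutes_per_manual_mark / 60 * hourly_rate
    machine = n_submissions * price_per_submission
    # Human review time is only spent on the fraction of submissions that are escalated.
    review = n_submissions * review_fraction * minutes_per_review / 60 * hourly_rate
    return {"manual": round(manual, 2), "hybrid": round(machine + review, 2)}


# Hypothetical figures only; substitute your centre's own rates and volumes.
costs = marking_costs(n_submissions=100, hourly_rate=30.0, minutes_per_manual_mark=60,
                      price_per_submission=5.0, review_fraction=0.2, minutes_per_review=30)
print(costs)
```

With these made-up inputs the function returns 3000.0 for manual and 800.0 for hybrid marking, but the comparison is sensitive to the review fraction and per-submission price, so it is worth rerunning with real quotes.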

Selecting appropriate technology demands careful evaluation. Platforms offering AI assessment for CIPD compliance should demonstrate proven accuracy with similar qualification levels, a transparent methodology that allows quality assurance scrutiny, and integration with your existing learning management systems. Request a pilot programme in which sample assignments are assessed alongside your experienced markers, to validate correlation before committing to full implementation.

Anti-cheating measures become increasingly sophisticated with AI assistance. Scenario-based assessments resist plagiarism when each learner receives a unique workplace situation requiring original analysis. Advanced platforms detect suspicious patterns such as responses matching online resources too closely, writing style inconsistencies suggesting external assistance, or submission timestamps indicating implausible completion speeds. These safeguards protect assessment integrity whilst respecting learner privacy.
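
Similarity detection is often built on comparing overlapping word sequences. The sketch below uses word 5-gram "shingles" and Jaccard similarity, a standard near-duplicate technique, plus a crude words-per-minute check for implausible completion speeds. It is an illustration under stated assumptions, not how any specific plagiarism service works; the thresholds and function names are hypothetical, and a high score should prompt human review rather than an accusation.

```python
import re


def shingles(text: str, n: int = 5) -> set[tuple[str, ...]]:
    """Lower-cased word n-grams ("shingles") of a text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard_similarity(a: str, b: str, n: int = 5) -> float:
    """Share of distinct shingles the two texts have in common (0 = none, 1 = identical sets)."""
    sa, sb = shingles(a, n), shingles(b, n)
    if not sa or not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


def implausibly_fast(word_count: int, minutes_spent: float,
                     max_words_per_minute: float = 40.0) -> bool:
    """Flag submissions composed faster than a (hypothetical) plausible writing rate."""
    if minutes_spent <= 0:
        return True
    return word_count / minutes_spent > max_words_per_minute


source = "Line managers should consult affected staff before announcing any redundancy plan."
essay = "In my view line managers should consult affected staff before announcing any redundancy plan."
print(round(jaccard_similarity(source, essay), 2), implausibly_fast(3000, 15))
```

Because Jaccard similarity shrinks as an essay grows longer than the matched source, checkers may also measure containment: the share of the source's shingles that appear in the essay.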

Performance dashboards transform how centres monitor quality. By generating class-level analytics, automated systems reveal which learning outcomes learners consistently struggle with, whether particular assignment types effectively measure competency, and how individual progression compares with cohort norms. This intelligence supports targeted interventions, curriculum refinement, and evidence-based conversations with external verifiers about assessment effectiveness.
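
Comparing an individual with cohort norms can be as simple as a standard score (z-score): how many cohort standard deviations a learner's mark sits above or below the cohort mean. The function name below is hypothetical, and the sketch uses the sample standard deviation from Python's `statistics` module.

```python
from statistics import mean, stdev


def cohort_z_score(learner_mark: float, cohort_marks: list[float]) -> float:
    """Cohort standard deviations above (+) or below (-) the cohort mean."""
    if len(cohort_marks) < 2:
        raise ValueError("need at least two cohort marks")
    spread = stdev(cohort_marks)
    if spread == 0:
        return 0.0  # every learner scored the same, so there is no spread to compare against
    return (learner_mark - mean(cohort_marks)) / spread


print(cohort_z_score(70, [50, 60, 70]))
```

Tracking the same learner's score across successive assignments shows whether they are moving towards or away from the cohort average.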

Implementation success requires stakeholder engagement. Markers need training on interpreting AI confidence scores, understanding when to override automated judgements, and using feedback generation tools effectively. Learners benefit from clear communication about how automation enhances fairness and feedback quality whilst maintaining human oversight for complex decisions. Senior leadership must champion the cultural shift from viewing assessment as purely summative judgement toward recognising its formative development potential.

Pro Tip: Start with lower-stakes formative assessments before applying automation to summative examinations. This approach allows markers and learners to build confidence in the technology whilst identifying any rubric refinements needed before high-stakes decisions depend on automated judgements. Monitor correlation between AI and human grades closely during this pilot phase.
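
During the pilot, a short discrepancy report helps markers see where the AI and human grades diverge. The sketch assumes a mapping from submission IDs to (AI mark, human mark) pairs and a hypothetical five-mark tolerance. It reports the mean absolute difference, the mean signed difference (whether the AI marks more or less generously on average), and the submissions worth discussing at moderation; the function name is hypothetical.

```python
def pilot_discrepancies(pairs: dict[str, tuple[float, float]], tolerance: float = 5.0) -> dict:
    """pairs maps submission ID -> (AI mark, human mark) for the same submission."""
    if not pairs:
        raise ValueError("no marked pairs supplied")
    diffs = {sid: ai - human for sid, (ai, human) in pairs.items()}
    n = len(diffs)
    return {
        "mean_abs_diff": sum(abs(d) for d in diffs.values()) / n,
        # Positive: the AI is more generous than human markers on average.
        "mean_signed_diff": sum(diffs.values()) / n,
        "needs_discussion": sorted(sid for sid, d in diffs.items() if abs(d) > tolerance),
    }


print(pilot_discrepancies({"S1": (70, 68), "S2": (55, 63), "S3": (80, 80)}))
```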

Ongoing professional development ensures your team maximises automation benefits. Consider CPD upskilling courses focused on AI literacy, assessment design for automated evaluation, and interpreting learning analytics. As technology evolves, maintaining current knowledge helps your centre leverage new capabilities whilst avoiding common implementation pitfalls.

For centres ready to explore how automation might enhance their assessment practices, investigating platforms specifically designed for professional qualifications offers the most relevant insights. Generic educational technology often lacks the nuanced understanding of competency-based assessment that CIPD qualifications demand, making specialised solutions worth the additional investment.

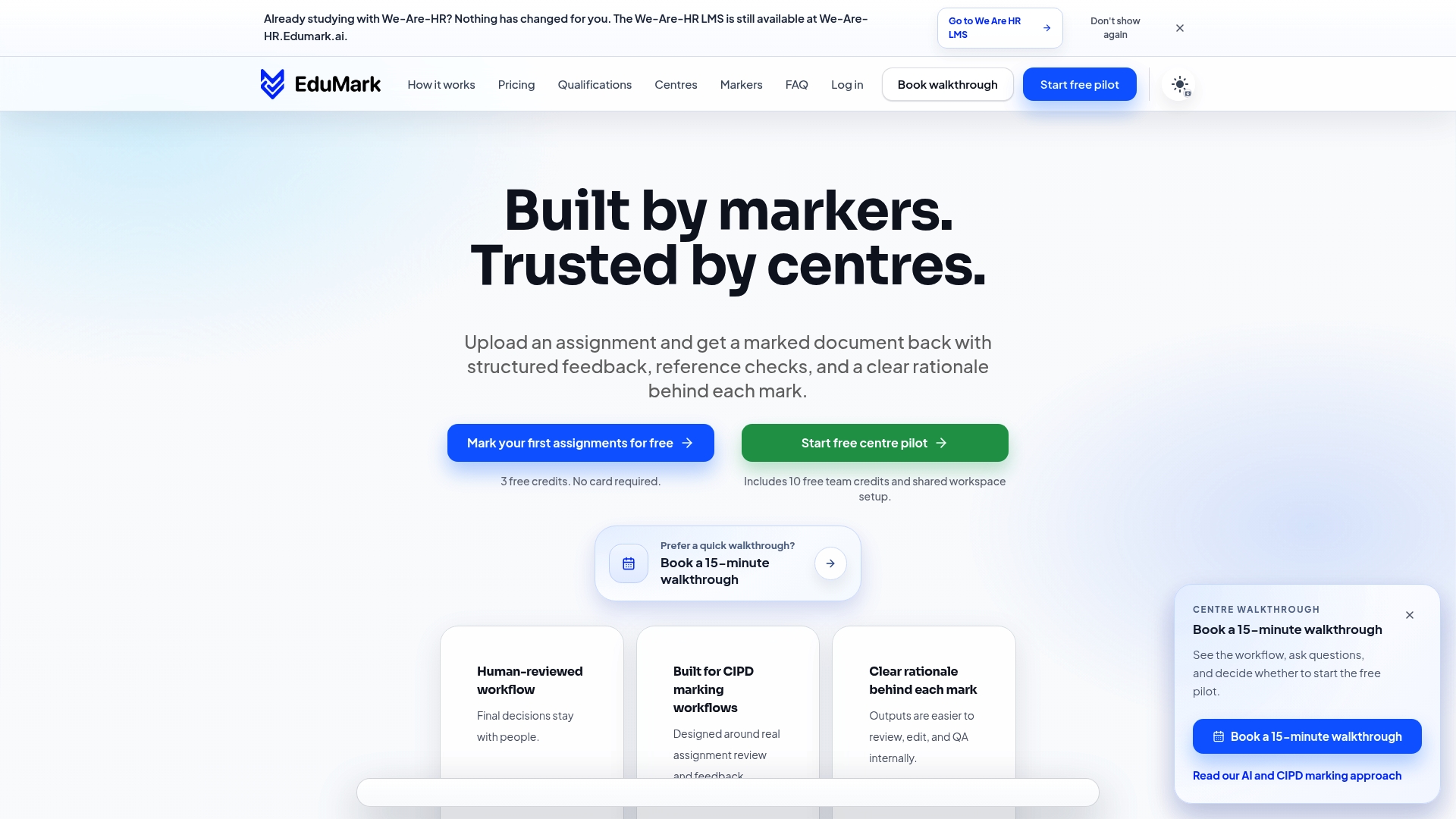

Discover AI-assisted marking solutions for your CIPD training centre

EduMark.ai provides purpose-built technology for CIPD training centres seeking to enhance assessment efficiency without compromising quality. The platform combines sophisticated AI analysis with transparent human oversight, delivering consistent marking that accelerates feedback whilst maintaining the professional judgement essential for competency evaluation. Features include detailed rubric alignment with CIPD standards, confidence scoring that flags complex submissions for marker review, and comprehensive analytics supporting continuous improvement.

Centres using AI-assisted CIPD assignment marking report significant reductions in turnaround times alongside improved marker satisfaction and learner engagement. The platform's credit-based pricing scales with your assessment volume, avoiding the fixed costs of traditional marking whilst providing predictable budgeting. Explore how EduMark.ai might support your centre's commitment to fair, efficient, and compliant assessment practices.

Frequently asked questions about automated assessment

Can automated assessment ensure CIPD compliance?

Yes, when properly configured. Automated systems evaluate submissions against detailed rubrics mapping directly to CIPD assessment criteria and learning outcomes. Platforms designed for professional qualifications incorporate regulatory requirements into their evaluation logic, ensuring consistency with awarding body standards. Human oversight remains essential for borderline cases and appeals, but automation handles the majority of submissions whilst maintaining compliance.

How does AI prevent cheating in assessments?

AI tools can combine several detection methods: plagiarism checking against online sources and previous submissions, writing style analysis identifying inconsistencies that suggest external assistance, and scenario-based tasks requiring original application of concepts to unique situations. Together these make cheating significantly more difficult, and routing flagged cases to a human reviewer helps respect learner privacy and reduce the risk of false accusations.

What support exists for markers using automated tools?

Reputable platforms provide comprehensive training on interpreting confidence scores, understanding when to override AI judgements, and using feedback generation features effectively. Technical support addresses system issues promptly, whilst ongoing professional development helps markers stay current with evolving capabilities. Quality CIPD marking solutions treat markers as expert partners rather than attempting to replace their professional judgement.

Is human oversight still needed?

Absolutely. Automated assessment works best as a marker support tool rather than replacement. Humans must review borderline submissions, handle appeals, validate that AI rationales reflect sound professional judgement, and make final decisions on high-stakes assessments. This balanced approach combines AI consistency and speed with human contextual understanding and ethical reasoning.

How quickly can feedback be provided to learners?

Automated systems generate initial feedback within minutes of submission, though human review may add hours or days depending on confidence scores and centre protocols. Even with human verification, total turnaround typically reduces from weeks to days, dramatically improving the learning experience. Instant preliminary feedback allows learners to begin reflection immediately whilst awaiting final confirmed grades.