Delivering assessment feedback that is timely, specific, and fully compliant is one of the most persistent challenges facing CIPD training centres. As learner numbers grow and marking workloads intensify, maintaining quality without cutting corners becomes steadily harder. AI-assisted marking tools are reshaping how centres approach this challenge, but they bring compliance and oversight requirements of their own. To close performance gaps, feedback must also be actionable and aligned with the assessment rubric. This guide walks through every stage of the process, from what structured feedback actually means to integrating AI tools responsibly.

Table of Contents

- Understanding structured assessment feedback

- Gathering your requirements: Tools, rubrics, and compliance

- Step-by-step: Delivering structured feedback using the PEEL method

- Integrating AI tools: Workflow and best practices

- Common pitfalls and how to verify feedback quality

- Streamline assessment feedback in your CIPD centre

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Structure is vital | Using rubrics and frameworks ensures feedback is clear and compliant. |
| Blend human and AI | A hybrid model offers speed from AI and expertise from educators. |
| Compliance matters | Documenting requirements and human oversight is non-negotiable. |
| Transparency with learners | Always disclose AI involvement and link comments to clear criteria. |

Understanding structured assessment feedback

Before you can improve your feedback process, you need a clear picture of what good feedback looks like in a CIPD context. It is not simply a matter of writing more comments. Structure, relevance, and alignment with assessment criteria are what separate useful feedback from noise.

CIPD's own guidance is instructive here. It emphasises performance feedback in appraisals, learning evaluation using Kirkpatrick's levels, and alignment of learning and development with business objectives. The same logic applies directly to assessment feedback: every comment should connect to a learning outcome or competency rather than simply describe what a learner did or did not do.

Structured feedback has several defining characteristics:

- Clarity: Comments are written in plain language, free from jargon that could confuse learners.

- Relevance: Each point maps directly to a specific assessment criterion or rubric descriptor.

- Actionability: Feedback tells learners what to do differently, not just what went wrong.

- Timeliness: Feedback is returned within a timeframe that allows learners to act on it.

- Compliance: Comments are documented, consistent, and auditable.

Generic feedback such as "good effort" or "needs more detail" fails on almost every one of these measures. Criterion-referenced feedback, by contrast, anchors every comment to a specific standard. In regulated qualifications this matters enormously, because feedback has to stand up when it is moderated, verified, or challenged.

"Feedback that is not linked to clear criteria leaves learners guessing and assessors exposed to challenge. Structure is not a luxury; it is a professional obligation."

For a deeper grounding in the principles, the evidence-based feedback guidance from the New South Wales Centre for Education Statistics and Evaluation offers a practical framework that translates well into CIPD settings. You can also explore effective feedback principles specifically applied to CIPD qualifications.

Gathering your requirements: Tools, rubrics, and compliance

Effective feedback does not begin when you open a learner's submission. It begins well before that, when you assemble the tools, standards, and documentation that make consistent marking possible.

A clear rubric is the single most important asset in your toolkit. Without one, marking becomes subjective and feedback becomes inconsistent. Rubric-based AI tools are favoured for compliance in CIPD centres precisely because they produce structured, criterion-referenced feedback and reduce subjectivity. The same principle applies to human marking.

Before any marking begins, your centre should have the following in place:

| Requirement | Purpose | Notes |
|---|---|---|
| Assessment rubric | Anchors all feedback to criteria | Must be shared with learners in advance |
| Fair marking policy | Ensures consistency across assessors | Should include moderation procedures |
| AI tool configuration | Aligns automation with rubric | Requires human review checkpoint |
| GDPR compliance documentation | Protects learner data | Mandatory for UK-based centres |
| Human review checkpoint | Maintains oversight | Essential for high-stakes assessments |

Documenting these requirements is not bureaucratic box-ticking. It is what protects your centre during external verification and quality audits. A thorough assessment checklist for CIPD can help you confirm every element is in place before marking begins.

Pro Tip: Build an editable digital checklist that assessors complete before opening any submission. This takes under two minutes and prevents the most common compliance oversights.
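For centres that keep this checklist in software rather than on paper, the idea can be sketched in a few lines of Python. This is a minimal illustration only: `PRE_MARKING_ITEMS`, `outstanding_items` and `ready_to_mark` are hypothetical names, and the item wording is paraphrased from the requirements table above rather than taken from any CIPD or EduMark tool.

```python
# Hypothetical checklist items, paraphrased from the requirements table.
PRE_MARKING_ITEMS = [
    "Assessment rubric shared with learners",
    "Fair marking policy (including moderation) in place",
    "AI tool configured against the rubric",
    "UK GDPR documentation and data processing agreement confirmed",
    "Human review checkpoint assigned",
]


def outstanding_items(completed: set[str]) -> list[str]:
    """Return checklist items not yet confirmed, in checklist order."""
    return [item for item in PRE_MARKING_ITEMS if item not in completed]


def ready_to_mark(completed: set[str]) -> bool:
    """Marking should start only when nothing is outstanding."""
    return not outstanding_items(completed)


confirmed = {"Assessment rubric shared with learners"}
for item in outstanding_items(confirmed):
    print("Still to confirm:", item)
```

An assessor ticks items off as they are confirmed, and the submission stays closed until `ready_to_mark` returns `True`.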

Step-by-step: Delivering structured feedback using the PEEL method

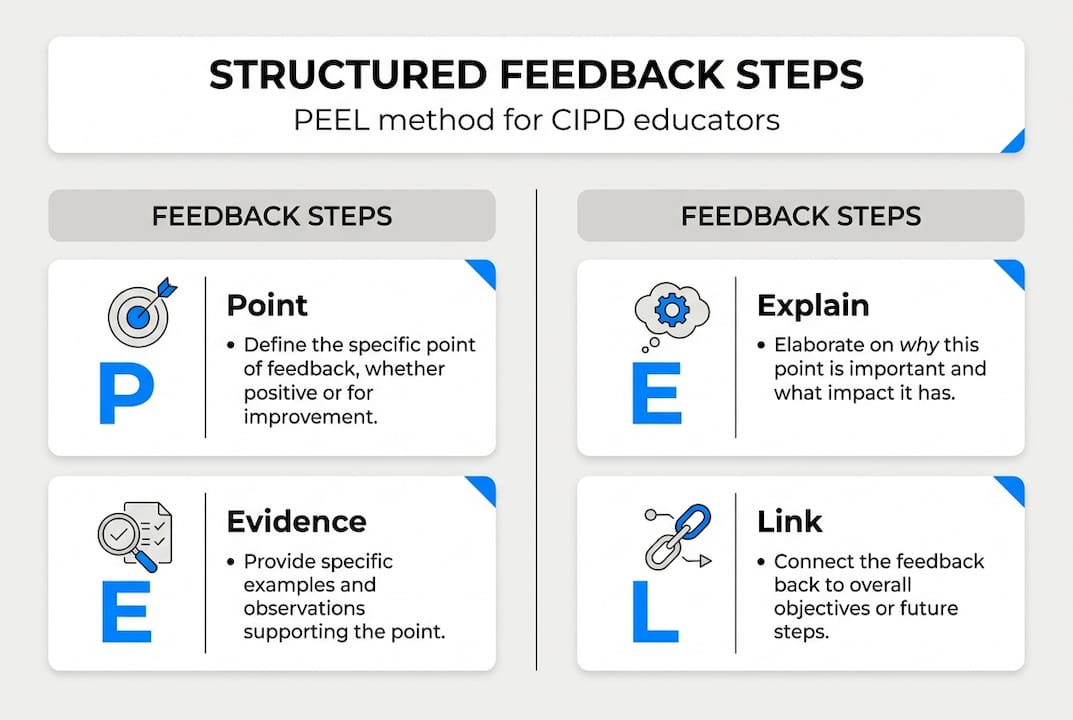

Once your requirements are assembled, you need a reliable framework for writing the feedback itself. The PEEL method (Point, Evidence, Explanation, Link), widely used in appraisal and professional assessment settings, provides exactly that.

Here is how to apply it step by step:

- Point: State clearly what the learner has done well or what needs improvement. Be specific. "Your analysis of the recruitment process demonstrates sound understanding of selection criteria" is a point. "Good work" is not.

- Evidence: Reference the rubric or the learner's own submission to support your point. "This is evidenced in your comparison of structured versus unstructured interviews in section two" grounds the feedback in something concrete.

- Explanation: Clarify why this matters. "Demonstrating this understanding is central to the 5CO02 unit outcome on evidence-based practice" connects the comment to the qualification framework.

- Link: Suggest a specific next step. "To strengthen this further, consider incorporating data from your own organisation's selection outcomes" gives the learner something actionable to do.
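To make the four-part structure concrete, the Python sketch below models a PEEL comment as a record and refuses to render it if any step is empty. The `PeelComment` class and its field names are hypothetical illustrations, not part of any CIPD or EduMark tooling; the sample text reuses the examples above.

```python
from dataclasses import dataclass, fields


@dataclass
class PeelComment:
    """One feedback comment in Point, Evidence, Explanation, Link order (hypothetical model)."""
    point: str
    evidence: str
    explanation: str
    link: str

    def missing_parts(self) -> list[str]:
        # An empty or whitespace-only field counts as a skipped PEEL step.
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def render(self) -> str:
        missing = self.missing_parts()
        if missing:
            raise ValueError(f"PEEL comment is incomplete; missing: {', '.join(missing)}")
        # Join the four steps into one paragraph for the learner.
        return " ".join(
            part.strip() for part in (self.point, self.evidence, self.explanation, self.link)
        )


comment = PeelComment(
    point="Your analysis of the recruitment process demonstrates sound understanding of selection criteria.",
    evidence="This is evidenced in your comparison of structured versus unstructured interviews in section two.",
    explanation="Demonstrating this understanding is central to the 5CO02 unit outcome on evidence-based practice.",
    link="To strengthen this further, consider incorporating data from your own organisation's selection outcomes.",
)
print(comment.render())
```

Raising an error on a missing step, rather than silently rendering a partial comment, mirrors the aim of the PEEL method: no step gets skipped under time pressure.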

In a hybrid workflow, AI tools can draft the Evidence and Explanation steps by cross-referencing submissions against rubric descriptors. The human assessor then confirms the Point and writes the Link, which requires contextual judgement that AI cannot reliably replicate. This division of labour is both efficient and compliant, provided the assessor also checks the AI-drafted steps before release. For more on applying this in practice, see effective feedback steps.

Pro Tip: Draft your feedback in four labelled blocks (P, E, E, L) before writing the final comment. This prevents you from accidentally skipping a step under time pressure.

Integrating AI tools: Workflow and best practices

AI-assisted marking is no longer experimental, and the evidence base is growing. Survey data indicate that AI tools save educators an average of 5.9 hours per week, and 57% of teachers report improved feedback quality when using them. That is a meaningful efficiency gain for any centre managing large cohorts.

However, the hybrid human-AI model is considered optimal for expert assessment contexts. AI excels at scale and timeliness but lacks the contextual nuance required for complex professional qualifications like CIPD. It cannot read between the lines of a learner's organisational context or weigh up mitigating circumstances.

Here is a comparison of the three main approaches:

| Approach | Speed | Compliance risk | Quality assurance |
|---|---|---|---|
| Pure AI marking | Very fast | High without oversight | Inconsistent on nuance |
| Human-only marking | Slower | Low | High but resource-intensive |
| Hybrid model | Fast | Low with proper workflow | High with human sign-off |

Best practices for integrating AI into your marking workflow include:

- Always include a human review stage before feedback is released to learners.

- Use only UK GDPR-compliant tools and confirm data processing agreements are in place.

- Communicate clearly with learners about how AI is used in their assessment process.

- Retain human sign-off for any high-stakes or borderline decisions.

- Audit a sample of AI-generated feedback regularly to check for drift or inconsistency.
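The first and third practices above can be enforced in software as a release gate. The Python sketch below assumes a simple in-memory record: AI may help draft the feedback, but `release` refuses to return anything until a named assessor has signed off, and AI-assisted feedback automatically carries a disclosure note. `FeedbackDraft`, `approve`, `release` and the `AI_DISCLOSURE` wording are all hypothetical, not a real platform's API.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical wording; a centre would use its own approved disclosure text.
AI_DISCLOSURE = (
    "AI tools were used to help draft this feedback. "
    "It has been reviewed and approved by a qualified assessor."
)


@dataclass
class FeedbackDraft:
    """Hypothetical record for one submission's feedback in a hybrid workflow."""
    learner_id: str
    text: str
    ai_assisted: bool
    reviewed_by: Optional[str] = None  # human assessor who signed off, if any


def approve(draft: FeedbackDraft, assessor: str) -> None:
    """Record human sign-off against a named assessor for the audit trail."""
    if not assessor.strip():
        raise ValueError("Sign-off must name the reviewing assessor")
    draft.reviewed_by = assessor.strip()


def release(draft: FeedbackDraft) -> str:
    """Return learner-facing feedback, or refuse if no human has signed it off."""
    if draft.reviewed_by is None:
        raise PermissionError(f"Feedback for {draft.learner_id} has not been human-reviewed")
    if draft.ai_assisted:
        # Transparency: learners are told when AI was involved.
        return f"{draft.text}\n\n{AI_DISCLOSURE}"
    return draft.text


draft = FeedbackDraft(learner_id="learner-001", text="Draft feedback text", ai_assisted=True)
try:
    release(draft)
except PermissionError as err:
    print("Blocked:", err)  # no human sign-off yet
approve(draft, "A. Assessor")
print(release(draft))
```

Making release fail loudly, instead of quietly skipping the review step, is what keeps human sign-off non-negotiable rather than optional.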

AI tools can transform CIPD marking workflows, but only when human review of AI-generated marks and feedback is treated as non-negotiable rather than optional.

Common pitfalls and how to verify feedback quality

Even well-intentioned feedback processes can fall short. Knowing where things typically go wrong is the first step to preventing it.

The most common mistakes in assessment feedback include vague comments that do not reference criteria, feedback that describes performance without suggesting improvement, and over-reliance on AI output without human review. Each of these undermines both learner development and your centre's compliance position.

"Human oversight is essential for AI feedback to avoid bias and hallucinations; transparency with students is required; AI should not be used for high-stakes final judgements."

To verify that your feedback meets the required standard, run through these practical checks before releasing any feedback:

- Does every comment reference a specific criterion or rubric descriptor?

- Is there at least one actionable suggestion per area of weakness?

- Is the language clear enough for a learner to act on without further explanation?

- Has a human assessor reviewed and approved the feedback?

- Is the learner informed about any AI involvement in the marking process?
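Most of these checks are mechanical enough to run automatically before release. The Python sketch below is a minimal illustration under assumed data shapes: `Comment`, `FeedbackPackage` and `pre_release_problems` are hypothetical, and the criterion labels in the test are placeholders. Language clarity is deliberately left out, because it still needs a human reader.

```python
from dataclasses import dataclass, field


@dataclass
class Comment:
    criterion: str          # rubric criterion or descriptor this comment addresses
    text: str
    is_weakness: bool = False
    next_step: str = ""     # actionable suggestion; expected for every weakness


@dataclass
class FeedbackPackage:
    comments: list[Comment] = field(default_factory=list)
    human_approved: bool = False
    ai_assisted: bool = False
    ai_disclosed: bool = False


def pre_release_problems(pkg: FeedbackPackage) -> list[str]:
    """Return the checklist items this package fails; an empty list means ready to release."""
    problems = []
    for i, c in enumerate(pkg.comments, start=1):
        if not c.criterion.strip():
            problems.append(f"Comment {i} does not reference a criterion")
        if c.is_weakness and not c.next_step.strip():
            problems.append(f"Comment {i} identifies a weakness without a next step")
    if not pkg.human_approved:
        problems.append("No human assessor has approved this feedback")
    if pkg.ai_assisted and not pkg.ai_disclosed:
        problems.append("AI involvement has not been disclosed to the learner")
    return problems
```

Returning a list of named problems, rather than a single pass/fail flag, tells the assessor exactly what to fix before the feedback goes out.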

Internal audits should be conducted on a sample basis each marking cycle: select a random cross-section of submissions and review the feedback against your rubric and fair marking policy. Data security in educational assessments matters here too, so any learner data processed during the audit must be handled in line with UK GDPR requirements.
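Drawing the sample can be made reproducible with a few lines of Python. This sketch assumes submissions are identified by string IDs; the 10% rate and minimum of five are illustrative defaults only, not CIPD requirements, and `audit_sample` is a hypothetical helper. Recording the seed alongside the audit lets a second reviewer regenerate exactly the same sample.

```python
import random
from typing import Optional


def audit_sample(submission_ids: list[str], percent: int = 10,
                 minimum: int = 5, seed: Optional[int] = None) -> list[str]:
    """Pick a random cross-section of submissions for internal audit.

    percent and minimum are illustrative defaults, not CIPD requirements.
    """
    if not 0 < percent <= 100:
        raise ValueError("percent must be between 1 and 100")
    n = len(submission_ids)
    # Integer ceiling of percent% of n, avoiding floating-point rounding surprises.
    size = max(minimum, (n * percent + 99) // 100)
    size = min(size, n)  # cannot sample more submissions than exist
    rng = random.Random(seed)
    return sorted(rng.sample(submission_ids, size))


ids = [f"sub-{n:03d}" for n in range(1, 121)]
print(audit_sample(ids, seed=2024))
```

The minimum guards against very small cohorts producing an audit sample too small to be meaningful.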

Transparency with learners is not optional. If AI tools have been used in marking, learners have a right to know. This should be stated clearly in your assessment information and reiterated when feedback is returned.

Streamline assessment feedback in your CIPD centre

Putting all of this into practice requires the right infrastructure. A robust feedback process is only as effective as the tools and workflows that support it.

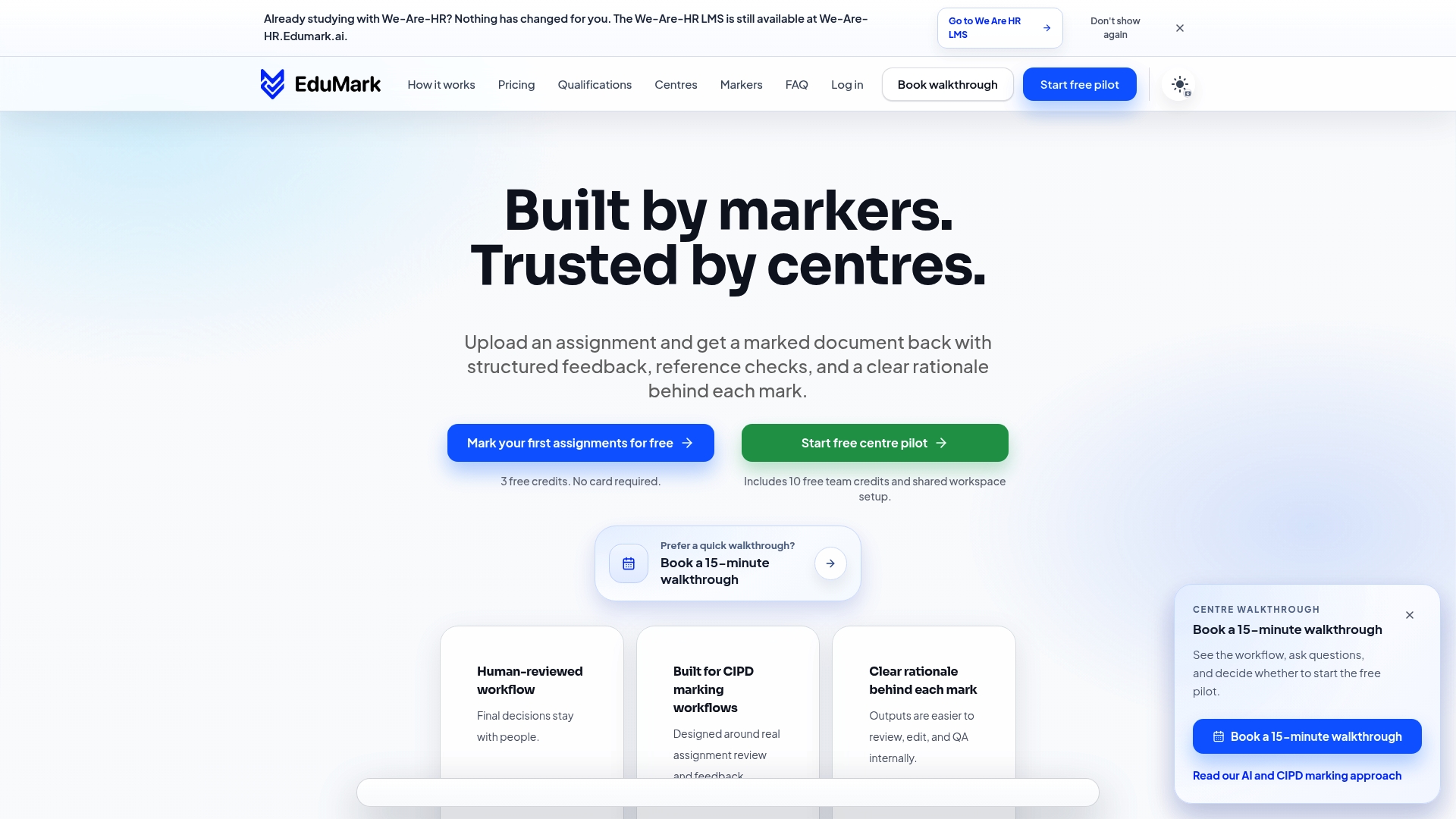

EduMark's AI-assisted CIPD feedback platform is built specifically for the challenges CIPD training centres face. It combines structured AI-generated feedback with mandatory human review checkpoints, inline comments embedded directly into Word documents, and full UK GDPR compliance. Every submission is processed transparently, with a clear rationale behind each mark. Whether you are managing ten learners or ten thousand, the workflow scales without sacrificing quality or compliance. Explore the essential CIPD checklist to see how EduMark aligns with your centre's requirements, or book a demonstration to see the platform in action.

Frequently asked questions

What makes assessment feedback effective?

Effective feedback is timely, specific, actionable, and aligned with clear criteria, helping learners identify and close gaps in their performance. Without all four elements, feedback loses its power to drive improvement.

Is AI-assisted marking allowed in CIPD assessments?

Yes, provided human oversight is maintained and AI use is communicated transparently to learners. AI should not make final judgements on high-stakes outcomes; that responsibility remains with a qualified human assessor.

How much time can AI tools save on assessment marking?

AI marking tools save educators an average of 5.9 hours per week, and 57% of teachers report improved feedback quality when using them. The time savings are likely to matter most in large cohort settings.

What are must-haves for compliant feedback?

You need a clear rubric, explicit marking criteria, transparency with learners about AI use, and a human review stage alongside any automated assistance. Rubric-based tools are specifically recommended for reducing subjectivity and maintaining compliance in CIPD centres.