Balancing speed, accuracy, and regulatory compliance in CIPD assignment review is one of the most persistent pressures facing training providers today. Institutions are expected to deliver consistent, fair grading at scale, yet CIPD's position on AI marking makes clear that full automation is not an option. The stakes are high: a poorly managed review process risks learner outcomes, assessor credibility, and regulatory standing. This guide walks through the entire assignment review lifecycle, explains where AI-assisted tools can legally and ethically support your process, and highlights the safeguards every institution must have in place.

Table of Contents

- Understanding the assignment review process for CIPD assessments

- Preparing for efficient assignment review: Tools, teams, and compliance

- Step-by-step guide to the assignment review process with AI assistance

- Common pitfalls and how to ensure compliance in assignment reviews

- What most educators miss about AI in assignment review

- Streamline your CIPD assignment review with EduMark

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI-assisted feedback only | CIPD regulations prohibit full AI marking but permit AI support for assignment feedback. |
| Human judgement required | Only qualified humans may make final grading decisions in CIPD assignment reviews. |
| Efficiency with compliance | Blending AI support and clear human oversight leads to greater speed without risking integrity. |
| Avoid common pitfalls | Document AI’s role and communicate clearly to maintain standards and build trust. |

Understanding the assignment review process for CIPD assessments

Before introducing any technology into your workflow, it helps to map out how the assignment review process actually flows. Each stage carries its own compliance obligations, and understanding the sequence makes it far easier to identify where AI support is appropriate and where human judgement is non-negotiable.

The typical lifecycle looks like this:

- Submission: Learner uploads or sends their assignment via the agreed channel.

- Initial check: Assessor or support staff confirm the submission is complete, correctly formatted, and meets word count requirements.

- Feedback stage: Detailed written feedback is prepared, identifying strengths, gaps, and areas for development.

- Grading: A qualified human assessor assigns the grade based on CIPD criteria.

- Moderation and verification: A second assessor or internal verifier reviews a sample of marked work to confirm consistency.

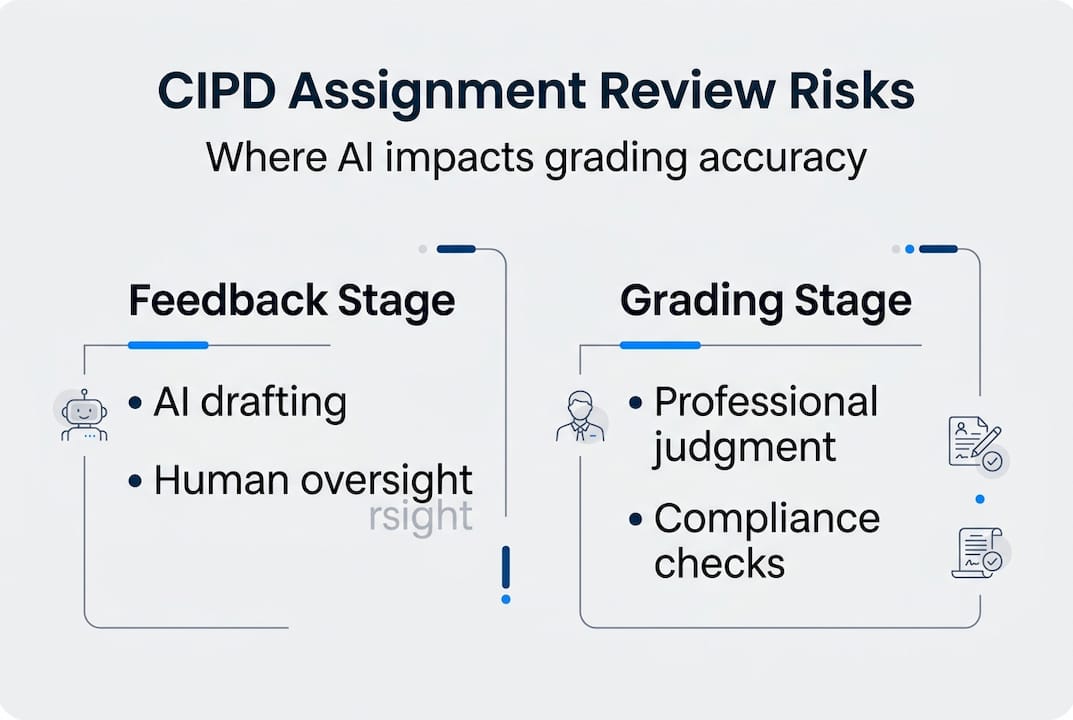

The critical boundary sits between the feedback stage and grading. CIPD does not allow AI marking, but AI-assisted feedback can be used carefully in certain steps. This distinction matters enormously. Generating draft feedback, flagging missing references, or summarising key observations are all tasks where AI tools can add genuine value. Deciding whether a learner passes or fails is not.

The risks of blurring this line are real. If an AI system influences grade outcomes without transparent human oversight, your centre may face sanctions from CIPD or fail an external quality assurance visit. The importance of human review in AI-assisted grading cannot be overstated, particularly when assessors are under time pressure.

There is also the question of grading accuracy with AI. AI tools are trained on patterns, not professional judgement. They can miss nuance, misread context, or apply feedback inconsistently across different learner cohorts. Human assessors catch these errors. AI tools, used correctly, reduce the volume of repetitive drafting work that slows assessors down.

Pro Tip: Create a written protocol that explicitly names which steps in your review process involve AI tools and which are human-only. Share this with all assessors and keep it on file for quality assurance purposes.
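A protocol like this can also be kept in a machine-readable form alongside the written version. The following is a minimal sketch, not CIPD guidance: the stage names follow the lifecycle described above, while `Role`, `REVIEW_PROTOCOL`, `ai_permitted`, and the role assigned to each stage are hypothetical choices made for illustration.

```python
from enum import Enum


class Role(Enum):
    HUMAN_ONLY = "human-only"
    AI_ASSISTED = "AI-assisted, human-reviewed"


# Hypothetical protocol. The role given to each stage is an illustrative
# assumption; your centre's written protocol is the authority.
REVIEW_PROTOCOL = {
    "submission": Role.HUMAN_ONLY,
    "initial_check": Role.AI_ASSISTED,
    "feedback": Role.AI_ASSISTED,
    "grading": Role.HUMAN_ONLY,
    "moderation": Role.HUMAN_ONLY,
}


def ai_permitted(stage: str) -> bool:
    """Return True only if the protocol lists the stage as AI-assisted."""
    try:
        return REVIEW_PROTOCOL[stage] is Role.AI_ASSISTED
    except KeyError:
        # Unknown stages are rejected rather than silently allowed.
        raise ValueError(f"Unknown review stage: {stage!r}") from None
```

Rejecting unknown stage names, rather than defaulting to "allowed", keeps the check conservative if a new step is added to the workflow before the protocol is updated.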

Preparing for efficient assignment review: Tools, teams, and compliance

With the 'what' and 'why' of assignment review covered, the next question is the 'how': setting up your review workflow effectively. Good preparation is what separates centres that manage AI-assisted review confidently from those that struggle with inconsistency and compliance gaps.

People and roles

Every effective review team needs three types of contributor:

- Human markers: Qualified assessors who make all final grading decisions and sign off on feedback.

- Compliance officer: Responsible for maintaining CIPD alignment, managing audit trails, and overseeing data protection under GDPR.

- AI support specialist: A staff member trained to configure and monitor AI-assisted tools, review AI outputs before they reach assessors, and flag anomalies.

These roles need not be held by three separate people, particularly in smaller centres, but each responsibility must be clearly assigned.

Technology: AI-assisted vs manual-only

| Feature | AI-assisted workflow | Manual-only workflow |
|---|---|---|
| Feedback drafting speed | Fast (minutes per submission) | Slow (30 to 60 minutes per submission) |
| Consistency across markers | High, with human review | Variable |
| Compliance documentation | Automated audit trails | Manual record-keeping |
| Cost per submission | Scalable credit model | Fixed staff time cost |
| Risk of integrity breach | Low with proper oversight | Low, but slower to scale |

Platforms like EduMark provide AI-assisted feedback while maintaining compliance with CIPD rules, offering inline comments, reference checks, and detailed summaries embedded directly into Word documents. Reviewing an AI grading tool comparison before committing to a platform will help you identify which features align with your centre's volume and workflow.

Documents and checklists

Before going live with any AI-assisted review process, prepare the following:

- An AI usage policy aligned with CIPD's assessment integrity guidelines

- A GDPR-compliant data processing agreement with your technology provider

- Assessor training records confirming familiarity with the tool and its limitations

- A sample moderation log template for quality assurance visits

Adopting structured feedback practices from the outset ensures that AI-generated draft feedback meets the standard your assessors expect before they begin editing.

Step-by-step guide to the assignment review process with AI assistance

With your setup complete, here's how to run a compliant, effective review using both human expertise and AI support.

1. Collect submissions. Receive assignments through a secure, auditable channel. Log submission dates and confirm receipt with learners.

2. Run initial checks. Confirm word count, referencing format, and submission completeness. AI tools can automate parts of this check, flagging missing citations or formatting issues instantly.

3. Generate AI-assisted draft feedback. Upload the assignment to your AI-assisted platform. The tool produces draft feedback highlighting strengths, development areas, and potential gaps against the marking criteria.

4. Human assessor review. The assessor reads the draft feedback critically, edits for accuracy and tone, and adds professional judgement where the AI has missed context or nuance.

5. Human grading decision. The assessor assigns the grade independently. AI tools like EduMark can aid in feedback, but human input is essential for compliance. The grade is never derived from or confirmed by the AI output.

6. Moderation. A second assessor reviews a sample of graded work. Any discrepancies are resolved before results are released.

7. Release and record. Finalised feedback and grades are sent to learners. All AI involvement is documented in the audit log.
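The initial checks in the second step do not need a sophisticated tool to get started. Below is a rough, rule-based sketch of a word count and citation pre-check. It assumes Harvard-style in-text citations such as "(Smith, 2021)"; the function name `initial_check`, the regular expression, and the word limits passed in are all hypothetical, and a flagged issue is a prompt for a human to look, not a verdict.

```python
import re

# Assumption: Harvard-style in-text citations, e.g. "(Smith, 2021)" or
# "(Smith and Jones 2020)". Other referencing styles need another pattern.
CITATION_PATTERN = re.compile(r"\([A-Z][^()]*?,?\s\d{4}[a-z]?\)")


def initial_check(text: str, min_words: int, max_words: int) -> list[str]:
    """Return a list of issues for staff to review; empty means none found."""
    issues = []
    word_count = len(text.split())
    if word_count < min_words:
        issues.append(f"Word count {word_count} is below the minimum of {min_words}")
    elif word_count > max_words:
        issues.append(f"Word count {word_count} exceeds the maximum of {max_words}")
    if not CITATION_PATTERN.search(text):
        issues.append("No in-text citations detected")
    return issues
```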

Using effective feedback strategies at step four is where assessors add the most value. AI drafts are a starting point, not a finished product.

Most institutions report at least 20% time saved when using AI for initial feedback preparation. That efficiency gain compounds quickly across high-volume cohorts. For centres processing hundreds of submissions per term, streamlining workflows with AI can meaningfully reduce assessor burnout without cutting corners.
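To see how that saving compounds, a quick estimate helps. The sketch below assumes the 20% figure applies to per-submission feedback time and uses 45 minutes per submission (the midpoint of the 30 to 60 minute manual range in the comparison table) and a hypothetical cohort of 300 submissions per term. None of these inputs are measured figures; substitute your own.

```python
def hours_saved(submissions: int, minutes_per_submission: float, saving_rate: float) -> float:
    """Estimate assessor hours saved, given a fractional saving rate (0 to 1)."""
    if not 0 <= saving_rate <= 1:
        raise ValueError("saving_rate must be between 0 and 1")
    return submissions * minutes_per_submission * saving_rate / 60


# Hypothetical inputs: 300 submissions, 45 minutes each, 20% saving.
estimate = hours_saved(submissions=300, minutes_per_submission=45, saving_rate=0.20)
print(f"Estimated hours saved per term: {estimate:.1f}")
```

With these assumed inputs the estimate comes to roughly 45 assessor hours per term.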

Pro Tip: Set a rule that no AI-generated feedback is sent to a learner without at least one full read-through by the assigned assessor. This single step prevents the majority of accuracy and tone errors.
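If your feedback passes through any in-house system before release, a rule like this can be enforced as a simple gate. This is a minimal sketch under stated assumptions: `FeedbackDraft`, its fields, and `can_release` are hypothetical names, not part of any particular platform.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FeedbackDraft:
    submission_id: str
    text: str
    ai_generated: bool
    # Name or ID of the assessor who completed a full read-through, if any.
    reviewed_by: Optional[str] = None


def can_release(draft: FeedbackDraft) -> bool:
    """AI-generated feedback is releasable only after an assessor has signed it off."""
    if not draft.ai_generated:
        return True
    return bool(draft.reviewed_by and draft.reviewed_by.strip())
```

A draft with `ai_generated=True` and no reviewer recorded is blocked; once `reviewed_by` is set to a non-blank value, it can be released.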

Common pitfalls and how to ensure compliance in assignment reviews

Even with good systems, mistakes can jeopardise compliance. Here's what to watch out for and how to stay on track.

Common compliance mistakes

- Allowing AI output to directly influence grade decisions without documented human override

- Failing to disclose AI involvement in feedback to learners when asked

- Using AI tools that store learner data outside the UK without a valid data transfer agreement

- Skipping moderation because AI-assisted feedback appears consistent

- Treating AI confidence scores as equivalent to assessor professional judgement

Documentation is your strongest protection. Every time an AI tool is used in the review process, log which tool was used, which step it supported, and which assessor reviewed its output. This audit trail is what external verifiers and CIPD quality assurance teams will look for.
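An audit entry covering those three facts can be very small. The sketch below shows one possible shape; the field names, the `make_entry` helper, and the JSON-lines output format are assumptions for illustration, not a format required by CIPD or produced by any specific tool.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    submission_id: str
    tool: str          # which AI tool was used
    step: str          # which review step it supported
    reviewed_by: str   # which assessor reviewed its output
    logged_at: str     # UTC timestamp in ISO 8601 format


def make_entry(submission_id: str, tool: str, step: str, reviewed_by: str) -> AuditEntry:
    """Build an audit entry, refusing blank values so no record is incomplete."""
    fields = {"submission_id": submission_id, "tool": tool,
              "step": step, "reviewed_by": reviewed_by}
    for name, value in fields.items():
        if not value.strip():
            raise ValueError(f"{name} must not be blank")
    return AuditEntry(**fields, logged_at=datetime.now(timezone.utc).isoformat())


def to_json_line(entry: AuditEntry) -> str:
    """Serialise an entry as one JSON line, suitable for an append-only log file."""
    return json.dumps(asdict(entry), sort_keys=True)
```

Making the entry immutable (`frozen=True`) and appending one line per event keeps the log easy to hand over during a quality assurance visit.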

Transparency with learners also matters. If a learner asks whether AI was used in preparing their feedback, your centre should have a clear, honest answer ready. Vague or evasive responses erode trust and can escalate into formal complaints.

"CIPD assessment must remain a human-led process; AI use is strictly limited to supportive roles."

Reviewing guidance on AI ethics in education is a practical step every centre should take before deploying any AI tool in their review workflow. Similarly, understanding how to ensure compliance with AI tools for CIPD qualifications will help you build a process that holds up under scrutiny.

One often-overlooked risk is assessor drift. When AI feedback is consistently good, assessors can gradually reduce their scrutiny of AI outputs. Regular internal audits and refresher training prevent this from becoming a systemic problem.

What most educators miss about AI in assignment review

There is a temptation, understandable given the workload pressures in assessment centres, to treat AI as a replacement for human effort rather than as a way to reduce repetitive tasks. That framing creates risk.

AI tools are genuinely excellent at pattern recognition, consistency checking, and rapid drafting. They are not good at reading the intent behind a learner's argument, recognising when a technically correct answer misses the spirit of the question, or applying the kind of contextual professional judgement that CIPD qualifications demand. Overestimating what AI can deliver leads to under-reviewed feedback and, eventually, compliance failures.

The centres that use AI most effectively treat it as a first-pass tool, not a final answer. They invest time in training assessors to critically evaluate AI outputs rather than simply accept them. They also communicate openly with learners about how AI is used, which builds rather than undermines trust.

Reviewing automated assessment insights reveals a consistent pattern: the efficiency gains are real, but they only materialise when human oversight remains rigorous. Cutting corners on the human review step to chase speed is the single most common mistake we see. The irony is that it usually creates more work downstream when errors surface during moderation or external quality assurance.

Streamline your CIPD assignment review with EduMark

Ready to put these insights into action? EduMark is built specifically for CIPD assessment centres that want the efficiency benefits of AI without compromising on compliance or integrity.

The platform generates structured draft feedback, embeds inline comments directly into Word documents, and maintains a full audit trail of AI involvement at every step. Human assessors remain in control of all grading decisions. EduMark operates within UK GDPR requirements, which supports your centre's own data handling obligations. Whether you are processing fifty submissions a month or five hundred, the credit-based model scales with your volume. Explore EduMark for CIPD assignment review and see how your centre can reduce feedback turnaround times while keeping every compliance requirement firmly in place.

Frequently asked questions

Can AI be used to fully mark CIPD assignments?

CIPD does not allow AI marking. Only qualified human reviewers can decide grades, though AI tools may support the feedback preparation stage.

Is using AI for assignment feedback in CIPD assessments compliant?

Yes, provided the final grading decision is made by a qualified human assessor. AI-assisted feedback is permitted when humans retain full control over grade outcomes.

How can we maintain compliance during AI-assisted assignment review?

Keep detailed records of AI involvement at each stage, ensure all grading is completed by a human assessor, and review your processes regularly against current CIPD compliance requirements.

What tools help streamline the assignment review process?

AI-assisted platforms like EduMark accelerate feedback drafting and provide audit trails, while compliance checklists and moderation logs help centres maintain CIPD standards throughout.