TL;DR:

- CIPD assessments require structured academic writing, critical analysis, and Harvard referencing.

- Human oversight remains essential: AI can support qualified assessors in grading but cannot replace them.

- Strong documentation, moderation, and compliance tools ensure assessment fairness and regulatory adherence.

Keeping CIPD assessments compliant, fair, and efficient is one of the most pressing challenges facing UK training providers right now. Regulatory scrutiny from Ofqual is intensifying, learner numbers are growing, and the promise of AI-assisted grading is tempting but fraught with risk if handled poorly. Get the process wrong and you face failed audits, inconsistent marking, and reputational damage. Get it right and you deliver faster turnaround, defensible decisions, and a better experience for every learner. This guide walks you through the essentials of CIPD assessment design, the tools that support compliant delivery, the role AI can legitimately play, and the quality checks that protect your centre from scrutiny.

Table of Contents

- Understanding CIPD assessment essentials

- Tools and resources for compliant assessment

- Designing, delivering and grading CIPD assessments

- AI in CIPD assessment: benefits, limits and regulatory guidance

- The uncomfortable truth about technology in assessment

- Make assessment simpler and more robust with EduMark

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Compliance is critical | Ofqual and CIPD enforce strict standards; cutting corners on evidence or structure risks non-compliance. |
| AI supports, not replaces | AI can help streamline assessment, but human oversight must be maintained at every stage. |
| Structure and referencing | PEEL academic writing and Harvard referencing underpin credible, defensible CIPD grading. |
| Continuous quality assurance | Moderation, recordkeeping, and peer review protect fairness and prepare your centre for audits. |

Understanding CIPD assessment essentials

Before you can build an efficient grading workflow, you need a firm grip on what CIPD assessments actually require. These are not generic vocational qualifications. They carry specific academic writing standards, evidence requirements, and regulatory obligations that shape every stage of the marking process.

CIPD qualifications are typically assessed through written assignments, work-based projects, and presentations. Each format demands a different approach from assessors, but all share a common thread: structured academic writing with critical analysis is non-negotiable, and referencing is crucial. That means learners must demonstrate they can apply theory to practice, not simply describe it.

The methodology most widely used across CIPD submissions is the PEEL writing structure (Point, Evidence, Explanation, Link). When assessors understand this structure deeply, they can identify where a learner has met the criterion and where the argument breaks down. Harvard referencing is the required citation format, and its absence or misuse is one of the most common reasons for borderline marks.

From a regulatory standpoint, Ofqual sets the overarching framework for vocational qualifications in England. You can explore the full scope of CIPD regulatory standards to understand what transparency and consistency obligations apply to your centre. Ofqual expects assessment decisions to be auditable, justifiable, and free from bias.

The types of educational assessments used across CIPD levels vary considerably, from reflective accounts at Level 3 to strategic reports at Level 7. Knowing which format applies to each unit helps you build marking schemes that are genuinely fit for purpose.

Here is a quick comparison of the core CIPD assessment types and what each demands from assessors:

| Assessment type | Key assessor focus | Common compliance risk |
|---|---|---|
| Written assignment | Critical analysis, referencing | Inconsistent grade boundaries |
| Work-based project | Evidence of practice | Weak audit trail |
| Presentation | Verbal reasoning, application | Subjective scoring without rubric |

Key foundations every assessor should confirm before marking:

- Assessment criteria reviewed before any marking begins

- Marking scheme aligned to the qualification level

- Evidence of learner understanding, not just description

- Referencing checked against Harvard conventions

- Moderation log prepared for quality assurance

Pro Tip: Always read the assessment criteria before designing or reviewing a marking scheme. Starting with the criteria rather than the submission prevents assessor bias from creeping into your judgements.

Tools and resources for compliant assessment

Once you understand the requirements, the next question is practical: what systems and materials do you need to deliver and quality-assure CIPD assessments at scale?

Most approved centres operate with a combination of a learning management system (LMS), an e-portfolio platform, and document-based submission tools. Each plays a different role. The LMS manages enrolment and communication. The e-portfolio captures learner evidence over time. Document tools handle the actual submission and feedback exchange.

What ties these together is your compliance infrastructure. Assessment data security is a legal obligation under UK GDPR, not an optional extra. Any platform storing learner submissions must offer encrypted storage, role-based access controls, and clear data retention policies.

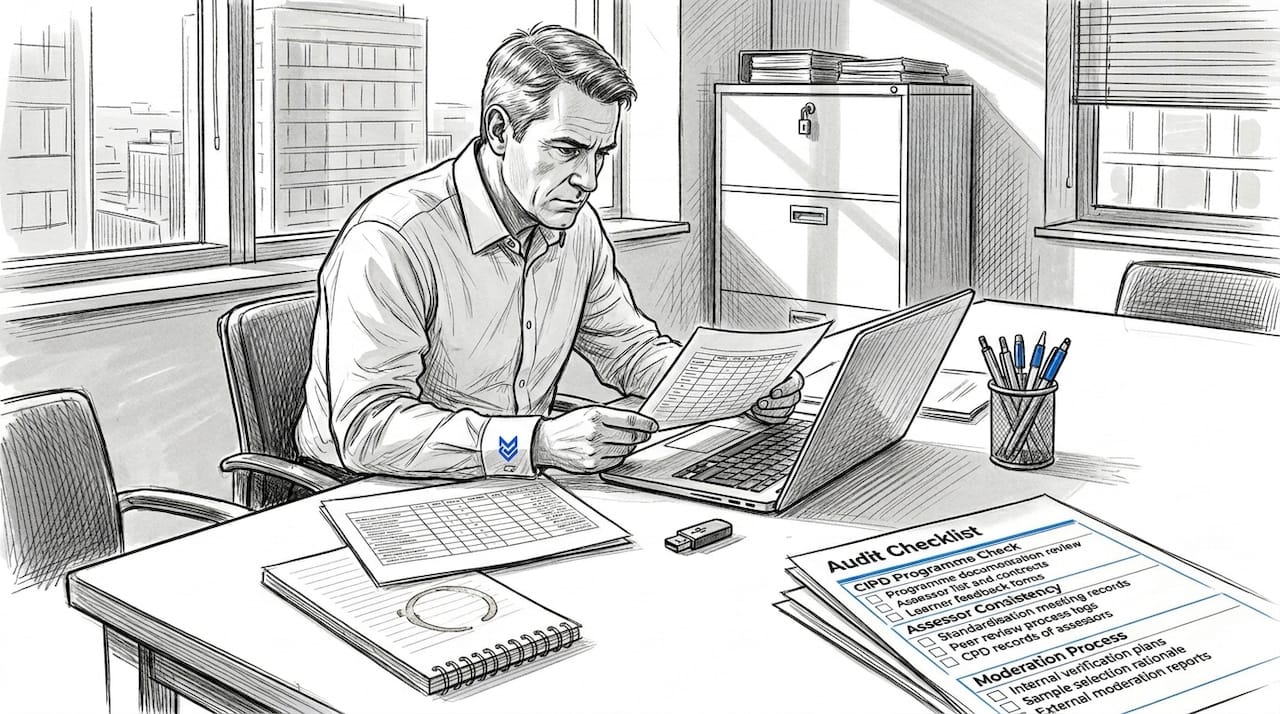

Ofqual regulates vocational qualifications like CIPD and expects centres to maintain robust audit trails for every assessment decision. That means your tools must generate timestamped records of who marked what, when, and on what basis.
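
To make "who marked what, when, and on what basis" concrete, here is a minimal Python sketch of a single audit-trail entry. It is an illustration under stated assumptions, not a prescribed Ofqual or CIPD format: the `MarkingRecord` fields, the `record_decision` and `to_log_line` helpers, and the idea of writing one JSON line per decision are all hypothetical choices your own platform may handle differently.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
import json


@dataclass(frozen=True)
class MarkingRecord:
    """One audit-trail entry: who marked what, when, and on what basis."""
    submission_id: str   # hypothetical identifier for the learner's submission
    assessor_id: str     # who made the decision
    criterion: str       # assessment criterion the decision relates to
    outcome: str         # e.g. "met" or "not met"
    rationale: str       # the basis for the decision
    marked_at: str       # ISO 8601 timestamp in UTC


def record_decision(submission_id, assessor_id, criterion, outcome, rationale):
    """Create a timestamped record; refuse entries that have no rationale."""
    if not rationale.strip():
        raise ValueError("A rationale is required for every assessment decision")
    return MarkingRecord(
        submission_id=submission_id,
        assessor_id=assessor_id,
        criterion=criterion,
        outcome=outcome,
        rationale=rationale.strip(),
        marked_at=datetime.now(timezone.utc).isoformat(),
    )


def to_log_line(record):
    """Serialise a record as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

The record is frozen so an entry cannot be silently edited after the fact, and the rationale check means a mark without a documented basis never reaches the log.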

Here is how common tools map to compliance functions:

| Tool or feature | Compliance function | Risk if absent |
|---|---|---|
| Anonymisation feature | Reduces assessor bias | Unfair marking claims |
| Audit trail log | Supports external QA | Failed moderation review |
| Moderation record template | Standardises decisions | Inconsistent outcomes |
| Secure document storage | GDPR compliance | Data breach liability |
| AI-assisted feedback tool | Speed and consistency | Over-reliance without oversight |

AI tools are increasingly used as a supplementary layer in this stack. They can flag inconsistencies, support moderation, and speed up logistics. But they are not a replacement for qualified human judgement, particularly for summative assessments. Your assessment checklist should confirm that every tool in your workflow has a defined role and a human checkpoint attached to it.

The most common oversight we see in centres is weak evidence-tracking. Assessors make sound decisions but fail to document the rationale clearly enough to survive external scrutiny. Good document control is not bureaucracy. It is your defence when an external quality assurer asks why a particular grade was awarded.

Designing, delivering and grading CIPD assessments

With the right tools in place, the focus shifts to the practical workflow of assessment design, delivery, and marking. CIPD-approved centres must follow standards for assessment delivery, and those standards begin well before a learner submits anything.

Designing a valid assessment task means ensuring it genuinely tests the learning outcomes for that unit. Vague briefs produce vague submissions. Clear, well-structured briefs that reference the assessment criteria directly give learners the best chance of demonstrating competence and give assessors a clear basis for judgement.

Following academic writing guidance when briefing learners is particularly important at Levels 5 and 7, where the expectation of critical analysis is highest. Learners who understand PEEL structure and Harvard referencing before they begin produce submissions that are far easier to mark consistently.

Here is a practical delivery and marking workflow with compliance checkpoints built in:

- Design the assessment task aligned to unit learning outcomes and Ofqual standards

- Brief learners clearly, including referencing requirements and word count expectations

- Anonymise submissions before distributing to assessors where your process allows

- Apply the marking scheme consistently, using the criteria as the primary reference

- Record your rationale for each mark in a moderation-ready format

- Conduct internal moderation by sampling a percentage of marked work

- Retain all documentation including feedback, mark sheets, and moderation logs

- Prepare for external quality assurance with a complete audit trail
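
The internal moderation step in the workflow above can be sketched in a few lines of Python. This is a hedged illustration only: the function name `select_moderation_sample`, the 20% default, and the minimum of three submissions are assumptions made for the example, not rates set by CIPD or Ofqual, so substitute whatever your centre's sampling policy specifies.

```python
import math
import random


def select_moderation_sample(submission_ids, fraction=0.2, minimum=3, seed=None):
    """Pick a random sample of marked submissions for internal moderation.

    fraction and minimum are illustrative defaults, not prescribed rates.
    """
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be greater than 0 and at most 1")
    ids = sorted(set(submission_ids))           # de-duplicate, stable order
    target = max(minimum, math.ceil(len(ids) * fraction))
    size = min(target, len(ids))                # never ask for more than exist
    rng = random.Random(seed)                   # a fixed seed makes the draw repeatable
    return sorted(rng.sample(ids, size))
```

Recording the seed alongside the moderation log lets you show an external quality assurer exactly how the sample was drawn, rather than leaving the selection open to claims of cherry-picking.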

Compliant feedback is specific, criterion-referenced, and actionable. Vague comments like "good effort" or "needs more analysis" do not meet the standard. Your structured feedback should tell the learner exactly which criterion they met, how they met it, and what they would need to do differently to improve.

Review your assessment best practices regularly to keep your team aligned, particularly when new assessors join.

Pro Tip: Standardisation meetings before a marking round are one of the most effective ways to reduce inter-assessor variability. Even a 30-minute session reviewing sample submissions against the marking scheme can prevent significant inconsistency.

AI in CIPD assessment: benefits, limits and regulatory guidance

AI has genuine value in the assessment process. But understanding where that value begins and ends is essential for any centre serious about compliance.

What AI does well in a CIPD marking context:

- Identifying structural inconsistencies in submissions

- Flagging missing referencing or formatting errors

- Supporting moderation by comparing marks across assessors

- Generating draft feedback for human review and refinement

- Reducing administrative burden on experienced assessors
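
The referencing flag in the list above does not always need a sophisticated model. As a rough sketch, a rule-based check can highlight substantial paragraphs that contain no author-date citation at all. The `AUTHOR_DATE` pattern, the `paragraphs_without_citation` name, and the 40-word threshold are assumptions for illustration; the check only detects the presence of something shaped like "(CIPD, 2023)" and cannot judge whether a citation is accurate or correctly formatted.

```python
import re

# A parenthetical containing a four-digit year,
# e.g. "(CIPD, 2023)" or the "(2021a, p. 4)" in "Smith (2021a, p. 4)".
AUTHOR_DATE = re.compile(r"\([^()]*\b(?:19|20)\d{2}[a-z]?\b[^()]*\)")


def paragraphs_without_citation(text, min_words=40):
    """Return (index, preview) for each substantial paragraph lacking a citation."""
    flagged = []
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    for index, paragraph in enumerate(paragraphs, start=1):
        if len(paragraph.split()) < min_words:
            continue  # skip headings and very short paragraphs
        if not AUTHOR_DATE.search(paragraph):
            flagged.append((index, paragraph[:60]))
    return flagged
```

A flagged paragraph is a prompt for the assessor to look more closely, not evidence that the learner failed a criterion.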

What AI cannot do under current regulatory expectations is act as the sole decision-maker for summative grading, because relying on it alone in high-stakes assessment creates transparency and fairness risks. This is less a technical limitation than a principled position on accountability.

Critical note: Ofqual's guidance is clear that AI must remain supplementary in high-stakes assessment contexts. Any centre using AI tools without documented human oversight risks non-compliance with quality assurance requirements.

A hybrid model works well in practice. AI reviews a submission for structural and referencing issues, generates a draft feedback summary, and flags it for a qualified assessor. The assessor reviews the AI output, applies professional judgement, and finalises the mark with a documented rationale. This approach supports consistent assessment moderation without sacrificing speed.
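
The hybrid model described above can be expressed as a simple rule in code: the AI output is advisory, and nothing becomes a final mark without a named assessor and a written rationale. The Python sketch below assumes hypothetical `AIDraft` and `FinalDecision` structures and a `finalise` function; it does not represent any particular product's API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AIDraft:
    """Output of a hypothetical AI pre-check; advisory only."""
    submission_id: str
    flags: list
    draft_feedback: str


@dataclass
class FinalDecision:
    """The human-owned outcome that goes into the audit trail."""
    submission_id: str
    assessor_id: str
    mark: str
    rationale: str
    ai_assisted: bool
    ai_flags_reviewed: list


def finalise(draft: Optional[AIDraft], submission_id, assessor_id, mark, rationale):
    """Only a named human assessor with a written rationale can finalise a mark."""
    if not assessor_id:
        raise PermissionError("A qualified human assessor must sign off the mark")
    if not rationale.strip():
        raise ValueError("The assessor's rationale must be documented")
    if draft is not None and draft.submission_id != submission_id:
        raise ValueError("AI draft belongs to a different submission")
    return FinalDecision(
        submission_id=submission_id,
        assessor_id=assessor_id,
        mark=mark,
        rationale=rationale.strip(),
        ai_assisted=draft is not None,                       # documents AI involvement
        ai_flags_reviewed=list(draft.flags) if draft else [],
    )
```

Storing `ai_assisted` and the reviewed flags with the decision means the AI's contribution is documented alongside the human judgement instead of being hidden behind it.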

The case for automated assessment in CIPD is strongest when it is positioned as a quality assurance aid rather than a grading engine. Centres that use AI to catch what humans miss, rather than to replace human review, consistently perform better in external quality assurance visits.

Transparency is non-negotiable. If AI contributes to a grading decision, that contribution must be documented. External reviewers will ask. Your audit trail must answer.

The uncomfortable truth about technology in assessment

Here is what many technology vendors will not tell you: efficiency gains from AI tools can actually increase your compliance risk if they are not paired with rigorous human oversight.

When centres adopt new tools quickly, the audit trail often suffers. Assessors trust the AI output without questioning it. Moderation records become thinner. And when an external quality assurer arrives, the documentation does not reflect the quality of the decisions actually made.

What external reviewers are really looking for is not a slick platform. They want a transparent human audit trail that shows qualified professionals made defensible judgements. Technology that obscures that trail, however efficient it feels, is a liability.

We have seen assessments challenged not because the marks were wrong, but because the rationale was not documented clearly enough to withstand scrutiny. The fix is not less technology. It is better integration of technology with human accountability.

Ensuring compliance with feedback processes is the area where most centres have room to improve. The sustainable strategy for 2026 and beyond is pairing smart tools with expert human review, every time, without exception.

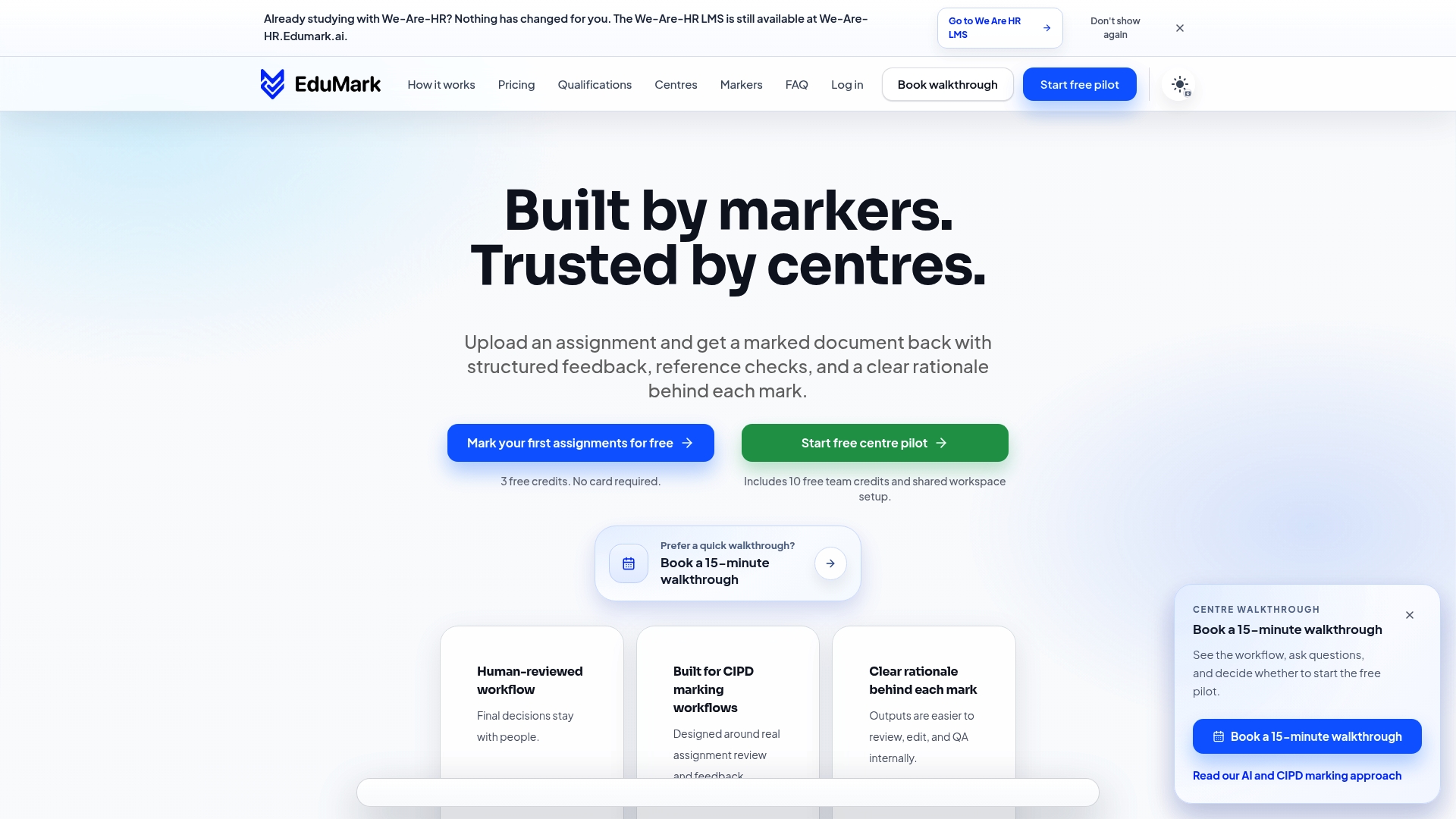

Make assessment simpler and more robust with EduMark

If the workflows in this guide feel complex to implement alone, EduMark is built to make them manageable.

AI-assisted CIPD marking through EduMark gives your assessors structured feedback tools, inline comments, reference checks, and transparent mark rationales embedded directly into Word documents. Every submission passes through a human-reviewed workflow, so your audit trail stays clean and your compliance position stays strong. Explore case studies from centres already using EduMark to reduce turnaround times and improve moderation consistency. If your next CIPD audit cycle is approaching, now is the right moment to future-proof your assessment process with tools designed specifically for the job.

Frequently asked questions

What makes CIPD assessment different from other vocational qualifications?

CIPD assessments require strictly structured academic writing: PEEL structure, evidence-based practice drawing on CIPD factsheets and journals, and Harvard referencing, all within the regulatory framework set by Ofqual. The emphasis on critical analysis rather than description sets them apart from most other vocational qualifications.

Can AI fully automate CIPD assessment grading?

No. Ofqual's position is that AI cannot act as the sole marker for high-stakes CIPD assessments because of bias risks, transparency concerns, and the resulting risk of regulatory non-compliance. Human oversight is required at every summative grading stage.

What is the best way to moderate assessment consistency across assessors?

Use standardised marking guides, documented moderation records, and structured quality assurance checks. CIPD-approved centres are expected to follow defined standards for assessment delivery that include both digital and peer review processes.

Is Harvard referencing mandatory for all CIPD submissions?

Yes. Harvard referencing is the required format for CIPD assignments and supports the robust evidence and audit trails that Ofqual expects from approved centres.

How can we ensure up-to-date compliance in our assessment process?

Regularly consult Ofqual updates, review your marking protocols against current guidance, and keep your assessment checklists and data security practices aligned with the latest regulatory expectations.