TL;DR:

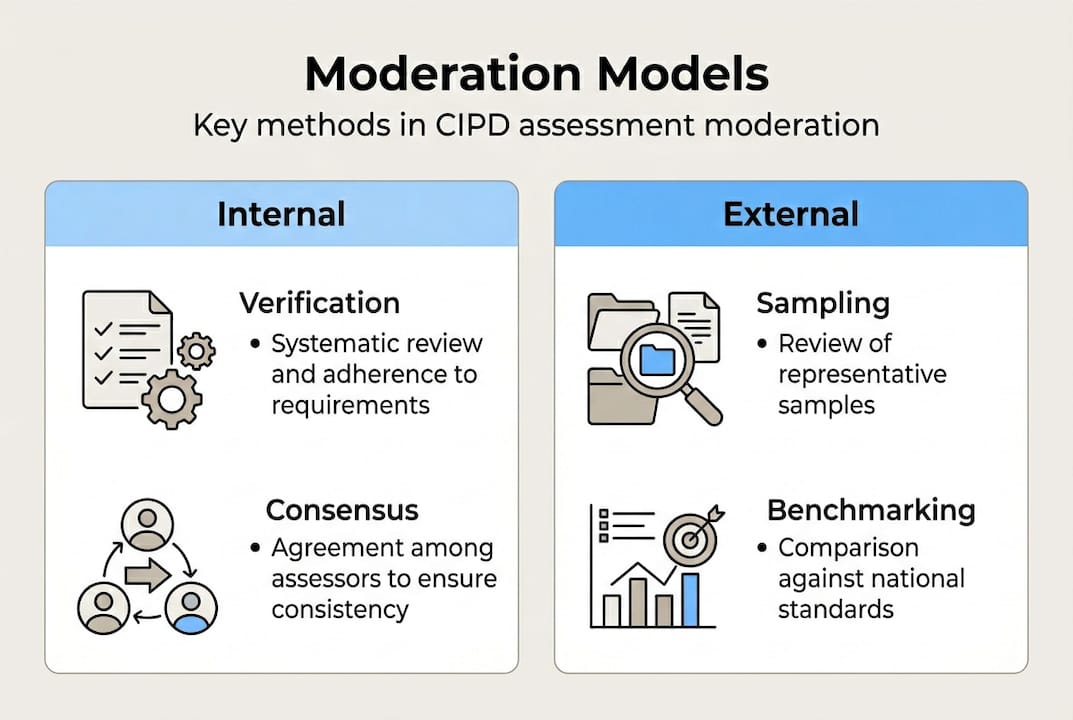

- Assessment moderation ensures fair, consistent marking aligned with standards through internal and external checks.

- Different models like consensus, verification, and comparative judgement suit varied assessment needs and timeframes.

- Strong moderation culture involves trust, calibration, and use of AI tools to support fairness and reduce bias.

Assessment moderation is one of those terms that everyone in a CIPD training centre uses, yet few agree on what it actually involves in practice. Many coordinators conflate it with second marking, others treat it as a box-ticking exercise before results are released, and some are genuinely unsure where their centre's responsibility ends and CIPD's begins. That confusion is costly. Inconsistent moderation exposes your centre to compliance risks, learner appeals, and reputational damage. This article cuts through the ambiguity, explaining what moderation really means in the CIPD context, how the main models work, where bias creeps in, and what practical steps you can take to run a tighter, fairer process.

Table of Contents

- What is assessment moderation in CIPD training?

- Core moderation processes and models

- Ensuring consistency and minimising bias

- CIPD's moderation workflow: from centre marking to results

- Practical strategies and common pitfalls

- What most guidance misses about assessment moderation

- Explore smarter moderation solutions for your centre

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Dual moderation layers | CIPD centres must combine robust internal moderation with CIPD's external sampling to ensure compliance and fairness. |
| Key moderation methods | Consensus, verification, and comparative judgement each offer strengths; choose the right model for your context. |
| Bias reduction strategies | Use benchmarking, regular calibration, and AI tools to minimise errors while keeping human oversight central. |
| Stepwise moderation workflow | Follow structured steps from marking to CIPD validation to avoid delays and compliance failures. |
| Continuous improvement | Practical checks and reflective practice drive lasting gains in assessment validity and reliability. |

What is assessment moderation in CIPD training?

At its core, moderation is the process of checking that marking is fair, consistent, and aligned with agreed standards. It is not the same as marking itself. Where marking applies judgement to a learner's work, moderation scrutinises that judgement and asks whether another assessor would reach the same conclusion.

In the CIPD context, assessment moderation involves two distinct layers: internal moderation carried out by your centre, and external moderation sampling carried out by CIPD to ensure fair, valid, and consistent marking compliant with qualification standards. Both layers are mandatory, and understanding where one ends and the other begins is essential for any assessment coordinator.

Internal moderation is your centre's responsibility. It means reviewing a sample of marked work before results are submitted, checking that assessors have applied the marking criteria correctly, and documenting any adjustments. External moderation is CIPD's role. CIPD samples your centre's marking across units, validates your process, and releases results to learners only once it is satisfied. Think of it as a quality audit of your quality audit.

For coordinators, this dual structure is not just bureaucratic overhead. It is your primary defence against learner appeals, your evidence of compliance with CIPD regulatory standards, and the mechanism that builds trust with learners and employers alike. Reviewing a solid CIPD assessment checklist before each moderation round can help you stay on top of every requirement.

| Responsibility | Internal (centre) | External (CIPD) |
|---|---|---|
| Who carries it out | Centre assessors and moderators | CIPD quality team |
| When it happens | Before submission | After submission, in moderation window |
| What is reviewed | Sample of marked work | Sample of centre's moderation |
| Output | Adjusted marks, documented rationale | Validated results released to learners |

Following moderation best practices consistently across both layers is what separates centres that sail through external review from those that face queries and delays.

"Moderation ensures fairness, reduces assessor bias, and provides a reliable basis for results that learners and employers can trust."

- Moderation ensures marking is applied fairly across all learners

- It reduces the risk of individual assessor bias skewing results

- It provides documented evidence of compliance with qualification standards

Core moderation processes and models

With moderation defined, we can now compare the main approaches your centre might use. Not all moderation is the same, and choosing the right model for your context makes a significant difference to both quality and workload.

Consensus moderation brings assessors together to discuss and agree on marks for a shared sample of work. It is particularly useful when introducing new assessment criteria or onboarding new assessors, because it builds a shared understanding through dialogue. The downside is time: it requires everyone in the room at once, which is rarely easy to arrange.

Verification moderation is the most common model in CIPD centres. A second assessor reviews a sample of already-marked work and checks whether the marks are defensible. It is faster than consensus, but it can become superficial if the verifier feels reluctant to challenge a colleague's judgement. The mechanics of verification work best when the verifier is genuinely independent and the process is documented clearly.

Comparative judgement takes a different approach entirely. Rather than marking against a rubric, assessors rank pieces of work relative to each other. Research suggests this can be highly reliable, but it is less common in CIPD settings because it does not map neatly onto pass/refer decisions.
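To make the ranking idea concrete, here is a minimal sketch in Python. It assumes each judgement is recorded as a hypothetical `(winner, loser)` pair of script identifiers and orders scripts by the proportion of comparisons they won. This is a deliberate simplification: dedicated comparative judgement tools generally fit a statistical model to the comparisons rather than counting wins, so treat it as an illustration of the principle only.

```python
from collections import Counter


def rank_by_wins(judgements):
    """Order scripts by the share of pairwise comparisons they won.

    `judgements` is a list of (winner, loser) pairs. The function name and
    data shape are illustrative assumptions, not part of any CIPD process.
    """
    wins = Counter()
    appearances = Counter()
    for winner, loser in judgements:
        wins[winner] += 1
        appearances[winner] += 1
        appearances[loser] += 1
    # Highest win share first; ties are broken alphabetically by script id.
    return sorted(appearances, key=lambda s: (-wins[s] / appearances[s], s))
```

The output is a rank order, not a pass/refer decision, which is exactly why the approach needs an extra step before it can feed CIPD results.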

Benchmarking with exemplars before marking begins is strongly recommended regardless of which model you use. It aligns assessors before they diverge, rather than trying to reconcile differences afterwards. Reviewing assessment principles as a team before each round reinforces that alignment.

The marking and moderation toolkit from LSE's Eden Centre is a useful reference for understanding how these models apply in practice.

| Model | Focus | Time required | Bias reduction | Best for |
|---|---|---|---|---|
| Consensus | Group agreement | High | Strong | New criteria, new assessors |
| Verification | Second check | Medium | Moderate | Routine moderation cycles |
| Comparative judgement | Rank ordering | Medium | Strong | Holistic quality checks |

A typical internal moderation workflow runs as follows:

- Assessors mark their allocated submissions independently

- Moderation coordinator selects a representative sample

- Second assessor reviews the sample against marking criteria

- Discrepancies are discussed and documented

- Adjustments are applied and rationale is recorded

- Final marks are submitted to CIPD within the moderation window
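The sampling and discrepancy steps above can be sketched in a few lines of Python. This is one possible approach rather than a CIPD requirement: it assumes each submission is a dictionary with hypothetical `id`, `assessor`, and `outcome` fields, and it treats "representative" as meaning that every assessor and every outcome appears in the sample. The sample size per group is a placeholder your centre would set for itself.

```python
import random
from collections import defaultdict


def select_sample(submissions, per_group=2, seed=0):
    """Pick up to `per_group` submissions for each (assessor, outcome) pair."""
    rng = random.Random(seed)  # fixed seed so the selection can be reproduced
    groups = defaultdict(list)
    for sub in submissions:
        groups[(sub["assessor"], sub["outcome"])].append(sub)
    sample = []
    for key in sorted(groups):
        members = groups[key]
        sample.extend(rng.sample(members, min(per_group, len(members))))
    return sample


def find_discrepancies(sample, second_outcomes):
    """Return ids where the second assessor's outcome differs from the first.

    Submissions the second assessor has not yet reviewed are skipped.
    """
    return [
        sub["id"]
        for sub in sample
        if second_outcomes.get(sub["id"]) not in (None, sub["outcome"])
    ]
```

Recording the seed alongside the sample gives you evidence of how the selection was made if the process is queried later.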

Pro Tip: Run a short calibration exercise using two or three anonymised submissions before your main marking round. It takes thirty minutes and prevents hours of retrospective disagreement.

Ensuring consistency and minimising bias

Now that you know the core processes, let's focus on how to ensure your moderation is truly consistent and fair. This is where most centres underestimate the challenge.

Bias in marking is not always deliberate. Teacher bias persists even in experienced assessors: markers can be harsher on work from learners they do not know personally, or conversely, overly generous when moderating a colleague's marking. Both tendencies are human. Neither is acceptable in a compliant moderation process.

Pre-marking benchmarking is the single most effective tool against bias. When assessors agree on what a pass looks like before they start marking, they are less likely to drift. Calibration meetings, where assessors mark the same piece independently and then compare, surface disagreements early. Effective calibration strategies also include using clear rubrics that focus on the work rather than the learner, and involving learners in understanding the criteria so they can self-assess.

AI tools are increasingly used to support consistency. They can flag anomalies in marking patterns, identify submissions that sit close to grade boundaries, and apply criteria without the fatigue that affects human markers after a long marking session. However, AI in marking requires human oversight. AI does not understand context the way an experienced assessor does, and new or nuanced criteria need fuller human discussion before AI outputs can be trusted. The importance of human review in any AI-assisted process cannot be overstated.
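The two checks mentioned above, boundary proximity and unusual marking patterns, do not need AI to illustrate. The sketch below uses plain rules and assumes numeric marks with a single pass mark, which may not match how your units are actually graded. The `pass_mark`, `margin`, and `tolerance` values are hypothetical parameters, and anything flagged is a prompt for human review, not a verdict.

```python
from statistics import mean


def near_boundary(marks, pass_mark, margin):
    """Return ids of submissions whose mark is within `margin` of `pass_mark`."""
    return sorted(
        sid for sid, mark in marks.items() if abs(mark - pass_mark) <= margin
    )


def assessor_drift(marks_by_assessor, tolerance):
    """Return assessors whose mean mark is more than `tolerance` from the overall mean.

    The value for each flagged assessor is their mean minus the overall mean,
    so a negative number suggests harsher marking and a positive one more generous.
    """
    all_marks = [m for marks in marks_by_assessor.values() for m in marks]
    overall = mean(all_marks)
    drift = {}
    for assessor, marks in marks_by_assessor.items():
        gap = mean(marks) - overall
        if abs(gap) > tolerance:
            drift[assessor] = round(gap, 2)
    return drift
```

A gap in means can reflect a genuinely stronger or weaker cohort rather than assessor bias, which is one reason the human discussion still matters.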

Pro Tip: Always calibrate with comparative exemplars before major assessment rounds. Choose one strong pass, one borderline pass, and one refer, and have your team mark all three before touching the real submissions.
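If you want a quick record of how a calibration exercise went, a sketch like the following can summarise it. It assumes the team has already agreed a reference outcome for each exemplar, and that each assessor's decisions are captured in a simple dictionary. All names here are illustrative.

```python
def calibration_report(reference, decisions):
    """Compare each assessor's exemplar decisions with the agreed reference.

    `reference` maps exemplar name to the agreed outcome.
    `decisions` maps assessor name to their own {exemplar: outcome} mapping.
    """
    report = {}
    for assessor, given in decisions.items():
        mismatches = sorted(
            exemplar
            for exemplar, expected in reference.items()
            if given.get(exemplar) != expected
        )
        report[assessor] = {
            "agreement": 1 - len(mismatches) / len(reference),
            "mismatches": mismatches,
        }
    return report


reference = {"strong pass": "pass", "borderline pass": "pass", "refer": "refer"}
decisions = {
    "Assessor 1": {"strong pass": "pass", "borderline pass": "pass", "refer": "refer"},
    "Assessor 2": {"strong pass": "pass", "borderline pass": "refer", "refer": "refer"},
}
report = calibration_report(reference, decisions)
```

In this example the second assessor disagrees only on the borderline exemplar, which is usually the most productive place to focus the calibration discussion.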

Common pitfalls to watch for:

- Implicit bias towards or against unfamiliar learners

- Rubrics that are too vague to apply consistently

- Moderators who accept marks without genuine scrutiny

- Undocumented adjustments that cannot be evidenced later

- AI outputs used without human verification

CIPD's moderation workflow: from centre marking to results

Let's connect these strategies directly to the stages of moderation your CIPD centre must follow. Understanding the sequence helps you plan your internal process around CIPD's external requirements, rather than scrambling to catch up.

Centres mark assessments and conduct internal moderation before submitting to CIPD. CIPD then performs moderation sampling and validates centre marking before releasing results to learners. That validation step is not a formality. CIPD samples centre marking per unit to ensure fairness and validity, and this occurs within a defined moderation window after submission. If your internal moderation is weak, CIPD's sampling will find it.

"CIPD's external moderation is not a second opinion. It is a structured quality check on your centre's entire moderation process, not just individual marks."

The workflow for coordinators runs as follows:

- Assessors complete marking for all submitted units

- Coordinator conducts internal moderation on a representative sample

- Discrepancies are resolved and documented with clear rationale

- Marks and moderation evidence are submitted to CIPD

- CIPD samples the submission during the moderation window

- CIPD validates the centre's marking and process

- Results are released to learners

Timing is critical. The moderation window is fixed, and late or incomplete submissions delay results for learners. Building your internal moderation schedule backwards from the submission deadline, rather than forwards from the marking completion date, is a practical habit that prevents last-minute pressure. Understanding how AI in grading can accelerate your internal review without compromising quality is worth exploring as submission volumes grow.
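Planning backwards from the deadline is simple date arithmetic. The sketch below assumes you can estimate each stage in calendar days; the stage names and durations are placeholders, and it ignores weekends and holidays, so a real plan would need more slack.

```python
from datetime import date, timedelta


def backward_schedule(deadline, stages):
    """Work back from `deadline` to give each stage a start and end date.

    `stages` is a list of (name, days) tuples in the order the stages happen.
    Returns a list of (name, start, end) tuples in that same order.
    """
    plan = []
    end = deadline
    for name, days in reversed(stages):
        start = end - timedelta(days=days)
        plan.append((name, start, end))
        end = start
    plan.reverse()
    return plan


# Hypothetical deadline and durations, for illustration only.
plan = backward_schedule(
    date(2025, 6, 30),
    [("marking", 10), ("internal moderation", 5), ("documentation", 2)],
)
```

The first entry in the plan shows the latest date on which marking can begin without squeezing moderation, which is the number most calendars leave out.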

Practical strategies and common pitfalls

Finally, let's get practical: what can you do this term for more reliable and compliant moderation?

The purpose of moderation is to develop a shared understanding of standards, ensure validity and reliability, reduce bias, and support assessor development. That last point is often overlooked. Moderation is a professional development opportunity, not just a compliance exercise. When assessors discuss why a piece of work passes or refers, they sharpen their own judgement for the next round.

However, moderation is time-intensive, and the trade-offs between consensus and verification models are real. Centres under pressure often rush the process, which is precisely when errors occur. Building moderation time into your assessment calendar as a non-negotiable block, rather than fitting it around other commitments, is the most straightforward improvement most centres can make. Exploring AI workflow tools can also reduce the administrative burden without cutting corners on quality.

Pro Tip: Schedule a pre-marking review meeting and a post-moderation debrief for every major assessment round. The debrief is where learning happens and where the next round's calibration exemplars are identified.

Top pitfalls to avoid:

- Rushing moderation to meet submission deadlines

- Using untrained or unfamiliar moderators for complex units

- Failing to document the rationale for mark adjustments

- Ignoring patterns in AI-flagged anomalies

- Treating moderation as a one-person task rather than a team process

Shared criteria, consistent evidence standards, overt benchmarking, and structured feedback loops between assessors are the foundation of a moderation process that holds up under external scrutiny.

What most guidance misses about assessment moderation

Putting the official workflow aside, here is what most experienced coordinators know but guidance rarely covers: no system totally eliminates inconsistency. Bias and interpretation are always present, even in the most carefully designed process. Moderation is both a science and a craft. It is relational, not just procedural. The quality of your moderation depends as much on the trust and openness between assessors as it does on the rubric they are using.

The most effective moderation cultures we see are ones where assessors feel safe to be challenged and to challenge others without it becoming personal. That psychological safety does not come from a policy document. It comes from leadership that models intellectual humility and treats disagreement as evidence of rigour, not conflict.

AI tools, including those that support ethical AI use in moderation, are genuinely useful for consistency. But they surface patterns; they do not replace the dialogue that turns those patterns into improved practice. The centres that get the most from AI-assisted moderation are the ones that use it to prompt better conversations, not to avoid them.

Explore smarter moderation solutions for your centre

Moderation complexity does not have to mean moderation chaos. The strategies in this article give you a solid framework, but implementing them consistently across busy assessment cycles is where most centres struggle.

EduMark's AI-assisted CIPD marking platform is built specifically for this challenge. It supports both human and AI-driven moderation workflows, with structured feedback, inline comments, confidence checks, and detailed review summaries embedded directly into Word documents. Every mark comes with a transparent rationale, making your internal moderation evidence stronger and your external CIPD sampling smoother. If your centre is ready to raise its moderation standards without adding to your team's workload, EduMark is worth a closer look.

Frequently asked questions

What is the main purpose of assessment moderation?

Assessment moderation ensures marking is fair, consistent, and aligned with agreed standards across all assessors, while also supporting assessor development and reducing bias.

Who conducts moderation in CIPD qualifications?

CIPD centres conduct internal moderation first, and CIPD then carries out external sampling to validate centre marking before results are released to learners.

How does AI contribute to moderation?

AI can improve consistency by flagging marking anomalies and submissions that sit close to grade boundaries, but human oversight remains essential for contextual judgement and nuanced assessment decisions.

What are common moderation pitfalls to avoid?

Key pitfalls include rushed moderation checks, unclear rubrics, a lack of pre-marking benchmarking, and marker bias that goes unchallenged even within a structured process.