TL;DR:

- Detailed AI feedback must be timely, specific, actionable, and balanced to maximize learning impact.

- Research shows AI-generated detailed feedback improves exam scores, passing rates, and learner engagement significantly.

- A hybrid approach combining AI efficiency with human oversight ensures quality, reduces risks, and enhances learner uptake.

Speed is the promise of AI grading tools, but speed without substance risks shortchanging learners. Many assessment centres assume that faster turnaround means shallower responses, yet the evidence suggests the opposite is achievable. Effect size data from Hattie and Timperley's meta-analysis places detailed, well-structured feedback at 0.73, which puts it among the most powerful interventions available to educators. This article examines what truly detailed feedback looks like in an AI-assisted context, how it measurably improves student outcomes, and what practical strategies assessment centres can use right now to maximise its impact.

Table of Contents

- What makes feedback truly 'detailed'?

- How does detailed feedback impact student outcomes?

- Proven strategies for delivering actionable feedback with AI

- Addressing limitations and ensuring quality feedback

- A fresh perspective on feedback: prioritising uptake over automation

- Transform your assessment outcomes with AI-powered feedback

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Detailed feedback matters | Rich, actionable insights improve learning more than simple scores or grades. |
| AI supports strong outcomes | Evidence shows AI feedback can raise exam scores and pass rates significantly. |
| Hybrid models are best | Blending AI with teacher input addresses risks and retains the value of human judgement. |
| Student engagement is vital | Even the best feedback is only effective if students read and act on it. |

What makes feedback truly 'detailed'?

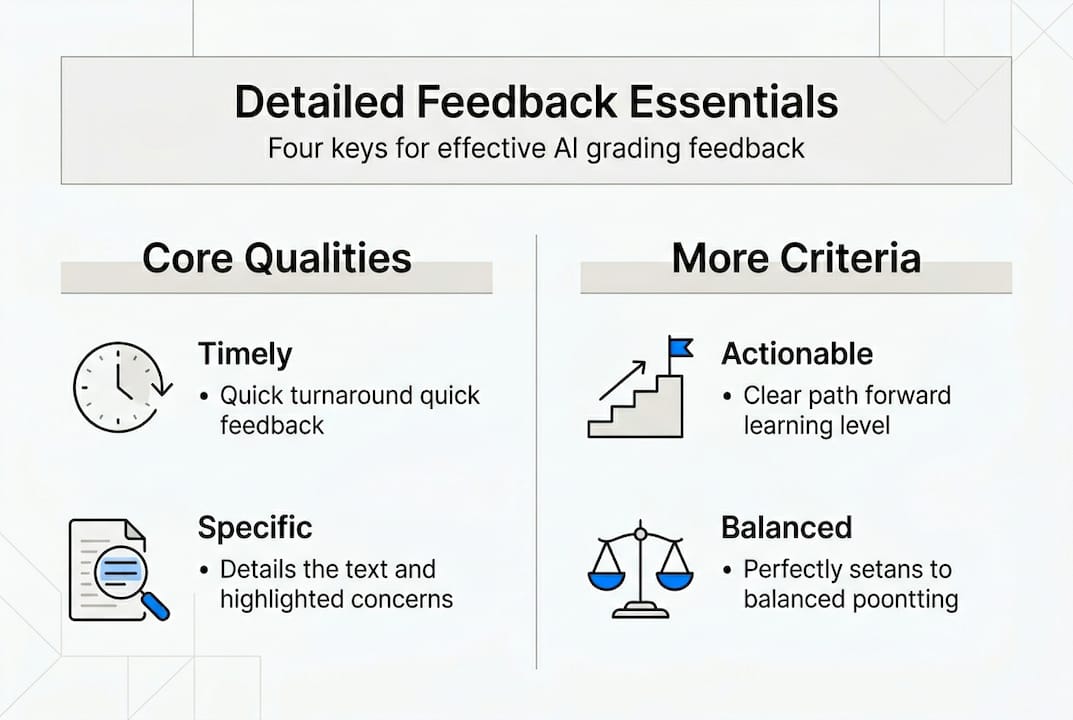

Not all feedback is created equal. A grade and a single sentence of commentary may tell a learner they fell short, but it rarely tells them how to do better next time. Genuinely detailed feedback has four consistent characteristics: it is timely, specific, actionable, and balanced.

Timely means the learner receives it while the work is still fresh in their mind. Specific means it refers to identifiable moments in the submission rather than vague impressions. Actionable means it points toward concrete next steps. Balanced means it acknowledges both strength and gap, so learners are motivated to continue rather than simply told they failed.

Research confirms this matters enormously. Feedback that meets these four criteria is associated with an effect size of 0.73, ranking it far above many other educational interventions in terms of impact on learning gains. For assessment centres using AI-assisted tools, this is not a minor detail. It is the difference between a marking process that simply records performance and one that actively builds capability.

| Weak feedback | Detailed feedback |
|---|---|
| "Good attempt but needs improvement." | "Your analysis in section two is well structured. To strengthen it, link your argument back to the specific CIPD unit criteria in paragraph three." |
| "Referencing is poor." | "Three citations are missing page numbers. Use CIPD's referencing guide and recheck sources in paragraphs four and six." |
| "Lacks critical thinking." | "Consider opposing viewpoints. For example, counter the stakeholder model with a resource-based view to demonstrate deeper analysis." |

Hattie and Timperley's model distinguishes four levels at which feedback can operate: task, process, self-regulation, and self. Feedback aimed at the self, such as general praise, is the least effective and is best left out. Of the remaining three, feedback that only addresses the final product (task level) has the narrowest reach, while feedback that guides how learners approached the problem (process level) and helps them manage their own learning (self-regulation level) produces the deepest gains.

Features of effective AI-driven feedback include:

- Inline comments tied to specific passages rather than summary remarks only

- Rubric-aligned rationale so learners understand how marks were assigned

- Suggested resources or revision steps embedded within the response

- Confidence indicators that signal where the AI is certain versus where human review is advised

Pro Tip: When configuring AI feedback tools, instruct the system to address at least one process-level and one self-regulation-level point per submission. This single adjustment meaningfully increases the practical usefulness of every response.
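
As a rough illustration of the features and the Pro Tip above, the sketch below models one piece of feedback as a small Python data structure and checks whether it covers the process and self-regulation levels. Every name here (`Level`, `Comment`, `Feedback`) and the 0.7 confidence threshold are hypothetical choices made for this example, not the schema of any particular AI grading tool.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Set


class Level(Enum):
    TASK = "task"
    PROCESS = "process"
    SELF_REGULATION = "self_regulation"


@dataclass
class Comment:
    passage: str       # excerpt or location the inline comment is tied to
    text: str          # the feedback itself
    level: Level       # which feedback level the comment addresses
    criterion: str     # rubric criterion that justifies the comment
    confidence: float  # 0.0 to 1.0, assumed to be reported by the AI tool


@dataclass
class Feedback:
    comments: List[Comment] = field(default_factory=list)

    def missing_levels(self) -> Set[Level]:
        """Return the required levels that no comment addresses yet."""
        required = {Level.PROCESS, Level.SELF_REGULATION}
        present = {c.level for c in self.comments}
        return required - present

    def needs_human_review(self, threshold: float = 0.7) -> List[Comment]:
        """Return comments whose confidence falls below the threshold."""
        return [c for c in self.comments if c.confidence < threshold]
```

A response whose `missing_levels()` result is not empty could be sent back to the AI tool for another pass, while anything returned by `needs_human_review()` would go to an assessor.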

Used thoughtfully, AI tools can produce feedback that rivals the depth of experienced human assessors, provided the feedback architecture is designed with these principles in mind. Platforms built around accurate AI grading give institutions the scaffolding needed to meet this standard consistently.

How does detailed feedback impact student outcomes?

The case for detailed feedback is not theoretical. A growing body of empirical research now quantifies its effect across multiple performance indicators, giving assessment centres concrete benchmarks to work toward.

Benchmarks from recent studies report that AI-generated feedback produces a learning gain of Cohen's d = 0.56, a correlation of 0.847 with expert judgement on assessment accuracy, exam score improvements of up to 10%, and passing rate increases of approximately 15%. For essay grading specifically, a Quadratic Weighted Kappa of 0.68 indicates substantial agreement with human markers.

These are not marginal gains. A 15% improvement in passing rates can be the difference between a cohort succeeding and a training centre having to redesign its entire curriculum.
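
For centres that want to run the same kind of check on their own data, the sketch below shows how two of these statistics are conventionally calculated: Cohen's d with a pooled standard deviation, and Quadratic Weighted Kappa over an integer marking scale. The function names and argument layout are assumptions for illustration, and the cited studies may have used different variants of either formula.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(treatment, control):
    """Standardised mean difference using the pooled sample standard deviation."""
    n1, n2 = len(treatment), len(control)
    pooled_var = ((n1 - 1) * variance(treatment)
                  + (n2 - 1) * variance(control)) / (n1 + n2 - 2)
    return (mean(treatment) - mean(control)) / sqrt(pooled_var)


def quadratic_weighted_kappa(marks_a, marks_b, min_mark, max_mark):
    """Agreement between two markers on an integer scale from min_mark to max_mark."""
    k = max_mark - min_mark + 1
    if k < 2:
        raise ValueError("the scale needs at least two distinct marks")
    if len(marks_a) != len(marks_b) or not marks_a:
        raise ValueError("both markers must score the same non-empty set")

    # Confusion matrix of marker A (rows) against marker B (columns).
    observed = [[0] * k for _ in range(k)]
    for a, b in zip(marks_a, marks_b):
        observed[a - min_mark][b - min_mark] += 1

    n = len(marks_a)
    hist_a = [sum(row) for row in observed]
    hist_b = [sum(observed[i][j] for i in range(k)) for j in range(k)]

    weighted_observed = 0.0
    weighted_expected = 0.0
    for i in range(k):
        for j in range(k):
            weight = (i - j) ** 2 / (k - 1) ** 2  # larger gaps are penalised more
            weighted_observed += weight * observed[i][j]
            weighted_expected += weight * hist_a[i] * hist_b[j] / n

    if weighted_expected == 0:
        return 1.0  # both markers gave every script the same single mark
    return 1.0 - weighted_observed / weighted_expected
```

Passing AI marks as `marks_a` and human marks as `marks_b` gives a kappa of 1.0 when the two agree on every script and lower values as disagreements grow larger.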

Key outcome metrics, ranked by typical impact:

- Exam and assessment scores (up to 10% improvement with targeted AI feedback)

- Passing rates (15% increase documented in structured feedback trials)

- Learner engagement (higher when feedback is specific and timely)

- Revision and resubmission rates (increase when actionable steps are clearly stated)

- Long-term retention (process-level feedback supports knowledge transfer)

| Feedback type | Accuracy vs experts | Score improvement | Engagement |
|---|---|---|---|
| AI only | 0.847 correlation | Up to 10% | Moderate |
| Human only | Baseline | Baseline | High |
| Hybrid (AI + human) | Highest | Up to 10%+ | Highest |

The meta-analysis evidence reinforces that no single mechanism drives these gains. Rather, it is the combination of speed, specificity, and consistency that makes AI-assisted feedback effective. Human feedback is often richer in nuance, but it cannot scale at the same rate or guarantee the same consistency across a large cohort.

Actionable feedback is particularly important for sustaining growth over time. When learners know precisely what to adjust and why, they are more likely to revise meaningfully rather than simply resubmit with surface-level edits. It is worth looking at how feedback is improving outcomes in CIPD contexts, and comparing AI grading software options to find what fits your institution's model.

Proven strategies for delivering actionable feedback with AI

Knowing that detailed feedback works is one thing. Knowing how to produce it reliably at scale is another. The following strategies represent research-backed methodologies that assessment centres can implement within their existing AI-assisted workflows.

Best practice methodology identifies several key approaches: formative versus summative feedback structures, feedback delivered at task, process, and self-regulatory levels (as defined by Hattie and Timperley), knowledge graphs for diagnostic tracking, rubric-based prompting using zero-shot and chain-of-thought methods, and hybrid human-AI review loops.
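
Of the approaches named above, diagnostic tracking with a knowledge graph is perhaps the least self-explanatory, so here is a minimal sketch of the idea. It assumes a hand-written map of prerequisite links between concepts and a per-learner history of flagged concepts. The concept names, `PREREQUISITES`, and both functions are hypothetical, and a production knowledge graph would be considerably richer.

```python
from collections import defaultdict

# Hypothetical prerequisite edges: each concept maps to the concepts it builds on.
PREREQUISITES = {
    "critical evaluation": ["argument structure", "use of evidence"],
    "use of evidence": ["referencing"],
    "argument structure": [],
    "referencing": [],
}


def recurring_gaps(history, min_occurrences=2):
    """Return concepts flagged in at least min_occurrences submissions.

    history is a list of sets, one per submission, each holding the
    concepts the feedback flagged as weak in that submission.
    """
    counts = defaultdict(int)
    for flagged in history:
        for concept in flagged:
            counts[concept] += 1
    return sorted(c for c, n in counts.items() if n >= min_occurrences)


def underlying_concepts(concept, prerequisites=PREREQUISITES):
    """Walk the prerequisite edges to list everything the concept depends on."""
    found = set()
    stack = list(prerequisites.get(concept, []))
    while stack:
        current = stack.pop()
        if current not in found:
            found.add(current)
            stack.extend(prerequisites.get(current, []))
    return sorted(found)
```

If a learner is repeatedly flagged on one concept, `underlying_concepts` suggests which earlier concepts the feedback could revisit, which is what makes the approach diagnostic rather than reactive.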

Strategies to build into your feedback pipeline:

- Use formative feedback during the learning process to guide revision before a final submission is graded

- Structure feedback prompts around rubric criteria so AI outputs map directly to assessment standards

- Apply chain-of-thought prompting to encourage AI tools to reason through responses rather than generate surface-level commentary

- Implement knowledge graphs to track which concepts a learner struggles with across multiple submissions, enabling diagnostic rather than reactive feedback

- Design hybrid review loops where AI produces the first draft of feedback and a human assessor reviews flagged submissions
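
To make the rubric and chain-of-thought strategies above more concrete, the sketch below assembles a zero-shot prompt that lists the rubric criteria and asks the model to reason through each one before suggesting next steps. The function name, the prompt wording, and the rubric format (a dictionary of criterion names and descriptors) are all assumptions for illustration. The result is a plain string that could be passed to whichever AI tool a centre uses.

```python
def build_feedback_prompt(rubric, submission):
    """Build a zero-shot, chain-of-thought feedback prompt.

    rubric maps each criterion name to its descriptor; submission is the
    learner's text. No worked examples are included, hence zero-shot.
    """
    criteria = "\n".join(f"- {name}: {descriptor}"
                         for name, descriptor in rubric.items())
    return (
        "You are assisting a human assessor. Use only the rubric below.\n\n"
        f"Rubric criteria:\n{criteria}\n\n"
        f"Submission:\n{submission}\n\n"
        "Work through the submission step by step:\n"
        "1. For each criterion, quote the most relevant passage.\n"
        "2. Explain how that passage meets or falls short of the criterion.\n"
        "3. Only then give one concrete next step for the learner.\n"
        "If you are unsure about a criterion, say so rather than guessing."
    )
```

Keeping the criteria in the prompt itself means each comment can be traced back to a named rubric line, and asking for the reasoning before the recommendation is what distinguishes this from a request for a bare mark.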

A practical process for designing a feedback pipeline:

- Define rubric criteria clearly before configuring AI prompts

- Test AI outputs against a sample of previously human-marked submissions

- Set confidence thresholds to trigger mandatory human review for low-certainty responses

- Establish a review cadence so assessors check flagged submissions within 24 to 48 hours

- Collect learner response data to monitor whether feedback is being used to revise effectively
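
Two of the steps above, testing AI output against human-marked samples and routing low-certainty responses to an assessor, can be sketched in a few lines. The `AiResult` fields, the 0.8 threshold, and the one-mark tolerance are assumptions for illustration. Real thresholds would need to be calibrated against a centre's own data.

```python
from dataclasses import dataclass


@dataclass
class AiResult:
    submission_id: str
    mark: int
    confidence: float  # 0.0 to 1.0, assumed to be reported by the AI tool


def triage(results, threshold=0.8):
    """Split submission ids into auto-released and human-review lists."""
    auto_release, human_review = [], []
    for result in results:
        if result.confidence < threshold:
            human_review.append(result.submission_id)
        else:
            auto_release.append(result.submission_id)
    return auto_release, human_review


def agreement_rate(ai_marks, human_marks, tolerance=1):
    """Share of scripts where the AI mark is within tolerance of the human mark."""
    if len(ai_marks) != len(human_marks) or not ai_marks:
        raise ValueError("mark lists must be non-empty and the same length")
    within = sum(1 for ai, human in zip(ai_marks, human_marks)
                 if abs(ai - human) <= tolerance)
    return within / len(ai_marks)
```

Running `agreement_rate` on previously human-marked scripts before go-live gives a baseline, and the ids returned in the human-review list are the ones the review cadence would need to cover.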

Pro Tip: For edge cases, such as highly creative responses or submissions with an unusual structure, always route to a human assessor. AI tools handle standard formats well but benefit from human context when patterns fall outside their training range. Ethical feedback strategies offer additional guidance on responsible deployment.

Review accurate AI assessment practices for technical configuration guidance, and consider how AI integration principles align with your institutional values before deploying at scale.

Addressing limitations and ensuring quality feedback

AI-assisted feedback brings genuine advantages, but it also carries risks that institutions must acknowledge and manage deliberately. Ignoring these risks does not eliminate them. It simply means they surface at the worst possible moment.

AI grading carries several risks: hallucinations, where the AI generates plausible but incorrect commentary on novel errors; overreliance, where learners stop revising because they assume the AI has caught everything; algorithmic bias that may disadvantage certain learner groups; and a lighter workload for human assessors that can paradoxically reduce their attentiveness over time.

Hybrid models are strongly recommended precisely because AI excels in speed and consistency but carries real risks around relationship erosion and algorithmic bias. A purely automated approach risks reducing the assessor-learner relationship to a transactional exchange, which undermines the trust and motivation that make feedback effective in the first place.

"Hybrid approaches that blend AI efficiency with human judgement consistently outperform either method alone, both in accuracy and in learner satisfaction."

Mitigation strategies for assessment centres:

- Implement structured human review for a random sample of every cohort's submissions, not just flagged ones

- Communicate clearly with learners about how AI is used in their assessment process, including what it can and cannot do

- Conduct regular bias audits across demographic groups to identify patterns in how feedback varies by learner background

- Retain teacher involvement in feedback conversations, particularly for resubmissions and borderline cases

- Review privacy compliance continuously to ensure AI tools meet GDPR and UK data protection requirements
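
The random-sample review and bias audit described above could start from something as simple as the sketch below. It draws a reproducible random sample of submissions for human review and compares mean AI marks across learner groups. The function names, the 10% default fraction, and the group labels are hypothetical. A gap in mean marks is only a prompt for closer investigation, not proof of bias in itself.

```python
import random
from collections import defaultdict
from statistics import mean


def sample_for_review(submission_ids, fraction=0.1, seed=None):
    """Pick a random share of all submissions, flagged or not, for human review."""
    ids = list(submission_ids)
    if not ids:
        return []
    size = min(len(ids), max(1, round(len(ids) * fraction)))
    return random.Random(seed).sample(ids, size)


def mean_mark_by_group(records):
    """records is an iterable of (group_label, mark) pairs."""
    marks = defaultdict(list)
    for group, mark in records:
        marks[group].append(mark)
    return {group: mean(values) for group, values in marks.items()}


def largest_gap(group_means):
    """Difference between the highest and lowest group mean."""
    values = list(group_means.values())
    return max(values) - min(values)
```

Fixing `seed` makes the sample repeatable for audit purposes, while leaving it unset gives a fresh draw for each cohort.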

For further reading, explore feedback reliability and compliance, AI ethics in assessment, and the specific role of human review in grading within a responsible AI workflow.

A fresh perspective on feedback: prioritising uptake over automation

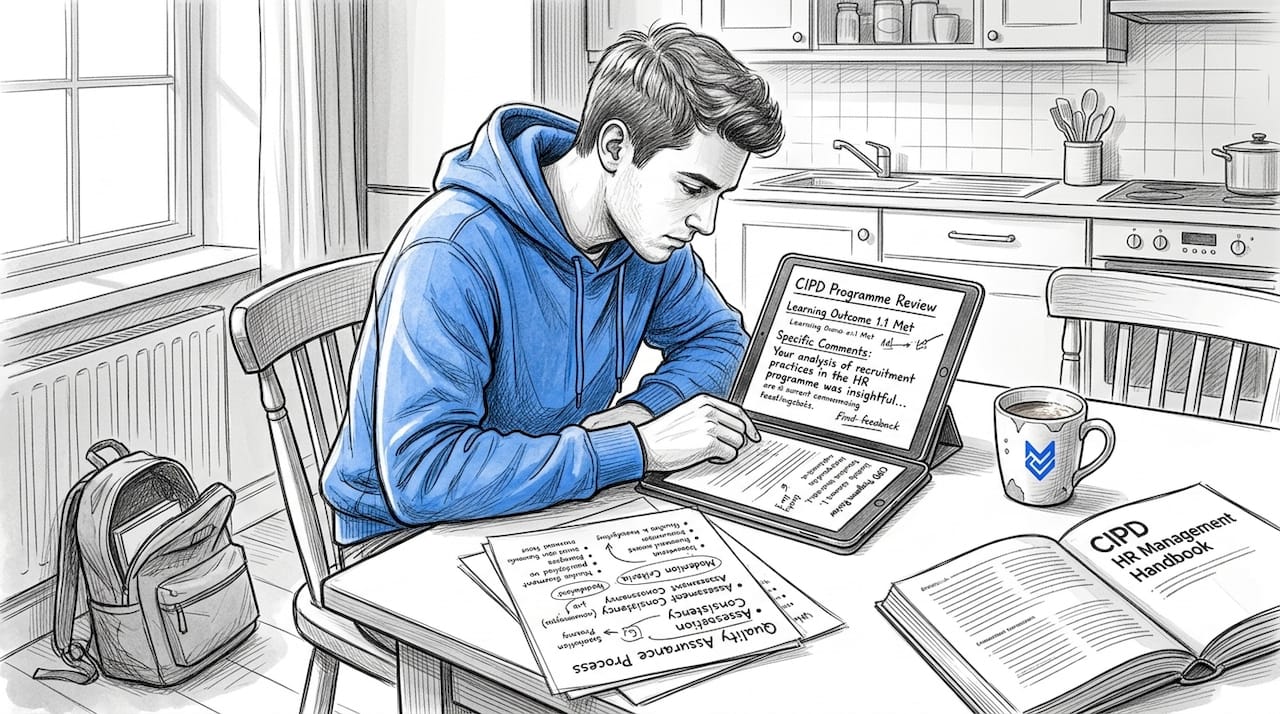

Here is something the research quietly confirms but most technology conversations understate: the quality of feedback is only half the equation. The other half is whether learners actually use it.

AI can produce timely, specific, actionable, balanced feedback at a scale no human team could match. But it cannot make a learner read their comments, reflect on them, or change their approach. Student uptake and engagement are the variables that ultimately determine whether detailed feedback translates into improved outcomes, and these depend on factors that no algorithm controls.

This is the uncomfortable truth: institutions that invest heavily in automating feedback generation but neglect the structures that encourage learner engagement with that feedback will see modest gains at best. A learner who glances at a mark and ignores three paragraphs of actionable commentary has received no benefit whatsoever from the sophistication of the system that produced it.

The solution is not less automation. It is pairing automation with deliberate structures for feedback dialogue. Scheduled follow-up check-ins, short reflection prompts embedded after feedback is delivered, and assessor availability for questions all increase the likelihood that feedback is absorbed and acted upon. Feedback is a conversation, not a delivery mechanism. AI can open the conversation, but institutions must create the conditions for it to continue. That is where the value of feedback for CIPD outcomes is most fully realised.

Transform your assessment outcomes with AI-powered feedback

The evidence is clear: detailed, structured, timely feedback drives measurable improvements in learner performance. The challenge lies in delivering it consistently, at scale, without compromising quality or compliance.

AI-assisted marking from EduMark combines advanced AI feedback generation with built-in human oversight, structured rubric alignment, inline commenting, and full GDPR-compliant data handling. Assessment centres and training providers using EduMark benefit from faster turnaround times, greater consistency across markers, and feedback that genuinely supports learner progression. If you are ready to move from principles to practical, scalable results, EduMark is built precisely for that transition.

Frequently asked questions

Why is detailed feedback more effective than brief scores?

Detailed feedback guides learners on exactly what to improve and how to do it, producing measurable gains that brief scores cannot achieve. Timely, actionable feedback carries an effect size of 0.73, far exceeding most other educational interventions.

How does AI feedback compare to human feedback?

AI feedback achieves a correlation of 0.847 with expert human judgement, making it highly accurate, though human review remains valuable for nuanced cases and maintaining learner confidence.

What are the main risks of relying solely on AI for feedback?

Hybrid models are recommended because sole reliance on AI risks algorithmic bias, hallucinated errors, reduced learner revision behaviour, and erosion of the trust that underpins the assessor-learner relationship.

How can institutions maximise the benefits of detailed feedback using AI?

Blend AI efficiency with structured teacher oversight, use rubric-aligned feedback frameworks, and create deliberate engagement opportunities so learners act on the feedback they receive. Student engagement is the factor most likely to determine whether detailed feedback translates into real improvement.