TL;DR:

- AI-assisted marking requires human oversight, validated tools, and documented institutional policies.

- GDPR compliance and informed student consent are essential for ethical AI evaluation processes.

- Continuous risk management, regular audits, and a living compliance checklist ensure assessment integrity.

AI is reshaping how CIPD training providers assess learners, offering speed, consistency, and scalable feedback. Yet for compliance officers and assessment managers, the stakes are exceptionally high. Regulatory frameworks such as Ofqual's principles, the EU AI Act, and GDPR create a complex landscape where a single misstep can compromise both institutional reputation and learner trust. This guide cuts through the complexity with a practical, itemised checklist covering every critical compliance dimension: from regulatory baselines and consent protocols to ongoing risk oversight. If you are responsible for assessment integrity, this is the framework you need.

Table of Contents

- Core compliance requirements for AI-assisted marking

- Consent, privacy, and transparency: essential steps

- Operational risk management and continuous oversight

- Building and maintaining your compliance checklist

- Expert perspective: what most compliance checklists miss

- Harness compliance confidence with leading AI marking solutions

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Human oversight is mandatory | Regulations require humans to supervise and validate AI-supported marking decisions. |
| Consent and privacy first | Only use AI tools with explicit student consent and compliance with data protection laws. |
| Continuous risk management | Regular risk assessment, documentation, and model reviews are essential for ongoing compliance. |
| Checklists drive audit readiness | Structured, regularly updated checklists simplify compliance and support successful audit outcomes. |

Core compliance requirements for AI-assisted marking

With the compliance challenge firmly in mind, let us clarify the non-negotiable rules every CIPD provider must follow before introducing AI into any marking workflow.

The compliance baseline rests on three pillars: explicit human oversight, validated tools, and documented institutional policies. None of these are optional. Human oversight is essential in all AI-assisted marking, meaning AI cannot independently determine final marks or judgements. This is not a suggestion. It is a foundational principle shared across all reputable guidance bodies.

"AI cannot be the sole marking mechanism for high-stakes qualifications. Safety, transparency, and fairness must be upheld at every stage." — Ofqual AI marking principles

From a regulatory standpoint, your institution must align with three frameworks simultaneously: Ofqual's principles, the EU AI Act, and GDPR. Treating them as separate tasks is a common and costly mistake. Instead, build a single integrated policy that satisfies all three at once.

Here is your core compliance checklist for this stage:

- Institutional approval: Confirm your AI marking tool has been formally approved by your organisation's governance body before deployment.

- Human review mandate: Every AI-generated mark or feedback output must be reviewed and signed off by a qualified assessor.

- Tool validation: Verify the tool has been independently tested for accuracy, bias, and reliability within your specific assessment context.

- Policy documentation: Maintain written policies covering AI tool selection, usage boundaries, and escalation procedures.

- Assessor training: Ensure all staff using AI marking tools have completed structured training on both the tool itself and its limitations.

- Audit trail: Keep records of every AI-assisted marking decision, including the human reviewer's final judgement.
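The human review mandate and audit trail items above can be reflected in how marking records are stored. The following is a minimal Python sketch rather than a prescribed implementation: the `MarkingRecord` class, its field names, the `sign_off` method, and the "Pass"/"Refer" labels are all hypothetical, chosen only for illustration. It shows one way to make sure an AI-suggested mark never counts as final until a named assessor has recorded a judgement.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class MarkingRecord:
    """One audit-trail entry for an AI-assisted marking decision (illustrative)."""
    submission_id: str
    ai_suggested_mark: str            # advisory only; labels such as "Pass" are assumptions
    ai_rationale: str
    final_mark: Optional[str] = None
    reviewer: Optional[str] = None
    reviewed_at: Optional[datetime] = None

    def sign_off(self, reviewer: str, final_mark: str) -> None:
        """Record the human assessor's final judgement."""
        if not reviewer.strip():
            raise ValueError("A named assessor is required to finalise a mark")
        self.reviewer = reviewer
        self.final_mark = final_mark
        self.reviewed_at = datetime.now(timezone.utc)

    @property
    def is_final(self) -> bool:
        # A record counts as final only once a human has signed it off.
        return self.reviewer is not None and self.final_mark is not None

    @property
    def was_overridden(self) -> bool:
        # True when the assessor's judgement differs from the AI suggestion.
        return self.is_final and self.final_mark != self.ai_suggested_mark


record = MarkingRecord("SUB-001", ai_suggested_mark="Pass", ai_rationale="Meets all criteria")
record.sign_off(reviewer="A. Assessor", final_mark="Refer")
```

Keeping the AI suggestion and the human decision in separate fields means overrides remain visible in the audit trail instead of being silently overwritten.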

Understanding AI ethics in education is equally important for building policy frameworks that hold up to scrutiny. Knowing what accurate grading means in practice also helps your assessors apply appropriate scepticism to AI outputs. The importance of human review cannot be overstated when it comes to protecting both learners and institutions.

Consent, privacy, and transparency: essential steps

Once the baseline is secured, safeguarding privacy and gaining informed consent are the next priorities. This area is where many providers make avoidable errors, often because they treat GDPR compliance as a tick-box exercise rather than an ongoing commitment.

The rules here are unambiguous. You must use only approved tools that comply with GDPR and institutional privacy regulations, and you must never upload student work without explicit consent. Furthermore, transparency with students about AI use in marking and feedback processes is a clear best practice expectation.

Follow these steps in sequence:

- Map your data flows: Identify exactly what student data is processed, where it is stored, and how long it is retained within the AI tool.

- Vet your tools: Confirm GDPR compliance through supplier documentation, including Data Processing Agreements.

- Design your consent process: Create clear, plain-English consent forms that explain AI use, data handling, and opt-out rights.

- Communicate proactively: Inform learners at enrolment and again at assessment submission that AI may assist in marking their work.

- Implement opt-out protocols: Establish a clear procedure for learners who decline AI-assisted marking, ensuring this does not disadvantage them.

- Handle special cases: Maintain documented procedures for learners with protected characteristics or those whose work involves sensitive data.

- Train your staff: Run regular briefings so that all assessors and administrators understand both the consent process and the rationale behind it.
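The consent, opt-out, and special-case steps above amount to a gate that runs before any student work reaches an AI tool. The sketch below is one possible way to express that gate in Python. The `ConsentRecord` fields, the `MarkingRoute` values, and the choice to send sensitive work to human-only marking are assumptions for illustration; your own documented procedures take precedence.

```python
from dataclasses import dataclass
from enum import Enum


class MarkingRoute(Enum):
    AI_ASSISTED = "ai_assisted_with_human_review"
    HUMAN_ONLY = "human_only"


@dataclass(frozen=True)
class ConsentRecord:
    learner_id: str
    consent_given: bool       # explicit, recorded consent to AI-assisted marking
    opted_out: bool = False   # learner declined or later withdrew consent


def choose_route(consent: ConsentRecord, contains_sensitive_data: bool = False) -> MarkingRoute:
    """Return the marking route; anything short of clear consent goes to a human."""
    if not consent.consent_given or consent.opted_out:
        return MarkingRoute.HUMAN_ONLY
    if contains_sensitive_data:
        # Assumed policy: special cases are marked by a human only.
        return MarkingRoute.HUMAN_ONLY
    return MarkingRoute.AI_ASSISTED
```

Because the default outcome is human-only marking, a missing or ambiguous consent record cannot result in work being uploaded by accident.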

Pro Tip: Prepare a standard consent form template and a brief explainer document for learners. Review these every six months or whenever regulations or tool providers change. This reduces administrative burden significantly and keeps your consent records audit-ready.

More detailed guidance on navigating AI feedback and compliance in UK education contexts provides practical frameworks directly applicable to CIPD providers.

Operational risk management and continuous oversight

With informed consent and transparency in place, managing ongoing operational risk is what keeps your systems compliant and defensible when regulators come knocking.

AI-assisted assessment is classified as high-risk under Annex III, point 3 (education and vocational training) of the EU AI Act, which means your institution must conduct formal risk assessments, maintain rigorous data governance, and ensure human override capability at every decision point. This is not bureaucracy for its own sake. It is the foundation of a system that can withstand scrutiny.

Importantly, no empirical benchmarks exist for pass rates or error rates in AI-assisted marking. This means you cannot rely on raw accuracy metrics alone. Validity frameworks, moderation processes, and human judgement are your actual quality controls.

The following comparison illustrates how different oversight models perform across key compliance dimensions:

| Oversight model | Compliance strength | Audit defensibility | Efficiency | Risk of error |
|---|---|---|---|---|
| Human-led only | Very high | Very high | Low | Low |
| AI-led only | Very low | Very low | Very high | High |
| Hybrid (recommended) | High | High | High | Low to medium |

A hybrid model allocates routine, lower-stakes feedback tasks to AI while reserving final marking decisions and complex cases for qualified assessors. This balances operational efficiency with the integrity demands of CIPD qualifications.
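The allocation rule behind a hybrid model can be written down explicitly so that it is reviewable. The following Python sketch is illustrative only: the task names in `ROUTINE_TASKS`, the `Owner` values, and the `allocate` function are hypothetical and would need to match your own workflow. It gives AI only recognised routine tasks and defaults everything else to a qualified assessor.

```python
from enum import Enum


class Owner(Enum):
    AI_DRAFT = "ai_draft_then_human_review"
    ASSESSOR = "qualified_assessor"


# Hypothetical task categories; adapt to your own assessment workflow.
ROUTINE_TASKS = {"formative_feedback", "referencing_check", "word_count_check"}


def allocate(task_type: str, is_complex_case: bool = False) -> Owner:
    """Give AI only routine, lower-stakes tasks; everything else stays with assessors."""
    if is_complex_case:
        return Owner.ASSESSOR
    if task_type in ROUTINE_TASKS:
        return Owner.AI_DRAFT
    # Final marking decisions and any unrecognised task default to a human.
    return Owner.ASSESSOR
```

Defaulting unknown task types to an assessor is a deliberate design choice here: a new task has to be added to the routine list on purpose before AI can handle it.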

Your operational risk checklist should include:

- Formal risk assessment completed and documented before deployment.

- Defined escalation pathways for borderline, contested, or high-stakes decisions.

- Regular moderation cycles, including second-marking samples and cross-assessor calibration.

- Incident reporting procedures for errors or unexpected AI outputs.

- Scheduled review of the tool's performance against your validity framework.
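The moderation item in the list above can be supported by a reproducible way of choosing second-marking samples. The Python sketch below assumes a simple random sample and a basic agreement rate; the function names, the sampling fraction, and the seed are hypothetical, and the agreement rate is one signal for calibration discussions, not a benchmark.

```python
import random
from typing import List, Tuple


def second_marking_sample(submission_ids: List[str], fraction: float, seed: int) -> List[str]:
    """Pick a reproducible random sample of submissions for second marking."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be greater than 0 and at most 1")
    if not submission_ids:
        return []
    size = max(1, round(len(submission_ids) * fraction))
    rng = random.Random(seed)  # fixed seed so the sample can be re-derived at audit
    return sorted(rng.sample(submission_ids, size))


def agreement_rate(pairs: List[Tuple[str, str]]) -> float:
    """Share of sampled scripts where first and second marker gave the same outcome."""
    if not pairs:
        raise ValueError("no marked pairs supplied")
    return sum(1 for first, second in pairs if first == second) / len(pairs)
```

Recording the seed alongside the moderation minutes lets a reviewer confirm later that the sample was not hand-picked.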

Guidance on AI reliability and compliance, and on strategies to improve marking accuracy, will strengthen your operational controls considerably.

Building and maintaining your compliance checklist

To ensure all bases are covered, formalising your checklist is critical for ongoing compliance and audit readiness. A checklist only works if it is a living document, not a one-time exercise filed away and forgotten.

Hybrid models that assign AI to routine tasks while keeping humans in oversight roles represent the most defensible and efficient approach available to CIPD providers today.

Here is a sample compliance checklist table to adapt for your institution:

| Checklist item | Purpose | Evidence of control | Review timeline |
|---|---|---|---|
| Institutional tool approval | Governance alignment | Approval letter or board minutes | Annual or on tool change |
| GDPR Data Processing Agreement | Legal compliance | Signed supplier DPA | Annual or on contract renewal |
| Learner consent records | Privacy protection | Signed consent forms | Each cohort |
| Assessor training completion | Competency assurance | Training records | Each academic year |
| Human review sign-off logs | Integrity evidence | Marked scripts with reviewer name | Each assessment cycle |
| Risk assessment documentation | Regulatory compliance | Completed risk register | Bi-annual or on incident |
| Moderation and calibration records | Quality assurance | Moderation meeting minutes | Each assessment cycle |
| Incident and override log | Audit trail | Incident register | Ongoing |

Review triggers are just as important as the checklist itself. Initiate a full review whenever you encounter a significant tool update, a regulatory change, a policy amendment from CIPD or Ofqual, or any incident involving an AI marking error or learner complaint.
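A living checklist combines scheduled reviews with the event-driven triggers described above. The Python sketch below is one way to model that, under stated assumptions: the `ChecklistItem` fields, the trigger names in `REVIEW_TRIGGERS`, and the use of a fixed interval in days are all hypothetical simplifications.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Set


@dataclass
class ChecklistItem:
    name: str
    last_reviewed: date
    review_interval_days: int


# Event-driven triggers named in the text above (identifiers are illustrative).
REVIEW_TRIGGERS = {
    "tool_update", "regulatory_change", "policy_amendment",
    "marking_incident", "learner_complaint",
}


def items_due(items: List[ChecklistItem], today: date, events: Set[str]) -> List[str]:
    """Return item names needing review: all of them after a trigger, else overdue ones."""
    unknown = events - REVIEW_TRIGGERS
    if unknown:
        raise ValueError(f"Unrecognised trigger(s): {sorted(unknown)}")
    if events:
        return [item.name for item in items]  # any trigger prompts a full review
    return [
        item.name
        for item in items
        if today - item.last_reviewed >= timedelta(days=item.review_interval_days)
    ]
```

For example, calling `items_due(items, date.today(), {"tool_update"})` returns every item, while an empty set of events returns only those past their scheduled interval.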

Pro Tip: Move your checklist to a shared digital workspace such as a compliance management platform or a structured spreadsheet with version control. This makes it significantly easier to pull evidence together when an audit or external quality review arrives unexpectedly.

A fully developed educator compliance checklist tailored to CIPD contexts, together with a deeper look at assessment principles and AI, will help you build a more robust framework.

Expert perspective: what most compliance checklists miss

Having built your checklist, it is worth stepping back to challenge a few assumptions that are widespread in the sector.

Most compliance frameworks focus heavily on documentation and process. They treat human oversight as a checkbox: assign a reviewer, log the decision, move on. But the real risk is cultural, not procedural. Institutions that treat compliance as an administrative burden rather than a genuine commitment to fairness are the ones that face the most damaging failures when things go wrong.

The tension between automation and professional judgement is not resolved by policy alone. Assessors need to feel genuinely empowered to override AI outputs, not subtly pressured to defer to them for speed or consistency. That distinction matters enormously in practice.

There are also missed opportunities that almost no checklist addresses. Proactive dialogue with learners about AI use, regular bias audits of AI outputs across different learner demographics, and scenario-based drills to test your escalation procedures are all rare but genuinely valuable practices. Understanding what accurate AI assessment actually requires reveals just how much judgement remains irreducibly human.
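A basic bias audit of the kind mentioned above could start by comparing AI-suggested results with assessors' final results across learner groups. The Python sketch below assumes numeric scores and uses hypothetical group labels, function names, and a locally agreed tolerance; none of these come from a regulator. A flagged gap is a prompt for human investigation, not proof of bias.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List, Tuple


def mean_ai_human_gap(rows: Iterable[Tuple[str, float, float]]) -> Dict[str, float]:
    """Average (AI score minus human score) per learner group."""
    gaps = defaultdict(list)
    for group, ai_score, human_score in rows:
        gaps[group].append(ai_score - human_score)
    return {group: mean(values) for group, values in gaps.items()}


def groups_to_investigate(gaps: Dict[str, float], tolerance: float) -> List[str]:
    """Flag groups whose average gap exceeds a locally agreed tolerance."""
    return sorted(group for group, gap in gaps.items() if abs(gap) > tolerance)


# Illustrative data only: (group label, AI score, human score)
rows = [("A", 60.0, 60.0), ("A", 62.0, 64.0), ("B", 55.0, 61.0), ("B", 50.0, 54.0)]
gaps = mean_ai_human_gap(rows)
flagged = groups_to_investigate(gaps, tolerance=3.0)
```

With small cohorts, averages like these are noisy, so any flagged group should be reviewed by moderators before conclusions are drawn.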

The uncomfortable truth is this: regulators inspect frameworks, but they judge institutions on lived integrity. The distance between those two things is where reputational risk lives.

Harness compliance confidence with leading AI marking solutions

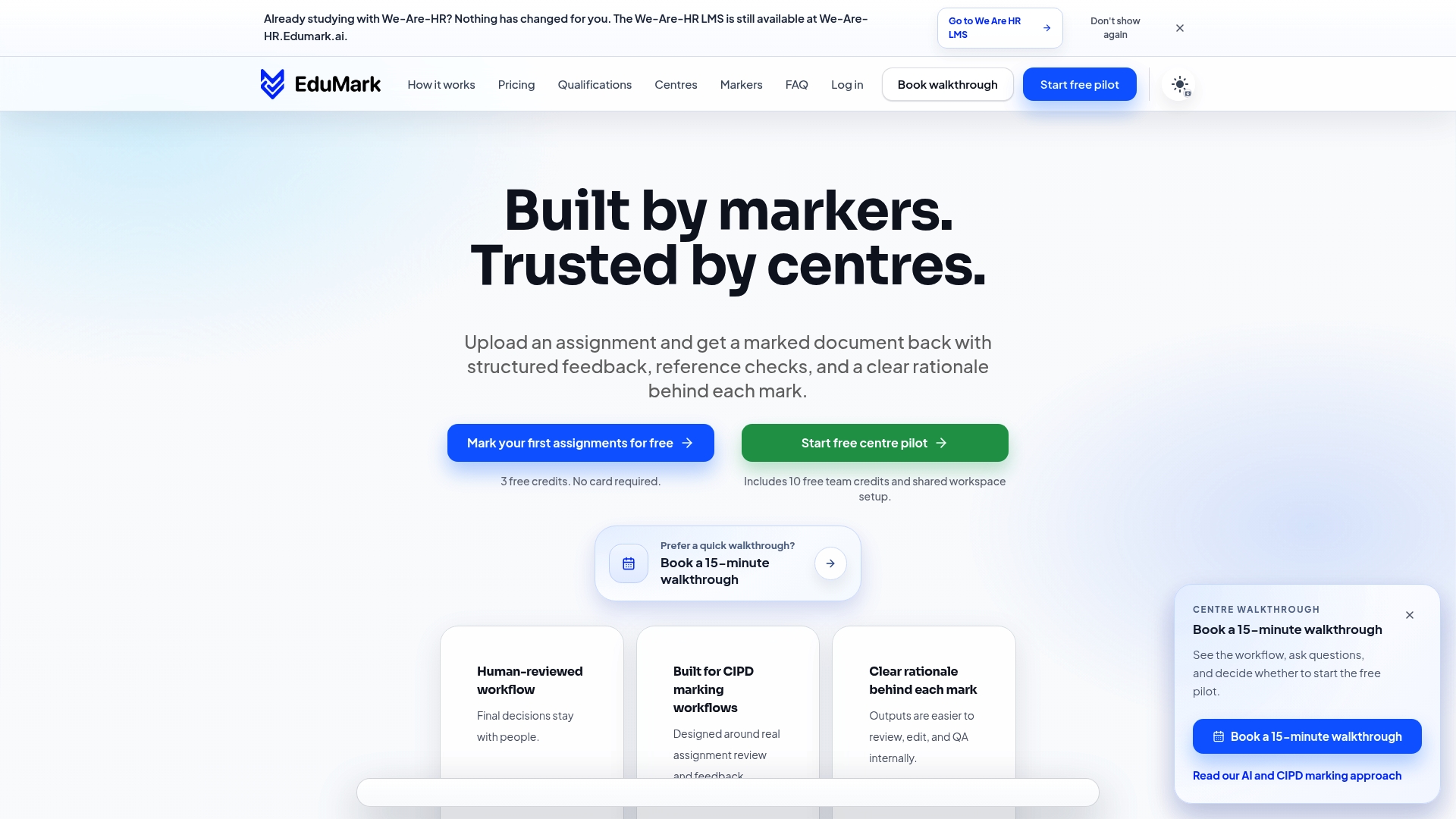

If you are ready to move from checklist to implementation, EduMark.ai offers an AI-assisted CIPD marking platform built around every compliance touchstone covered in this article.

EduMark's workflow is designed with GDPR-aligned data handling, transparent mark rationale, confidence scoring, and structured human review at its core. Every submission is processed within a human-reviewed, AI-supported environment, directly reflecting Ofqual and EU AI Act principles. Whether you are scaling up your assessment operations or seeking more consistent feedback quality, EduMark gives you the tools to improve accuracy with AI without compromising integrity. Compliance confidence is not a destination. It is a practice, and EduMark is built to support it every step of the way.

Frequently asked questions

Can AI ever be used as the sole marker for CIPD assessments?

No. Ofqual's AI marking principles require human oversight in all high-stakes educational assessments, and CIPD qualifications fall squarely within that category.

Do I need student consent to use AI marking tools on their assignments?

Yes. Explicit consent is required before uploading student work to any AI platform, in line with GDPR and your institution's own data protection policy.

Are there set benchmarks for accuracy in AI marking systems?

No definitive benchmarks exist, so validity frameworks and continual human oversight matter far more than chasing a particular accuracy percentage.

What triggers a review or update of my compliance checklist?

Reviews should be triggered by significant tool updates, regulatory changes, policy amendments from CIPD or Ofqual, or any incident involving an AI marking error or learner complaint. Hybrid oversight models need particular attention during transition periods.